Systematic Literature Review vs Scoping Review: Which Works for Your Research?

Choosing the wrong review type is one of the most costly mistakes a researcher can make. The cost is not a few wasted hours: it can invalidate months of work, frustrate peer reviewers, and derail a doctoral project. If you’ve been staring at a blank protocol document wondering whether your research question calls for a systematic review or a scoping review, you’re not alone. The two methodologies sit side by side in the research methodology guides most universities recommend, yet they serve fundamentally different purposes.

Here’s the thing most methodology textbooks gloss over: the choice isn’t about which approach sounds more rigorous. It’s about which one honestly fits what you’re trying to find out. Get that alignment right, and everything downstream — search strategy, eligibility criteria, data extraction, reporting standards — falls into place.

Defining Both Review Types: Core Concepts in Academic Research Methodology

Before comparing the two, it’s worth being precise about what each actually is — because the casual use of “literature review” in academic writing has muddied the waters considerably.

A systematic literature review is a structured, reproducible synthesis of all available evidence on a focused research question. It follows a pre-registered protocol, applies explicit eligibility criteria, critically appraises included studies for methodological quality, and synthesises findings — often quantitatively via meta-analysis. Cochrane and the Centre for Reviews and Dissemination (CRD) at the University of York are the gold-standard authorities on SLR methodology.

A scoping review is a form of evidence synthesis designed to map the extent, range, and nature of evidence on a topic. It does not typically assess methodological quality. Developed by Arksey and O’Malley in 2005 and refined by Levac, Colquhoun, and O’Brien in 2010, the scoping review framework is now standardised through the Joanna Briggs Institute (JBI) methodology.

The JBI Manual for Evidence Synthesis (2024 edition) — the closest thing the field has to a definitive rulebook — distinguishes clearly between these review types based on their objectives, not their methods. Both require systematic searching, documented eligibility criteria, and transparent reporting. What differs is the why behind each step.

What most people miss is that scoping reviews are not “easier” versions of systematic reviews. They answer different questions entirely. Treating one as a shortcut to the other is a methodological error that peer reviewers increasingly flag during manuscript assessment.

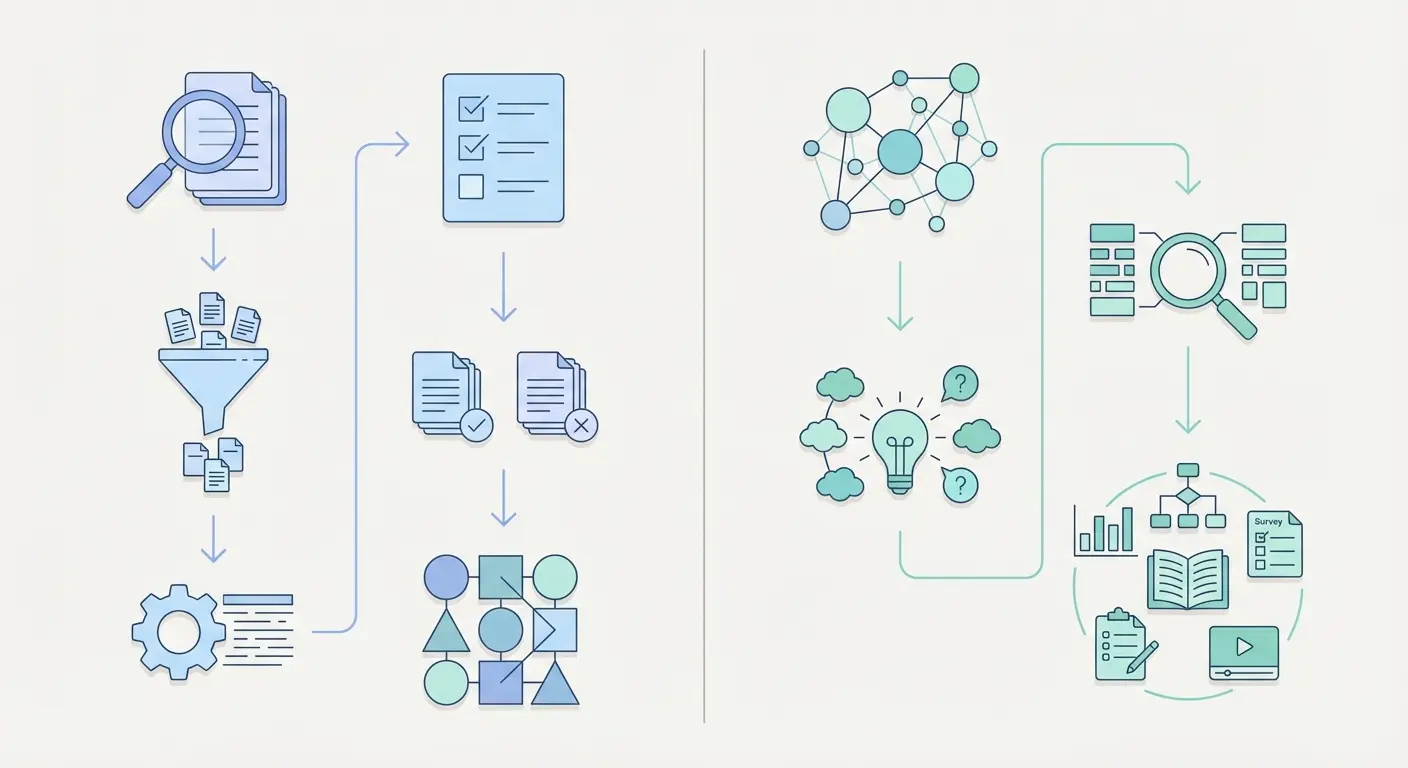

Key Differences: A Side-by-Side Comparison for Researchers

The table below consolidates the structural differences based on current JBI guidance, PRISMA reporting standards, and Cochrane Handbook recommendations. Print it. Bookmark it. Refer to it before you write a single line of your protocol.

| Feature | Systematic Literature Review | Scoping Review |
|---|---|---|
| Primary Purpose | Answer a specific question; synthesise evidence | Map evidence breadth; identify gaps and concepts |
| Research Question Type | Narrow, focused, PICO/PICOS formatted | Broad, exploratory, often multi-part |
| Quality Appraisal | Mandatory; uses validated tools (CASP, Cochrane RoB) | Not required (optional for context) |
| Synthesis Method | Meta-analysis or narrative synthesis; pooled conclusions | Descriptive/narrative; evidence mapping |
| Reporting Standard | PRISMA 2020 (27-item checklist) | PRISMA-ScR (22-item checklist: 20 essential + 2 optional) |
| Protocol Registration | Expected (PROSPERO); deviation must be justified | Recommended (Open Science Framework; PROSPERO does not register scoping reviews) |
| Study Design Eligibility | Usually restricted (e.g., RCTs only) | Typically broad; all study designs considered |
| Typical Timeframe | 12–24 months for a rigorous SLR | 6–12 months, depending on scope |
| Conclusion Type | Definitive recommendations; clinical/policy guidance | Identifies gaps; recommends further research |
| When Field is Emerging | Often premature; insufficient primary studies | Ideal: maps what exists without synthesising prematurely |

When to Choose a Systematic Literature Review

A systematic review earns its complexity. The methodological overhead — pre-registered protocol, dual screening, quality appraisal, GRADE assessment — only pays off when the conditions are right. Here’s where it gets interesting: many researchers pursue SLRs when a scoping review would have served them better, then find themselves unable to draw any meaningful conclusions because the evidence base is too heterogeneous.

Choose a systematic review when:

- A focused, answerable question exists. If your question can be structured using PICO (Population, Intervention, Comparator, Outcome) or a derivative framework like PICOS or PEO, an SLR is likely appropriate. Vague questions produce unmanageable inclusion criteria.

- Sufficient primary research has already been published. As a rough rule of thumb rather than a formal threshold, a field with fewer than 15–20 primary studies on a specific question will often struggle to support a meaningful meta-analysis or a robust narrative synthesis.

- The goal is clinical, policy, or practice guidance. Cochrane systematic reviews inform NICE guidelines in the UK and AHRQ guidelines in the US precisely because they aggregate the best available evidence toward a decision-relevant conclusion.

- Methodological quality of studies needs critical appraisal. If you need to know not just what studies found, but whether to trust those findings, quality appraisal is non-negotiable — and that’s the SLR’s strength.

- A definitive answer — or the honest absence of one — is the output. The SLR’s conclusion might be “the evidence is insufficient to recommend X,” which is itself a policy-relevant finding.

For doctoral candidates, it’s also worth noting that supervisors and examination boards increasingly expect SLRs to be registered on PROSPERO before data extraction begins. Post-hoc registration is a red flag. If you want practical guidance on designing your overall research strategy before committing to a review type, the Research Methodology Guide 2026 covers paradigm selection, study design, and ethics frameworks that feed directly into review planning.

When to Choose a Scoping Review

Scoping reviews have moved from methodological curiosity to mainstream research output faster than almost any other synthesis method. A 2021 paper in Systematic Reviews (BMC) documented exponential growth in published scoping reviews — a trend that reflects genuine demand rather than methodological fashion.

The question researchers need to ask honestly: Am I trying to answer a question, or am I trying to understand a territory?

Choose a scoping review when:

- The research question is broad or exploratory. “What interventions have been studied for improving academic integrity in higher education?” is a scoping question. “Do honour codes reduce plagiarism rates in undergraduate students?” is a systematic review question.

- The field is new or rapidly evolving. Emerging topics — think AI-generated text detection, post-pandemic pedagogy, or new diagnostic technologies — benefit from evidence mapping before anyone attempts synthesis.

- You need to identify gaps before commissioning primary research. Funding bodies, including NIHR in the UK and NIH in the US, routinely require scoping reviews as prerequisites for larger research programmes.

- The evidence base is heterogeneous by design. When the relevant literature spans disciplines, methodologies, and grey literature sources, the quality-appraisal requirement of SLRs becomes a barrier rather than a filter.

- Conceptual clarification is the goal. If key terms in your field are used inconsistently across studies — a problem rampant in social sciences and education research — a scoping review can chart definitional variation systematically.

Watch this Evidence Synthesis Ireland presentation by Heather Colquhoun on scoping review steps and methodology — it’s one of the clearest 30-minute explanations available, and Colquhoun co-authored the seminal 2010 framework refinement paper.

A counterintuitive insight worth sitting with: choosing a scoping review is sometimes the more rigorous decision, not the less rigorous one. Conducting an SLR when a scoping review is methodologically appropriate produces an artificial narrowing of the evidence that distorts the true state of a field.

PRISMA Reporting Standards: SLR vs Scoping Reviews in Academic Research

Reporting transparency is where the rubber meets the road. Both review types have dedicated PRISMA frameworks, and journals are increasingly rejecting manuscripts that don’t adhere to the correct one.

For systematic reviews: PRISMA 2020 (Page et al., 2021) is the current standard. The 27-item checklist covers protocol registration, search strategy documentation (including database names, search strings, and date ranges), PRISMA flow diagram, risk of bias assessment, synthesis methods, and certainty of evidence using GRADE. Cochrane’s Handbook for Systematic Reviews of Interventions (Version 6.4) remains the most authoritative operational guide.

For scoping reviews: The PRISMA Extension for Scoping Reviews (PRISMA-ScR), published by Tricco et al. in 2018, is the standard reporting framework. Its checklist has 22 items: 20 essential reporting items and 2 optional items, both of which concern critical appraisal of the included sources. The PRISMA-ScR fillable checklist is available directly from the PRISMA statement site. Download it and complete it alongside your manuscript, not after.

What both frameworks share:

- A PRISMA flow diagram showing records identified, screened, assessed for eligibility, and included

- Full documentation of database search strings (reproducibility requirement)

- Clear eligibility criteria stated in advance

- Dual independent screening, ideally with documented inter-rater agreement (a Cohen’s kappa of at least 0.60 is a commonly cited threshold)
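If you want to see where a kappa figure comes from, here is a minimal sketch in plain Python. Cohen’s kappa compares the agreement two reviewers actually reached with the agreement they would be expected to reach by chance: kappa = (observed − expected) / (1 − expected). The function name `cohens_kappa` and the ten screening decisions are illustrative assumptions, not data from any real review.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical decisions on the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must screen the same non-empty set of records")
    n = len(rater_a)
    # Proportion of records on which the two raters made the same decision
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's own label frequencies
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up title/abstract screening decisions for ten records
reviewer_1 = ["include", "include", "exclude", "exclude", "include",
              "exclude", "exclude", "exclude", "include", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude", "include",
              "exclude", "exclude", "include", "include", "exclude"]

kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"kappa = {kappa:.2f}")  # kappa = 0.58
```

Here the reviewers agree on 8 of 10 records, yet kappa lands just under 0.60 because a good share of that agreement would be expected by chance. In practice a team in this position would usually discuss the disagreements and tighten the eligibility criteria before screening the full set.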

For deep guidance on making your search strategy reproducible and your PRISMA documentation audit-ready, the Research Methodology Reproducibility Tips resource covers transparent reporting, data management, and documented search strategies for both review types.

You can also access the JBI Manual for Evidence Synthesis (2024 edition) for the most current methodological standards, particularly the chapters on scoping reviews and review type selection guidance.

Citation Standards and Documentation in Evidence Synthesis

Both systematic and scoping reviews carry unusually demanding citation requirements compared to other research outputs — and for good reason. When another researcher wants to replicate or update your review, your reference list is their starting point.

Here’s what distinguishes citation practice in review methodology from standard academic writing:

- Reference management software is non-negotiable. Zotero, Mendeley, EndNote, and RefWorks are the most commonly used tools. Exporting search results from Web of Science, Scopus, PubMed, and CINAHL directly into these platforms, then deduplicating, is standard practice. Manual reference management for reviews including 2,000+ records is not feasible.

- Grey literature must be cited correctly. Government reports, conference proceedings, dissertations (via ProQuest or institutional repositories), and preprints (bioRxiv, SSRN, OSF Preprints) are all eligible for inclusion in scoping reviews and sometimes in SLRs. APA 7th edition provides specific formats for each source type — the American Psychological Association’s Publication Manual (7th ed., 2020) is the definitive reference for this.

- Database search documentation counts as a citation. Your methodology section should include the exact search date, database names (with the platform or interface used, such as MEDLINE via Ovid), and number of records retrieved. This is a PRISMA requirement and a reproducibility standard.
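One way to keep the search documentation described above consistent is to log every search as a structured record and export the log as a supplementary file. The sketch below is only an illustration: the `SearchLogEntry` class, its field names, the search string, the dates, and the record counts are all assumptions made up for the example.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class SearchLogEntry:
    """One database search, with the details a reader needs to rerun it."""
    database: str
    platform: str
    search_date: date
    search_string: str
    records_retrieved: int


def search_log_to_csv(entries):
    """Serialise the search log as CSV text for a supplementary file."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(SearchLogEntry)])
    writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        row["search_date"] = entry.search_date.isoformat()  # e.g. 2025-01-15
        writer.writerow(row)
    return buffer.getvalue()


# Hypothetical searches; none of these numbers come from a real review
query = '"honour code*" AND plagiarism'
log = [
    SearchLogEntry("MEDLINE", "PubMed", date(2025, 1, 15), query, 412),
    SearchLogEntry("CINAHL", "EBSCOhost", date(2025, 1, 15), query, 187),
    SearchLogEntry("Scopus", "Scopus", date(2025, 1, 16), query, 903),
]

total_records = sum(entry.records_retrieved for entry in log)
print(search_log_to_csv(log))
print(f"Total records identified: {total_records}")
```

The total at the end is the figure that would feed the first box of a flow diagram, so keeping it derived from the log rather than typed by hand removes one source of inconsistency.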

For a detailed breakdown of how APA 7th, MLA 9th, Chicago 17th, and Harvard referencing apply across different source types encountered in evidence synthesis, the Research Methodology: Standardise Citations 2025 guide covers these standards with specific examples relevant to academic reviews.

One practical note on citation standards that often trips up early-career researchers: excluded studies do not appear in your reference list. Only included studies — those that passed all eligibility criteria — are cited in the body of a review. Studies excluded after full-text assessment are listed in a supplementary appendix with reasons for exclusion. This distinction matters for audit trails.
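The audit trail only holds up if the numbers add up. As a rough sketch, the check below walks a simplified flow (identified, deduplicated, screened, assessed at full text, included) and confirms that the included count matches what the exclusions imply. The function name `check_prisma_flow`, the exclusion reasons, and every count are hypothetical, and the sketch ignores boxes such as reports not retrieved that a full PRISMA 2020 diagram would show.

```python
def check_prisma_flow(identified, duplicates_removed, excluded_at_screening,
                      full_text_exclusions, included):
    """Verify that the counts in a simplified flow diagram are consistent.

    full_text_exclusions maps each exclusion reason to a number of studies.
    """
    screened = identified - duplicates_removed
    full_text_assessed = screened - excluded_at_screening
    expected_included = full_text_assessed - sum(full_text_exclusions.values())
    if min(screened, full_text_assessed, expected_included) < 0:
        raise ValueError("A stage removes more records than it received")
    if expected_included != included:
        raise ValueError(
            f"Flow implies {expected_included} included studies, "
            f"but {included} were reported"
        )
    return {
        "screened": screened,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }


# Hypothetical counts for illustration only
flow = check_prisma_flow(
    identified=3000,
    duplicates_removed=640,
    excluded_at_screening=2180,
    full_text_exclusions={
        "wrong population": 75,
        "wrong outcome": 52,
        "not primary research": 21,
    },
    included=32,
)
print(flow)
```

Running a check like this before submission catches the off-by-a-few discrepancies between the flow diagram, the exclusion appendix, and the reference list that reviewers tend to notice.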

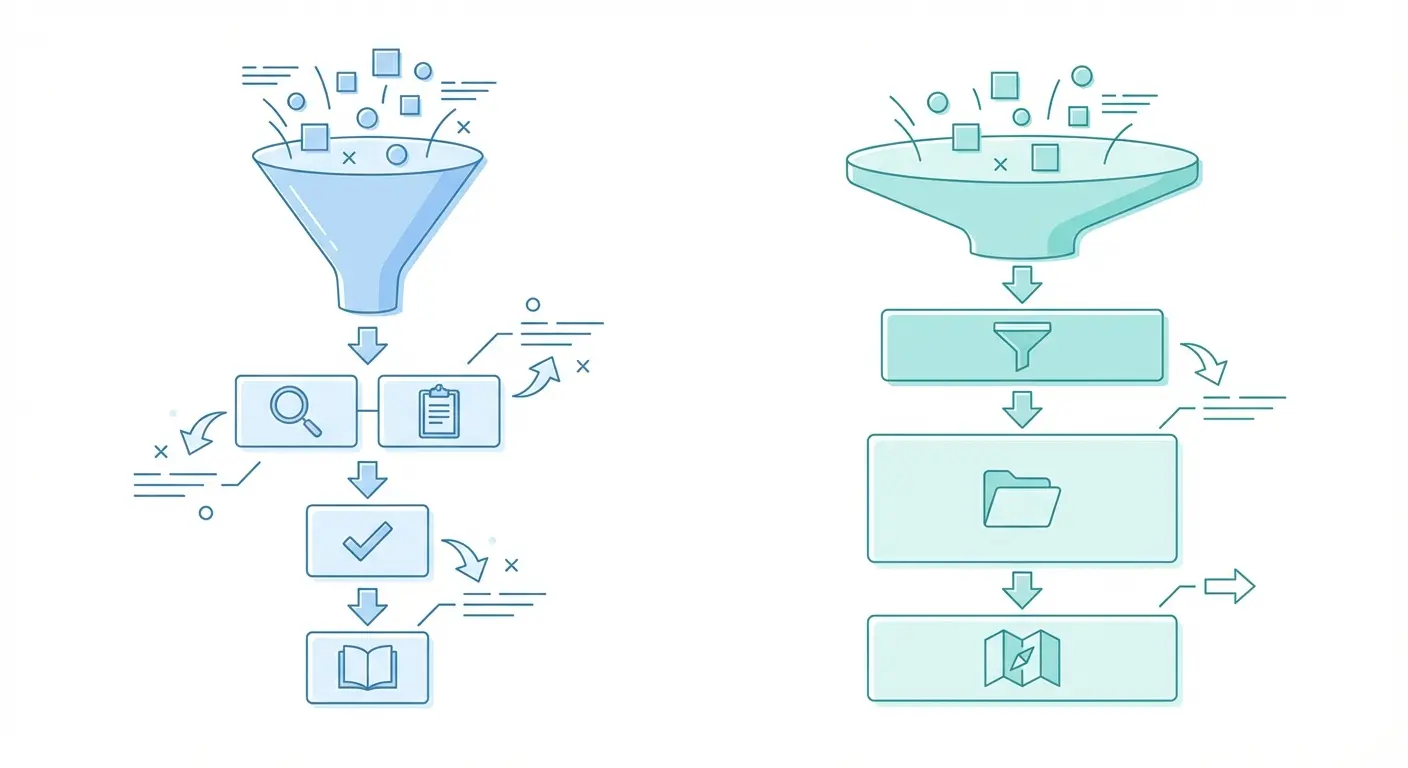

Decision Framework: Step-by-Step Guide to Choosing the Right Review Type

Fair warning: this takes honest self-assessment. Researchers sometimes gravitate toward whichever review type their supervisor last published, or whichever seems most publishable in their target journal. Neither is a sound methodological basis. Work through these steps before committing.

- State your research question in one sentence. If you can’t do this, you’re not ready to design a review of either type. Vague questions produce vague reviews. Write the question. Show it to a colleague. If they ask “but what specifically?”, refine it.

- Apply the PICO test. Can your question be mapped to Population, Intervention/phenomenon, Comparator, Outcome? If yes, and all four elements are clearly defined, an SLR is likely appropriate. If you’re struggling to define a comparator or a specific outcome, a scoping review is probably a better fit.

- Conduct a preliminary search on Google Scholar, PubMed, and Web of Science. Spend two hours searching. How many directly relevant primary studies exist? If you’re finding fewer than 15 studies that squarely address your question, an SLR will likely conclude “insufficient evidence”. That may be a valid finding, but consider whether a scoping review would provide more actionable insight.

- Check for existing reviews on PROSPERO and the Cochrane Library. If a rigorous SLR on your exact question was published in the past five years, you probably don’t need to replicate it. An updated scoping review or a living systematic review might be warranted instead. Duplicate reviews without justification are increasingly rejected by journals.

- Identify your output goal. Will your review inform a clinical protocol, a policy brief, a funding application, or a doctoral thesis chapter? Clinical and policy applications typically require SLR-level evidence. Doctoral thesis introductions, emerging field overviews, and research agenda papers often suit scoping reviews.

- Consult your target journal’s author guidelines. Many journals in health, education, and social sciences now specify which review types they accept and which reporting frameworks they require. Don’t design a review and then discover the journal won’t consider it.

- Register your protocol. Once the decision is made: PROSPERO for systematic reviews (free, NIHR-funded); Open Science Framework for scoping reviews. Registration locks in your eligibility criteria and prevents outcome reporting bias, a genuine concern in evidence synthesis.
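The first few steps above can be written down as a small heuristic, which is sometimes a useful way to test whether you have actually answered them. This is a deliberately crude sketch, not a validated instrument: the `ReviewQuestion` class, the `suggest_review_type` function, the default threshold of 15 studies (taken from the preliminary-search step), and the study counts are all assumptions for illustration, and the output is a prompt for discussion with your supervisor rather than a verdict.

```python
from dataclasses import dataclass


@dataclass
class ReviewQuestion:
    """Answers gathered while working through the steps above."""
    population: str = ""
    intervention: str = ""
    comparator: str = ""
    outcome: str = ""
    relevant_primary_studies: int = 0  # from the preliminary search
    recent_slr_exists: bool = False    # from the PROSPERO / Cochrane Library check


def suggest_review_type(question, min_studies=15):
    """Return 'systematic', 'scoping', or 'reconsider' as a starting point."""
    pico = (question.population, question.intervention,
            question.comparator, question.outcome)
    pico_complete = all(part.strip() for part in pico)
    if question.recent_slr_exists:
        return "reconsider"  # a rigorous recent SLR already covers the question
    if pico_complete and question.relevant_primary_studies >= min_studies:
        return "systematic"
    return "scoping"


focused = ReviewQuestion(
    population="undergraduate students",
    intervention="honour codes",
    comparator="no honour code",
    outcome="plagiarism rates",
    relevant_primary_studies=24,  # hypothetical count
)
broad = ReviewQuestion(
    population="higher education students",
    intervention="academic integrity interventions",
    relevant_primary_studies=60,  # hypothetical count
)

print(suggest_review_type(focused))  # systematic
print(suggest_review_type(broad))    # scoping
```

Notice that the broad question is routed to a scoping review even though plenty of studies exist: without a defined comparator and outcome there is nothing specific to synthesise.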

For a broader understanding of how these decisions fit within the overall research design process — including paradigm selection, sampling strategy, and ethical approval considerations — the Research Methodology Guide 2026 provides an end-to-end framework that contextualises review methodology within larger research programmes.

Tools and Software for Systematic and Scoping Reviews

The right tool won’t do the thinking for you — but it will save you from drowning in spreadsheets when you’re managing 3,000 records across two reviewers.

Screening Tools

Rayyan is the most widely adopted free screening platform for both systematic and scoping reviews. It supports blind dual reviewer screening, conflict resolution, and PRISMA flow diagram generation. For teams working on NIHR or Cochrane reviews, Covidence is the institutional standard — though it requires a paid licence.

Reference Management

Zotero (free, open-source) integrates with Word, Google Docs, and all major browsers. EndNote remains dominant in medical and health science institutions. Both support APA 7th, MLA 9th, Chicago 17th, and Harvard output formats, which is critical for maintaining citation consistency across the review’s reference list.

Data Extraction and Charting

For scoping reviews, the “charting” step (equivalent to data extraction in SLRs) is typically managed in Excel or structured Google Sheets templates. The JBI Manual provides a data charting form template. For SLRs with meta-analytic components, RevMan (Review Manager, Cochrane’s free software) handles forest plot generation and risk of bias visualisation.
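If a spreadsheet starts to feel fragile, the same charting form can be expressed as a typed record and tallied in a few lines. The sketch below is an assumption-laden illustration: the `ChartingRecord` fields are loosely modelled on the kind of columns a charting form usually has and are not the JBI template itself, and the four entries are placeholders rather than real studies.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ChartingRecord:
    """One row of a scoping review charting form (illustrative fields only)."""
    source_id: str
    year: int
    country: str
    study_design: str
    population: str
    concept: str
    key_findings: str


def map_evidence(records, attribute):
    """Count charted sources by one attribute, e.g. study design or country."""
    return Counter(getattr(record, attribute) for record in records)


# Placeholder entries, not real studies
charted = [
    ChartingRecord("Study A", 2019, "UK", "qualitative", "undergraduates",
                   "honour codes", "placeholder"),
    ChartingRecord("Study B", 2021, "US", "cross-sectional survey", "undergraduates",
                   "detection software", "placeholder"),
    ChartingRecord("Study C", 2022, "UK", "qualitative", "academic staff",
                   "integrity training", "placeholder"),
    ChartingRecord("Study D", 2023, "Australia", "RCT", "postgraduates",
                   "integrity training", "placeholder"),
]

by_design = map_evidence(charted, "study_design")
for design, count in by_design.most_common():
    print(f"{design}: {count}")
```

Tallies like these are the raw material for the evidence map: swap `"study_design"` for `"country"` or `"concept"` to see where the literature clusters and where the gaps are.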

Search Documentation

CADTH’s Peer Review of Electronic Search Strategies (PRESS) checklist is the gold standard for ensuring your search strategy is methodologically sound before running it. Many journals and PROSPERO now require peer-reviewed search strategies for SLR submissions — a practice that’s increasingly being applied to scoping reviews as well.

For accessible video-based introductions to both review types, the Cochrane Collaboration’s explanation of what systematic reviews are and this overview of what scoping reviews involve are worth 15 minutes of any researcher’s time. They’re particularly useful for explaining the distinction to postgraduate students at the beginning of a research methods course.

The 2021 BMC Systematic Reviews paper on reinforcing and advancing scoping review methodology provides a rigorous discussion of ongoing debates in the field — particularly around quality appraisal, consultation with knowledge users, and the distinction between scoping reviews and other evidence mapping methods.

Frequently Asked Questions

Can a scoping review replace a systematic review for a PhD thesis?

A scoping review can absolutely serve as a standalone thesis chapter or component, but it should not replace a systematic review if the research question genuinely calls for one. The key is methodological justification: if you choose a scoping review, your thesis must clearly articulate why a scoping approach — rather than an SLR — best addresses your research question. Examiners increasingly scrutinise this rationale, particularly in health sciences and education research doctoral programmes.

What is the difference between a literature review and a systematic review?

A traditional narrative literature review is an unsystematic, author-selected summary of relevant publications — subject to selection bias and lacking explicit methodology. A systematic review follows a pre-registered protocol, uses a reproducible search strategy across multiple databases, applies explicit eligibility criteria, and critically appraises study quality. The distinction matters enormously: journal editors and grant reviewers treat them as fundamentally different evidence products, with systematic reviews carrying significantly greater evidential weight.

Do scoping reviews require quality appraisal of included studies?

According to the JBI Methodology for Scoping Reviews (2020, updated 2024), critical appraisal is not a standard requirement in scoping reviews because the goal is mapping evidence breadth, not appraising quality. However, JBI notes that authors may choose to include quality appraisal as an optional component if it adds context to the review’s findings. If quality appraisal is included, it must be reported transparently and must not be used as an exclusion criterion — otherwise, you’ve changed the review type.

What reporting framework should I use for a scoping review?

The PRISMA Extension for Scoping Reviews (PRISMA-ScR), published by Tricco et al. in 2018 in the Annals of Internal Medicine, is the current reporting standard for scoping reviews. It contains 20 essential items and 2 optional items, both of which concern critical appraisal of the included sources. Most journals in health, education, and social sciences that publish scoping reviews now require PRISMA-ScR compliance as a condition of peer review. The EQUATOR Network maintains the most up-to-date version of the checklist and accompanying explanation document.

How many databases should I search for a systematic or scoping review?

Cochrane guidance recommends searching at least two major databases for systematic reviews, with most rigorous SLRs searching five or more (typically MEDLINE/PubMed, Embase, CINAHL, PsycINFO, and Web of Science, plus grey literature sources). For scoping reviews, the JBI Manual recommends a similarly broad search, often including discipline-specific databases relevant to the topic. Single-database searches are considered methodologically inadequate for either review type and will typically result in rejection from peer-reviewed journals.

Should I register my scoping review protocol before starting?

Protocol registration is strongly recommended for scoping reviews, though it’s not yet universally mandated as it is for systematic reviews. The Open Science Framework (OSF) is the most commonly used platform for scoping review protocol registration. PROSPERO does not register scoping reviews, so it is not an option here. Registering your protocol before data collection demonstrates methodological rigour, reduces the risk of outcome reporting bias, and is increasingly expected by high-impact journals in health sciences, education, and social research.

Deepen Your Research Methodology Knowledge

Understanding the systematic literature review vs scoping review distinction is one piece of a larger methodological puzzle. The decisions you make before you run a single database search — your paradigm, your research design, your citation protocols — shape everything that follows.

- Explore the Research Methodology Guide 2026 for end-to-end guidance on research design, paradigm selection, and ethics — the foundations that should precede any evidence synthesis work.

- Standardise your citation practice with the Research Methodology: Standardise Citations 2025 guide, covering APA 7th, MLA 9th, Chicago 17th, and Harvard for every source type you’ll encounter in a review.

- Make your review replicable with the Research Methodology Reproducibility Tips — practical guidance on transparent search documentation, data management, and PRISMA-compliant reporting.

If this resource helped clarify your methodology decision, share it with a colleague or link to it from your institutional research portal — it’s the kind of reference that earns its place in a methods reading list.
