Thematic Analysis in Research: Braun & Clarke’s 6-Step Framework with Examples (2026)

Thematic analysis is one of the most widely used qualitative methods across psychology, education, health sciences, and the social sciences, yet it is also one of the most commonly misapplied. Virginia Braun and Victoria Clarke’s framework, first systematised in their landmark 2006 paper and substantially refined in their 2019 and 2022 works, offers researchers a rigorous yet flexible approach to identifying patterns of meaning in qualitative data. Whether you are analysing interview transcripts, focus group recordings, or open-ended survey responses, applying the six phases correctly can be the difference between a dissertation that impresses examiners and one that attracts fundamental criticism.

This guide walks through every phase of Braun and Clarke’s approach, compares it against related methods, presents two fully worked examples, and addresses the quality criteria and common errors that doctoral examiners flag most often.

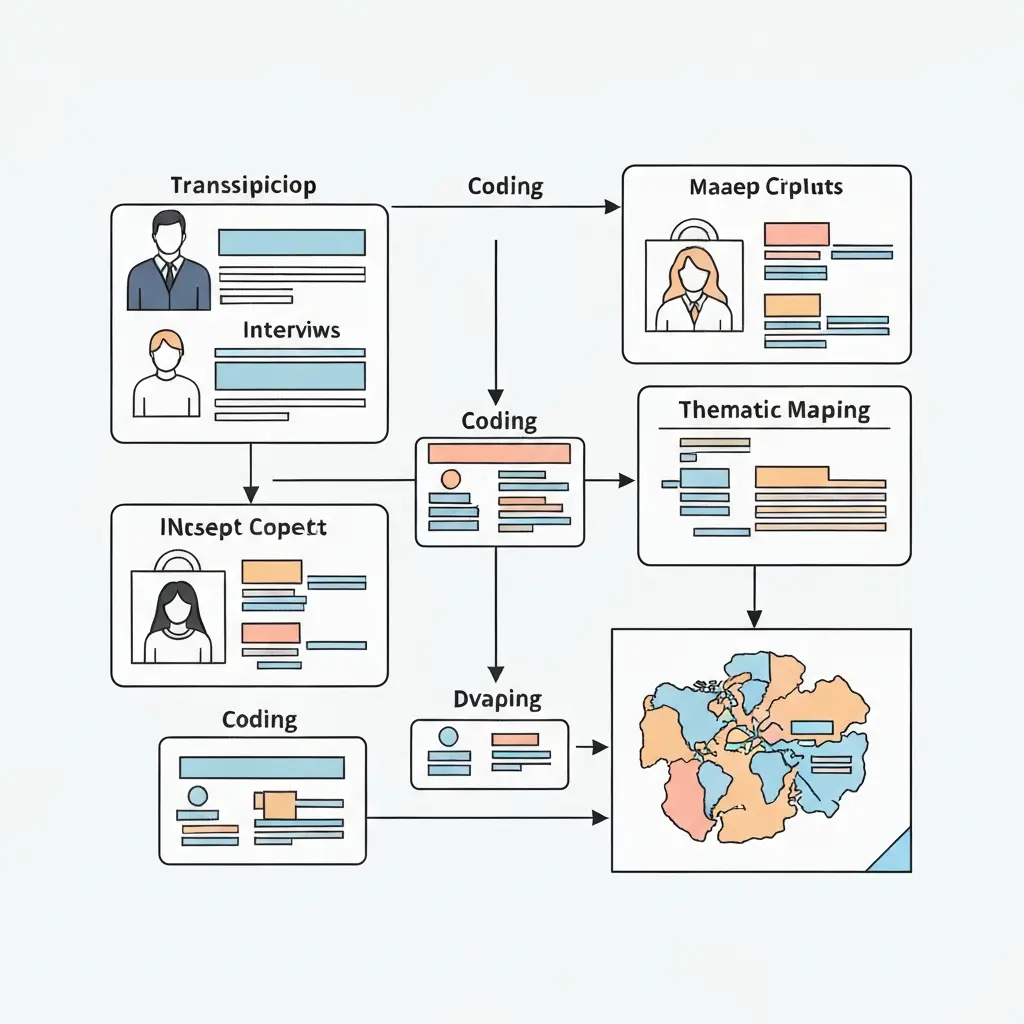

Thematic analysis (Braun & Clarke) is a qualitative method for identifying, analysing, and reporting patterns (themes) across a dataset. It proceeds through six phases: familiarisation, initial coding, searching for themes, reviewing themes, defining and naming themes, and producing the report. In its reflexive form (2019), researcher subjectivity is treated as a resource, not a threat to validity. It suits most qualitative datasets and does not require a specific theoretical framework.

What Is Thematic Analysis?

Thematic analysis (TA) is a method for systematically identifying, analysing, and interpreting patterns of meaning — called themes — across a qualitative dataset. Unlike grounded theory or interpretative phenomenological analysis (IPA), TA is not tied to a particular epistemological framework, which makes it one of the most versatile tools in the qualitative researcher’s toolkit.

Braun and Clarke’s 2006 paper “Using thematic analysis in psychology” (published in Qualitative Research in Psychology) is one of the most-cited papers in the social sciences, with over 150,000 citations as of 2026. It codified what had previously been an informal, often underdescribed practice into a clear, teachable six-phase process.

A theme, in this framework, captures “something important about the data in relation to the research question, and represents some level of patterned response or meaning within the data set” (Braun & Clarke, 2006, p. 82). Critically, a theme is not simply a topic that appears frequently — frequency is neither necessary nor sufficient for something to qualify as a theme. A theme must carry analytical weight and tell the reader something meaningful about the phenomenon under study.

Semantic vs Latent Themes

Braun and Clarke distinguish between two levels of analysis:

- Semantic themes remain close to the surface meaning of the data. They describe what participants explicitly said without deeper interpretation.

- Latent themes engage with the underlying assumptions, ideologies, or conceptual framings implicit in the data — requiring a greater degree of researcher interpretation.

Most strong dissertations combine both levels, using semantic themes to organise surface patterns and latent analysis to generate genuine theoretical contribution.

Inductive vs Deductive Orientation

TA can be conducted inductively (themes are developed from the data, without a preconceived analytical framework) or deductively/theoretically (coding and theme development are guided by prior theoretical commitments or concepts). Braun and Clarke advise researchers to be explicit about their orientation because it shapes every decision from Phase 1 onward.

Reflexive vs Codebook vs Coding Reliability Approaches

By 2019, Braun and Clarke had identified a proliferation of approaches all calling themselves “thematic analysis” but operating under very different assumptions. They mapped these into three broad families:

| Dimension | Reflexive TA (Braun & Clarke) | Codebook TA | Coding Reliability TA |
|---|---|---|---|
| Epistemology | Constructivist / interpretivist | Post-positivist to interpretivist | Post-positivist / (neo-)positivist |
| Researcher role | Active meaning-maker; subjectivity is a resource | Co-developer of shared framework | Neutral coder; subjectivity is a threat |
| Coding process | No codebook; codes develop iteratively from researcher–data engagement | Shared codebook developed collaboratively, then applied | Pre-defined codebook; inter-rater reliability (IRR) measured |
| Inter-rater reliability | Not appropriate; rejected as a quality marker | Sometimes used as a consistency check | Central; reported as Cohen’s kappa or percentage agreement |
| Typical disciplines | Psychology, social sciences, health qualitative research | Health services research, policy evaluation (framework analysis) | Content analysis traditions, media studies, some health research |
| Theme as | Researcher’s interpretive construction | Shared analytic category | Observable category in the data |
| Sample sizes | Small to medium (10–30 typical) | Medium to large | Large; statistical power considerations apply |
| Key reference | Braun & Clarke (2006, 2019, 2022) | Ritchie & Spencer (1994); Brooks et al. (2015) | Guest et al. (2012); Boyatzis (1998) |
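To make the inter-rater reliability row concrete, here is a minimal Python sketch of the two statistics a coding reliability study would typically report: percentage agreement and Cohen’s kappa. The labels and the ten extracts are hypothetical, invented purely for illustration, and the function names are our own. The calculation belongs to the coding reliability tradition only; it is not a quality marker in reflexive TA.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of extracts to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of extracts")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    counts_a, counts_b = Counter(coder_a), Counter(coder_b)
    # Chance agreement: probability that both coders pick the same label
    # if each assigned labels independently at their own observed rates.
    p_expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical labels for ten extracts (illustrative data only).
coder_a = ["stigma", "stigma", "relief", "crisis", "stigma",
           "relief", "crisis", "stigma", "relief", "crisis"]
coder_b = ["stigma", "relief", "relief", "crisis", "stigma",
           "relief", "crisis", "stigma", "stigma", "crisis"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"Percentage agreement: {agreement:.0%}")
print(f"Cohen's kappa: {kappa:.2f}")
```

Kappa comes out lower than raw agreement because it discounts the matches two coders would reach by chance, which is why coding reliability studies usually prefer it to a bare percentage.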

Braun & Clarke’s 6 Phases: Detailed Walkthrough

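Braun and Clarke consistently describe these as “phases” rather than “steps” to emphasise that the process is iterative and recursive, not linear. You will return to earlier phases as your analysis develops. The six phases apply to both the original 2006 framework and its reflexive evolution (2019), although their later work renames some of them (Phase 3, for example, becomes “generating initial themes”) to stress that themes are actively constructed rather than found.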

Phase 1: Familiarisation with the Data

Familiarisation means immersing yourself in the dataset before any systematic coding begins. For interview data, this involves transcribing recordings yourself (where possible), reading transcripts multiple times, and noting initial impressions and ideas in a reflexive journal.

What to do:

- Transcribe audio or video data verbatim, including pauses, false starts, and laughter where relevant (Jefferson notation is not required unless doing conversation analysis)

- Read all transcripts at least twice — once for general familiarity, once with research questions in mind

- Write initial analytic memos noting what strikes you, what surprises you, and what potential patterns you observe

- Note emotional responses and preconceptions in your reflexive journal — these will shape your analysis and must be acknowledged

Common error: Jumping straight to coding without genuine familiarisation produces superficial analysis. Braun and Clarke (2022) note that poor familiarity is one of the most common problems they see in submitted manuscripts.

Phase 2: Generating Initial Codes

Coding is the process of identifying and labelling features of the data that are relevant to your research question. In reflexive TA, codes are not pre-defined; you develop them through close reading of the data.

What to do:

- Code every extract of data that appears potentially relevant — over-inclusive is better than under-inclusive at this stage

- Assign short, data-driven labels that capture the essence of an extract (e.g., “fear of judgment from GP”, “relief after disclosure”)

- Code the same extract with multiple codes if appropriate — one extract can carry several meanings

- Keep codes close to the data language; avoid importing theoretical jargon at this stage

- Collate all data relevant to each code into a working document

Example: From an interview extract — “I kept thinking, what will the doctor think of me? I wasn’t ready to be judged” — you might generate codes: [fear of medical judgment], [not ready to disclose], [anticipated stigma], [gatekeeper anxiety].
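If you are coding without CAQDAS, the “collate all data relevant to each code” step can be sketched in a few lines of Python. This is only one possible way to keep a working document. The participant IDs, the second and third extracts, and the function name `collate_by_code` are hypothetical and exist purely for illustration.

```python
from collections import defaultdict

# Hypothetical coded extracts: (participant_id, extract_text, codes).
# Only the first extract comes from the example above; the others are invented.
coded_extracts = [
    ("P1",
     "I kept thinking, what will the doctor think of me? I wasn't ready to be judged",
     ["fear of medical judgment", "not ready to disclose", "anticipated stigma"]),
    ("P2",
     "Once I'd said it out loud it felt like a weight had gone",
     ["relief after disclosure"]),
    ("P3",
     "I assumed she'd just tell me to get on with it",
     ["fear of medical judgment"]),
]


def collate_by_code(extracts):
    """Group extracts under every code they carry (one extract may appear under several codes)."""
    code_index = defaultdict(list)
    for participant, text, codes in extracts:
        for code in codes:
            code_index[code].append((participant, text))
    return dict(code_index)


code_index = collate_by_code(coded_extracts)
for code, items in sorted(code_index.items()):
    print(f"[{code}]")
    for participant, text in items:
        print(f"  {participant}: {text}")
```

Because each extract is stored under every code it carries, multiply-coded passages stay visible wherever they are relevant, which matches the over-inclusive spirit of Phase 2.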

Phase 3: Searching for Themes

Once you have a full set of initial codes, you sort them into potential themes. Think of this as clustering — you are looking for codes that, taken together, capture something analytically coherent and meaningful.

What to do:

- Arrange your codes into potential themes by grouping codes that share a common conceptual thread

- Create a thematic map — a visual diagram showing proposed themes, sub-themes, and their relationships

- Distinguish between main themes and sub-themes: a sub-theme captures a dimension or facet of the broader theme

- Some codes may not fit any theme — retain them in a “miscellaneous” pile for now

- Look for candidate themes that cut across multiple participants, not just one or two

A thematic map at this phase is provisional — it is a thinking tool, not a finished product.
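A provisional thematic map can also be kept as a simple data structure alongside the visual diagram. The sketch below uses codes and candidate theme names from the worked example later in this guide, but the assignment of codes to themes is an illustrative assumption, and the helper names `unassigned_codes` and `themes_for_code` are our own.

```python
# Codes from the worked example; the grouping into themes below is an
# illustrative assumption, not a prescribed mapping.
all_codes = {
    "minimising symptoms to self",
    "comparing severity to others",
    "catastrophising GP's reaction",
    "using physical symptoms as entry point",
    "digital pre-research",
    "waiting for a crisis point",
    "peer normalisation",
}

candidate_themes = {
    "Internalised stigma as a barrier to action": {"minimising symptoms to self"},
    "The role of social comparison in delaying help-seeking": {"comparing severity to others"},
    "The GP as a threatening gatekeeper": {
        "catastrophising GP's reaction",
        "using physical symptoms as entry point",
    },
    "Digital information-gathering as preparatory work": {"digital pre-research"},
    "Crisis as the threshold for action": {"waiting for a crisis point"},
}


def unassigned_codes(codes, thematic_map):
    """Codes not yet placed in any candidate theme (the 'miscellaneous' pile)."""
    assigned = set().union(*thematic_map.values())
    return sorted(codes - assigned)


def themes_for_code(code, thematic_map):
    """Every candidate theme a code currently sits under (a code may sit under several)."""
    return sorted(name for name, members in thematic_map.items() if code in members)


miscellaneous = unassigned_codes(all_codes, candidate_themes)
print("Miscellaneous pile:", miscellaneous)
```

Keeping the miscellaneous pile explicit makes it easy to revisit unplaced codes during Phase 4 instead of quietly losing them.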

Phase 4: Reviewing Themes

Phase 4 involves refining your candidate themes by checking them against the coded data extracts and then against the full dataset. Braun and Clarke describe this as a two-level review.

Level 1 review: Read all the data extracts coded under each proposed theme. Does the theme cohere? Does it capture a meaningful, bounded pattern? If a theme contains extracts that don’t fit together, you need to split it or reconsider.

Level 2 review: Step back and read the full transcripts again. Does your thematic map accurately represent the dataset as a whole? Are there significant aspects of the data that your current themes fail to capture? Are two proposed themes actually the same thing?

At the end of Phase 4, you should have a refined thematic map that you are confident represents the data.

Phase 5: Defining and Naming Themes

Each theme now needs a clear, concise name and a detailed definition that captures its essence and scope. This is where you do the real analytical work of explaining what each theme is about, why it matters, and how it relates to your research question.

What to do:

- Write a detailed analytic narrative for each theme — not a summary of what participants said, but an interpretation of what the theme means

- Choose theme names that are informative and evocative: “Navigating institutional barriers to disclosure” is more analytical than “Problems telling people”

- Identify the best data extracts (quotations) that will illustrate each theme in the written report

- Write a “theme definition” — a 2–3 sentence statement of what the theme captures and what it excludes

- Consider the relationships between themes: are they hierarchical, sequential, or conceptually overlapping?

Phase 6: Producing the Report

The final phase is writing up the analysis. In reflexive TA, the write-up is not simply a presentation of quotes — it is a scholarly argument that uses data extracts as evidence for analytic claims.

What to do:

- Write a narrative for each theme that weaves together analytic argument and illustrative quotations

- Each quotation should be contextualised, introduced, and followed by interpretive commentary — never let quotes “speak for themselves”

- A theme section typically follows the structure: claim → evidence (quote) → interpretation → connection to broader argument

- Include a methods section that explains your analytic approach, your positionality, and your reflexive engagement with the data

- Present a final thematic map or table of themes to orient the reader
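The claim → evidence → interpretation → connection structure can be turned into a simple self-check on a draft. The following Python sketch is one hypothetical way to do that. The class names, fields, and sample text are assumptions made for illustration, not part of Braun and Clarke’s guidance.

```python
from dataclasses import dataclass, field


@dataclass
class Quotation:
    participant: str
    text: str
    commentary: str = ""  # interpretive commentary that follows the quote


@dataclass
class ThemeSection:
    name: str
    claim: str  # the analytic claim that opens the section
    quotations: list[Quotation] = field(default_factory=list)
    connection: str = ""  # link back to the research question or literature

    def problems(self) -> list[str]:
        """Return structural gaps in the draft section (an empty list means none found)."""
        issues = []
        if not self.claim.strip():
            issues.append("missing analytic claim")
        if not self.quotations:
            issues.append("no illustrative quotations")
        for quote in self.quotations:
            if not quote.commentary.strip():
                issues.append(f"quotation from {quote.participant} has no interpretive commentary")
        if not self.connection.strip():
            issues.append("no connection to the broader argument")
        return issues


# Hypothetical draft section with one quote left to "speak for itself".
draft = ThemeSection(
    name="The GP as threatening gatekeeper",
    claim="Participants anticipated judgment from the GP before any contact had occurred.",
    quotations=[Quotation("P1", "I wasn't ready to be judged")],
    connection="Relates to existing work on stigma and help-seeking.",
)
print(draft.problems())
```

A check like this only flags structural omissions; whether the commentary is genuinely interpretive remains a judgement for the researcher.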

Worked Example 1: Psychology Interview Study

This example is drawn from a hypothetical but representative study — modelled on published reflexive TA research — to illustrate all six phases in practice.

Study context: A qualitative study exploring young adults’ (ages 18–25) experiences of seeking help for anxiety from their GP. Data: 12 semi-structured interviews, transcribed verbatim. Research question: How do young adults navigate the decision to seek professional help for anxiety?

Phase 1 in Practice

The researcher transcribed all 12 interviews personally, taking approximately 8 hours per 1-hour interview. Reflexive memos noted a strong recurring sense of shame in the accounts, and personal resonance with participants’ descriptions of “putting on a front”. This was recorded in the reflexive journal to be addressed in the methods chapter.

Phase 2 in Practice

Initial coding generated approximately 180 codes across the 12 transcripts. Codes included: [minimising symptoms to self], [comparing severity to others], [catastrophising GP’s reaction], [relief after finally booking], [using physical symptoms as entry point], [peer normalisation], [family dismissal of mental health], [digital pre-research], [waiting for a crisis point].

Phase 3 in Practice

Codes were sorted into five candidate themes on a thematic map:

- Candidate Theme A: Internalised stigma as a barrier to action

- Candidate Theme B: The role of social comparison in delaying help-seeking

- Candidate Theme C: The GP as a threatening gatekeeper

- Candidate Theme D: Digital information-gathering as preparatory work

- Candidate Theme E: Crisis as the threshold for action

Phase 4 in Practice

Level 1 review revealed that Candidate Themes A and B substantially overlapped: both captured how participants benchmarked their own distress against others to justify inaction. They were merged into a single theme: “Negotiating legitimacy: why my anxiety isn’t bad enough.” Candidate Theme D had thin data support (only 3 participants mentioned it prominently) and was downgraded to a sub-theme within Theme C. Level 2 review showed that codes such as [relief after finally booking] were not captured by any candidate theme, which led to a new theme on participants’ experiences after disclosure.
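The merge and downgrade decisions described above can be sketched as operations on a thematic map held as a dictionary. This is a hypothetical representation: the helper names `merge_themes` and `demote_to_subtheme`, the short theme labels, and the code-to-theme assignments are all assumptions made for illustration.

```python
def merge_themes(thematic_map, names, new_name):
    """Merge several candidate themes into one, pooling their codes and sub-themes."""
    merged = {"codes": set(), "subthemes": {}}
    for name in names:
        theme = thematic_map.pop(name)
        merged["codes"] |= theme["codes"]
        merged["subthemes"].update(theme["subthemes"])
    thematic_map[new_name] = merged


def demote_to_subtheme(thematic_map, name, parent, new_name=None):
    """Move a thinly supported theme under a parent theme as a sub-theme.

    Assumes the demoted theme has no sub-themes of its own.
    """
    theme = thematic_map.pop(name)
    thematic_map[parent]["subthemes"][new_name or name] = theme["codes"]


# Candidate themes A to E; code assignments are illustrative assumptions.
thematic_map = {
    "A: Internalised stigma": {"codes": {"minimising symptoms to self"}, "subthemes": {}},
    "B: Social comparison": {"codes": {"comparing severity to others"}, "subthemes": {}},
    "C: GP as threatening gatekeeper": {"codes": {"catastrophising GP's reaction"}, "subthemes": {}},
    "D: Digital information-gathering": {"codes": {"digital pre-research"}, "subthemes": {}},
    "E: Crisis as threshold": {"codes": {"waiting for a crisis point"}, "subthemes": {}},
}

merge_themes(thematic_map, ["A: Internalised stigma", "B: Social comparison"],
             "Negotiating legitimacy")
demote_to_subtheme(thematic_map, "D: Digital information-gathering",
                   parent="C: GP as threatening gatekeeper",
                   new_name="Digital preparation as armour")

for name, theme in thematic_map.items():
    print(name, sorted(theme["codes"]), sorted(theme["subthemes"]))
```

Recording each restructuring step in this way also leaves an audit trail that can be described in the methods chapter.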

Phase 5 in Practice

Final themes and definitions:

- “Negotiating legitimacy” — captures how participants minimised their own distress through social comparison and cultural narratives about what “real” mental illness looks like, positioning themselves as unworthy of professional help.

- “The GP as threatening gatekeeper” — captures anticipated judgment, disbelief, or dismissal from the GP, including preparatory strategies (researching symptoms, preparing scripts) to manage this threat. Includes sub-theme: digital preparation as armour.

- “Waiting for the crisis point” — captures the pattern whereby participants required a moment of acute distress or functional breakdown before they felt their anxiety was “serious enough” to warrant a GP appointment.

- “Relief and recalibration after disclosure” — captures the unexpected positive reappraisal that followed actually speaking to the GP, including revisions of earlier beliefs about judgment and legitimacy.

Phase 6 in Practice

The findings chapter was structured with one section per theme. Each section opened with the analytic claim, proceeded through 2–3 illustrative quotations with commentary, and closed by connecting the theme to existing literature on help-seeking behaviour and stigma. The report noted the researcher’s own positionality as a young adult with prior experience of anxiety-related help-seeking and how reflexive journaling was used to distinguish personal resonance from analytical interpretation.

Worked Example 2: Education Focus Group Study

Study context: A focus group study exploring secondary school teachers’ perceptions of AI-assisted assessment tools and their implications for academic integrity. Data: 3 focus groups of 5–6 teachers each (total n=17). Research question: How do secondary teachers make sense of AI detection tools in relation to their professional judgements about student integrity?

Summary of Analytic Process

Familiarisation: The researcher conducted all three focus groups and transcribed them within 48 hours. Memos noted that teachers frequently used language of “trust” and “betrayal,” suggesting an emotional as well as practical dimension to the issue.

Initial coding: 140 codes generated, including: [detection tools as unreliable], [AI as threat to teacher expertise], [student relationship as fragile], [institutional pressure to act], [fairness concerns about detection], [pastoral vs disciplinary role conflict], [AI as symptom of wider student disengagement], [generational gap in AI literacy].

Thematic map (final, after review):

- Theme 1: “Undermined expertise” — teachers perceived AI detection tools as implying that their professional judgement about student work was insufficient or untrustworthy.

- Theme 2: “The surveillance paradox” — while teachers wanted to detect dishonesty, they reported discomfort with a surveillance-oriented pedagogical culture that AI detection normalised.

- Theme 3: “Relational collateral damage” — teachers described how using AI detection had damaged or risked damaging their pastoral relationships with students, particularly where detection was contested.

Notable analytical decision: A fourth candidate theme — “Generational AI literacy gap” — was abandoned after Phase 4 review because it was largely descriptive and did not carry sufficient analytic weight. Rather than force it, the researcher noted this pattern in the discussion section without elevating it to theme status.

This is a key reflexive TA principle: not every interesting observation in the data needs to be a theme.

When to Use Thematic Analysis vs Other Methods

Selecting the right qualitative research methods requires understanding what each approach can and cannot deliver. Here is how reflexive TA compares to the three methods most often confused with it:

| Criterion | Reflexive TA | Grounded Theory | Content Analysis | IPA |
|---|---|---|---|---|
| Primary goal | Patterns of meaning across a dataset | Generate substantive theory from data | Systematic description of content | Individual lived experience in depth |
| Sample size | 10–30 typical | 20–60+ (theoretical saturation) | Large corpora common | 3–10 (idiographic focus) |
| Theory requirement | None required; theory-flexible | Generates theory; requires theoretical sampling | Can be theory-driven or inductive | Phenomenological philosophy required |
| Can use frequency/counts | No (not in reflexive form) | No | Yes; often quantifies | No |
| Best suited to | Most qualitative questions about what/how/why people experience or do things | Under-theorised phenomena where a new framework is needed | Systematic analysis of large text corpora; policy documents, media | In-depth study of how a small number of individuals make sense of a specific experience |
| Key limitation | Does not generate theory; does not yield the depth of IPA | Highly demanding; requires multiple data collection rounds | Can lose interpretive nuance; may be overly descriptive | Cannot generalise across participants; very small samples |

When to choose reflexive TA: Use it when you have a qualitative dataset of reasonable size (8–30 participants is common), when your research question asks about shared experiences, practices, or meanings across a group, and when you want analytic flexibility without committing to a highly prescribed methodology. It is an excellent choice for dissertation research because it is well-documented, widely understood by examiners, and does not require years of methodological specialisation.

Consult your dissertation methodology chapter guidance for how to justify your choice of method within your broader research methodology framework.

Software Tools for Thematic Analysis

Computer-Assisted Qualitative Data Analysis Software (CAQDAS) does not do the analysis for you — it manages data organisation, coding, and retrieval. The analytical thinking remains yours. Here are the three tools most commonly used for thematic analysis in academic research:

NVivo (Lumivero)

NVivo is the market-leading CAQDAS tool in English-speaking academic institutions. It supports manual, automatic, and hybrid coding approaches, integrates with survey and social media data, and offers visual outputs including word clouds, cluster analysis, and matrix coding queries. It is available via institutional licence at most UK, Australian, and North American universities.

- Best for: Large projects; mixed-methods dissertations integrating quantitative data; researchers who will use CAQDAS extensively in their career

- Limitation: Steep learning curve; expensive without institutional access; can encourage over-coding and under-analysis if used uncritically

- Platform: Windows, Mac

ATLAS.ti

ATLAS.ti is particularly strong for network analysis and visualising conceptual relationships between codes and themes. It supports grounded theory, thematic analysis, and discourse analysis workflows. Recent versions include AI-assisted coding, though Braun and Clarke caution against over-reliance on automated coding in reflexive TA.

- Best for: Researchers who want to map conceptual relationships; projects with complex multi-source data

- Limitation: AI-assisted coding produces suggestions that still need manual review rather than genuinely autonomous analysis; interface can be complex for first-time users

- Platform: Windows, Mac, web browser

Dedoose

Dedoose is a cloud-based, browser-accessed tool developed by researchers at UCLA. At approximately £12–18/month, it is the most accessible option for students without institutional NVivo licences. It supports qualitative and mixed-methods coding, excerpting, and chart generation.

- Best for: Budget-conscious dissertation students; distributed research teams; straightforward thematic analysis without mixed-methods complexity

- Limitation: Fewer advanced features than NVivo or ATLAS.ti; requires internet connection; data security considerations for sensitive data

- Platform: Web browser (any OS)

Quality Criteria: Braun & Clarke’s 15-Point Checklist

Braun and Clarke (2006) published a 15-point checklist of criteria for good thematic analysis, which has been reproduced and adapted widely in the literature. The checklist evaluates both the analytic process and the written report. In the adapted version below, the criteria are grouped under four headings:

The Transcription Process

- The data have been transcribed to an appropriate level of detail and the transcripts have been checked against the audio/recordings for accuracy.

The Coding Process

- Each data item has been given equal attention in the coding process.

- Themes have not been generated from a few vivid examples — the entire dataset has been analysed.

- All relevant extracts for each theme have been collated.

- Themes have been checked against each other and against the data set.

- The themes are internally coherent, consistent, and distinctive.

The Analysis

- Data have been analysed — interpreted, made sense of — rather than just summarised or described.

- Analysis and data match each other — the extracts illustrate the analytic claims.

- Analysis tells a convincing and well-organised story about the data and topic.

- A good balance between analytic narrative and illustrative extracts is provided.

Overall

- The language and concepts used in the analysis reflect the epistemological position of the research.

- The researcher is positioned clearly in relation to the research — reflexivity is demonstrated.

- Participant confidentiality has been protected.

- The data are not cherry-picked — contrary or complicating examples are addressed.

- Whether the research makes a genuine contribution — does it tell us something new?

In their 2019 and 2022 publications, Braun and Clarke updated their quality framing to emphasise the idea of the knowing researcher, in particular the importance of researchers understanding why they are making each analytical decision rather than following a procedure mechanically.

Common Mistakes Examiners Flag

Drawing from published examiner feedback, viva reports, and Braun and Clarke’s own published guidance on common problems (2022), these are the errors most likely to trigger examiner criticism:

1. Treating Phases as a Linear Checklist

Examiners can tell when a student has treated the six phases as a rigid sequence to be completed and signed off. Reflexive TA is iterative — you are expected to have returned to earlier phases as your understanding developed. If your methods chapter implies you moved straight from Phase 2 to Phase 6 without revision, examiners will probe this.

2. Conflating Themes with Topics

A theme is not simply a subject area that appeared in the data. “Communication” is a topic. “The burden of translating distress into medically legible language” is a theme. Examiners will ask what your themes contribute analytically — be prepared to answer.

3. Letting Quotes Speak for Themselves

Quotations are evidence, not analysis. A findings chapter that presents a series of quotations with minimal interpretive commentary is a common and serious weakness. Every quote must be introduced, contextualised, and followed by an explanation of what it demonstrates.

4. Claiming IRR as a Quality Marker for Reflexive TA

As noted in the approaches table above, calculating inter-rater reliability is epistemologically inconsistent with reflexive TA. Stating in a methodology chapter that “two coders independently coded the data and achieved 85% agreement” implies a positivist coding reliability approach that contradicts the reflexive TA claim. Examiners familiar with Braun and Clarke’s post-2019 work will identify this immediately.

5. Insufficient Reflexivity in the Methods Chapter

Braun and Clarke’s reflexive approach explicitly requires researchers to acknowledge and analyse their own positionality — their prior knowledge, experiences, values, and theoretical commitments that shaped the analysis. A methods chapter that says only “I am a researcher in this field” without substantive engagement with how your position shaped your interpretations will be marked down.

6. Themes That Are Too Broad or Too Narrow

A theme covering virtually everything in the dataset (“participants had complex experiences”) lacks analytic specificity. A theme covering one participant’s single comment lacks breadth. Aim for 3–6 main themes that each cover a meaningful portion of the dataset while remaining analytically distinct.

7. Misrepresenting the Method as Grounded Theory

Some students describe their approach as “grounded theory informed thematic analysis” — a hybrid that typically reflects unfamiliarity with both methods rather than genuine methodological pluralism. If you are not conducting theoretical sampling, constant comparative analysis, or building a substantive theory, you are not doing grounded theory. Name your method accurately.

8. Frequency as Justification for Themes

Justifying a theme by stating “this was mentioned by 10 out of 12 participants” imports a quantitative logic incompatible with reflexive TA. Frequency can be reported as context, but analytical weight, not count, is what qualifies something as a theme.


Frequently Asked Questions

How many themes should a thematic analysis have?

Braun and Clarke do not specify a required number, but 3–6 main themes is the most common range in published reflexive TA. Too few themes (1–2) may suggest insufficient analytic depth; too many (8+) usually indicates that codes have been elevated to theme status rather than genuinely synthesised. Sub-themes can be used to capture important variation within a main theme without inflating your theme count. The right number depends on your data, your research question, and what genuinely constitutes a meaningful pattern across the dataset.

What is the difference between a code and a theme in thematic analysis?

A code is a short label assigned to a specific extract of data — it is granular and data-close (e.g., “fear of GP judgment”). A theme is a higher-order pattern constructed by grouping multiple codes that share a conceptual thread (e.g., “The GP as threatening gatekeeper”). Codes are the building blocks of themes; themes are the interpretive constructions that emerge from clustering and abstracting codes. Themes carry analytic weight — they make a claim about the data — whereas codes are descriptive tools for organising it.

Is thematic analysis suitable for a dissertation?

Yes — thematic analysis is one of the most widely used and examiner-familiar qualitative methods for undergraduate and postgraduate dissertations. Its flexibility means it can be applied across disciplines (psychology, education, health sciences, social work, management) and across data types (interviews, focus groups, open-ended surveys, documents). Its six-phase framework provides a clear structure that is easy to write up in a methodology chapter. For most qualitative dissertation studies, reflexive TA is a defensible and appropriate choice, provided the researcher engages with it rigorously rather than treating it as a shortcut.

How many participants do you need for thematic analysis?

There is no universally mandated sample size for reflexive TA. Braun and Clarke have suggested that for an in-depth interview study, a sample of 6–10 participants is often sufficient for a focused dissertation topic with a rich dataset, while larger studies might include 20–30. The key concept is not saturation (a concept that originates in grounded theory) but data richness: whether the dataset provides sufficient depth to generate analytically meaningful themes. A poorly conducted study with 30 participants will yield thinner analysis than a deeply engaged study with 12. Sample size should be justified by reference to your research question, data type, and the depth of engagement your approach requires.

Can thematic analysis be used with survey data?

Yes, reflexive TA can be applied to open-ended survey responses, though with important caveats. Survey responses tend to be shorter and less interactive than interview data, which means the depth of context available for each response is limited. This affects the richness of coding and the level of latent analysis that is feasible. Braun and Clarke recommend that researchers using survey data acknowledge this limitation explicitly and calibrate their analytic ambitions accordingly — semantic-level themes are often more appropriate than deep latent interpretation for survey-derived data.

What is reflexive thematic analysis and how does it differ from the 2006 approach?

Reflexive thematic analysis (Braun & Clarke, 2019) is the current, updated version of Braun and Clarke’s approach. The key changes from 2006 are: (1) explicit rejection of inter-rater reliability as a quality marker; (2) reframing researcher subjectivity from a bias to be managed into a resource to be leveraged; (3) stronger emphasis on positionality and reflexive journalling; and (4) the renaming of the approach to distinguish it from codebook and coding reliability variants. The six phases remain the same in substance, but the epistemological framing has shifted from one broadly compatible with positivism to a firmly constructivist one. Researchers should cite both the 2006 paper (for the original framework) and the 2019 paper (for the reflexive evolution) in their methodology chapters.

How do I write up thematic analysis in a dissertation findings chapter?

Structure your findings chapter with a brief introduction presenting your thematic map or table of themes, followed by one section per main theme. Each theme section should: (1) open with a clear analytic claim about what the theme captures; (2) present 2–4 illustrative quotations with participant identifiers (e.g., P4, Interview 7); (3) provide interpretive commentary after each quote explaining what it demonstrates; (4) close by relating the theme to your research question and (where appropriate) existing literature. Avoid summarising what participants said without interpretation, and avoid letting quotes stand alone. As a rough guide rather than a fixed rule, aim for a balance of analytic narrative to quotation of around 60:40. See your dissertation methodology chapter guide for how to write up the analytic approach itself.

Should I use inductive or deductive thematic analysis?

The choice depends on your research question and epistemological position. Inductive TA is data-driven: you approach the data without a predetermined analytical framework and develop themes through close reading. This is appropriate when you are exploring a relatively under-researched phenomenon or when you want your findings to be led by participant perspectives rather than pre-existing theory. Deductive (or theoretically informed) TA begins with a theoretical lens that guides what you look for in the data, which is appropriate when you are testing or extending an existing theoretical framework. Mixed approaches are also legitimate: you might begin inductively and then use theory to deepen the interpretation at Phase 5. Whichever you choose, your methodology chapter must clearly state and justify your orientation.
