Systematic Literature Review: How to Reduce Bias in 8 Weeks

Most bias in systematic literature reviews doesn’t creep in during analysis. It’s already baked in by week two — when researchers are still writing their search strings. That’s the uncomfortable truth most methodology textbooks bury in a footnote.

If you’ve ever submitted an SLR and wondered why a reviewer flagged “potential selection bias” or “unclear eligibility criteria,” you’re not alone. A 2021 meta-epidemiological study published in PLOS ONE found that over 46% of published systematic reviews had at least one major methodological flaw linked to bias — and the majority were preventable with structured planning (Ioannidis et al., 2021).

This guide gives you a concrete, week-by-week framework rooted in research methodology and citation standards that actually works — whether you’re a PhD candidate running your first SLR or a professor refining your fifteenth.

What Is Bias in a Systematic Literature Review?

Here’s what makes bias so insidious in SLRs: unlike in primary research, you’re working with evidence someone else produced. You inherit their biases before you even begin your own. Then you add a layer of your own through your search strategy, inclusion decisions, and interpretation.

The Cochrane Collaboration — arguably the gold standard for evidence synthesis — defines systematic review bias across multiple domains, each requiring a different mitigation strategy. Understanding which type you’re fighting is the first step.

What most people miss is that bias isn’t always about dishonesty or carelessness. Cognitive biases — confirmation bias, in particular — operate unconsciously. A researcher who genuinely believes a treatment works will, without structural safeguards, tend to find studies that confirm that belief. The methodology is the safeguard.

The 6 Types of Bias That Destroy SLR Credibility

Reviewers and examiners know these categories. Your SLR should demonstrate that you know them too.

| Bias Type | Primary Source | Typical Impact | Key Mitigation Strategy |
|---|---|---|---|
| Publication Bias | Journals preferring positive results | Overestimates intervention effects | Grey literature search; funnel plot asymmetry test |
| Selection Bias | Non-random or undocumented screening | Skewed evidence pool | Pre-registered eligibility criteria; dual screening |
| Extraction Bias | Inconsistent data coding | Errors in pooled analysis | Standardised extraction forms; Cohen’s kappa checks |
| Reporting Bias | Selective outcome reporting in studies | Inflated effect sizes | Compare against registered protocols (ClinicalTrials.gov) |
| Language Bias | Restricting to English-language sources | Under-representation of global evidence | Multi-language searches; translated abstract screening |
| Citation Bias | Over-citing confirming studies | Distorted narrative synthesis | Systematic citation management; PRISMA flow diagram |

Publication bias deserves special attention. A landmark analysis by Dwan et al. (2013) in PLOS ONE examined 283 meta-analyses and found that trials with statistically significant results were 2.4 times more likely to be published than those without. That number should stop every researcher in their tracks.

The practical consequence? If your SLR only searches published databases, you’re working with a biased sample by definition. Grey literature — dissertations, conference abstracts, government reports, institutional repositories — isn’t optional. It’s methodologically necessary.

The 8-Week Bias-Reduction Framework for Your SLR

An 8-week timeline isn’t arbitrary. It maps directly to the five phases recognised by Cochrane and PRISMA: protocol development, search execution, screening, data extraction, and synthesis/reporting. Spreading these across eight weeks forces deliberate pacing — which is itself a bias-reduction mechanism.

Week 1–2: Protocol Development and Registration

This is where most bias is either prevented or guaranteed. A pre-registered protocol commits you to your research question, eligibility criteria, and analysis plan before you see the data. PROSPERO (International Prospective Register of Systematic Reviews) is the standard registration platform for health and social science SLRs.

- Formulate your PICO question: Population, Intervention, Comparison, Outcome. Vague questions produce biased searches. For non-clinical reviews, adapt to SPIDER (Sample, Phenomenon of Interest, Design, Evaluation, Research type).

- Define eligibility criteria explicitly: Specify inclusion and exclusion criteria before searching. Document the rationale for each criterion.

- Select your databases: A minimum of three databases is standard practice. For health-related reviews, this typically means MEDLINE/PubMed, Web of Science, and Scopus, plus at least one specialist database relevant to your field. Reviews in other disciplines should swap in the core indexes for their own area.

- Register on PROSPERO: Registration takes 2–3 working days for approval. Build this into your timeline. The registration record becomes a permanent public audit trail.

- Establish your citation management system: Zotero, Mendeley, or EndNote are the most widely adopted tools. The Zotero Quick Start Guide is an excellent entry point if you haven’t set up a reference manager yet.

For a broader foundation on research paradigms and design choices that feed into protocol development, the Research Methodology Guide 2026 covers sampling, ethics, and reproducibility considerations that directly inform what belongs in your SLR protocol.

Week 3: Search Strategy Development

Your search string is your most consequential methodological decision. Too narrow, and you introduce selection bias. Too broad, and you drown in irrelevant results while introducing noise.

A peer-reviewed search strategy — reviewed by a medical librarian or information specialist — reduces search bias significantly. A 2016 study in the Journal of the Medical Library Association found that librarian-developed search strategies retrieved 37% more relevant studies than researcher-developed ones alone.

Use Boolean operators systematically: AND narrows, OR broadens. Build your string in blocks matching your PICO components, then combine. Document every term, truncation symbol, and database-specific syntax variation.
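The block-building approach can be sketched in a few lines of Python. This is a minimal illustration, not a database-ready query: the PICO terms are hypothetical, the helper names `build_block` and `build_search_string` are made up for this example, and truncation symbols and phrase quoting differ between databases, so the output still needs adapting to each platform's syntax.

```python
def build_block(terms):
    """OR together the synonyms for one concept, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_search_string(blocks):
    """AND together the concept blocks, skipping any block left empty."""
    return " AND ".join(build_block(terms) for terms in blocks.values() if terms)


# Hypothetical PICO blocks, for illustration only.
pico_blocks = {
    "population": ["adolescent*", "teenager*"],
    "intervention": ["cognitive behavioural therapy", "CBT"],
    "comparison": [],  # left open: no comparator restriction
    "outcome": ["depress*", "low mood"],
}

search_string = build_search_string(pico_blocks)
print(search_string)
# (adolescent* OR teenager*) AND ("cognitive behavioural therapy" OR CBT) AND (depress* OR "low mood")
```

Keeping the terms in a structure like `pico_blocks` also gives you the documentation trail for free: the dictionary is the record of every term used in each block.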

Week 4: Database Searching and Grey Literature

Run your searches across all pre-specified databases on the same day where possible — this prevents version drift in records. Export all results to your reference manager immediately and create a deduplicated master file.
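Reference managers and screening tools handle deduplication for you, but it helps to understand the matching logic so you can report it. The sketch below is one simple approach, assuming each exported record is a dictionary with `doi`, `title`, and `year` fields. The field names, the sample records, and the DOIs are all hypothetical. It treats two records as duplicates when they share a DOI or share a normalised title and year.

```python
import re


def normalise_title(title):
    """Lowercase a title and strip everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", title.lower())


def record_keys(record):
    """Return every key under which a record can be matched."""
    keys = []
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        keys.append(("doi", doi))
    title = normalise_title(record.get("title") or "")
    if title:
        keys.append(("title", title, record.get("year")))
    return keys


def deduplicate(records):
    """Keep the first record seen for each key; return (unique, duplicates)."""
    seen = set()
    unique, duplicates = [], []
    for record in records:
        keys = record_keys(record)
        if any(key in seen for key in keys):
            duplicates.append(record)
        else:
            unique.append(record)
        seen.update(keys)
    return unique, duplicates


# Made-up records and DOIs, for illustration only.
records = [
    {"source": "PubMed", "doi": "10.1000/ABC123", "title": "Sleep and Mood: A Trial", "year": 2019},
    {"source": "Scopus", "doi": "10.1000/abc123", "title": "Sleep and mood: a trial.", "year": 2019},
    {"source": "Web of Science", "doi": None, "title": "Sleep and Mood - A Trial", "year": 2019},
    {"source": "PubMed", "doi": "10.1000/xyz789", "title": "Exercise and Anxiety", "year": 2021},
]

unique, duplicates = deduplicate(records)
print(f"{len(records)} retrieved, {len(duplicates)} duplicates removed, {len(unique)} unique")
```

The duplicate count is worth saving alongside the master file, since the PRISMA flow diagram asks for the number of records removed before screening. Exact matching like this will miss duplicates whose titles differ by more than punctuation, so a manual spot check is still sensible.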

Grey literature sources to include: ProQuest Dissertations & Theses, OpenGrey, Google Scholar (first 10 pages), CORE, and relevant governmental or institutional repositories. This is the step that separates a thorough SLR from one that will get flagged for publication bias at peer review.

Week 5: Title/Abstract Screening

Dual independent screening — two reviewers screening independently — is the methodological gold standard for reducing selection bias. Use a screening tool like Covidence, Rayyan, or Nested Knowledge. Calculate inter-rater reliability using Cohen’s kappa (κ ≥ 0.60 is generally considered acceptable; κ ≥ 0.80 is strong).
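Screening platforms usually report kappa for you, but the calculation is short enough to check by hand. Cohen's kappa compares the observed agreement p_o with the agreement p_e expected by chance, as κ = (p_o − p_e) / (1 − p_e). The sketch below is a minimal standard-library version; the function name and the ten example decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two reviewers rating the same records."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both reviewers must rate the same non-empty set of records.")
    n = len(ratings_a)
    # Observed agreement: share of records given the same label by both reviewers.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement: product of each reviewer's marginal label proportions.
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both reviewers used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical title/abstract decisions for ten records.
reviewer_a = ["include"] * 4 + ["exclude"] * 6
reviewer_b = ["include"] * 3 + ["exclude"] * 6 + ["include"]

kappa = cohens_kappa(reviewer_a, reviewer_b)
print(f"kappa = {kappa:.2f}")  # kappa = 0.58
```

In this made-up example the reviewers agree on 8 of 10 records, yet kappa lands just under 0.60 because half of that agreement would be expected by chance. That is the kind of result that signals the eligibility criteria need another calibration round.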

What researchers underestimate: the pilot screening phase. Screen 50–100 records together before independent screening begins. This calibrates both reviewers to the eligibility criteria and reduces systematic disagreement.

Week 6: Full-Text Screening and Eligibility

Apply your eligibility criteria to full texts. Every exclusion at this stage must be documented with a reason — this feeds directly into your PRISMA flow diagram. Disagreements should be resolved through discussion, not majority vote. If genuine uncertainty persists, a third reviewer arbitrates.

Document all studies excluded at this stage. Reviewers who later request your exclusion list (and they will) deserve a transparent record.
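If the full-text decisions are logged as data rather than in free-text notes, the flow-diagram numbers can be derived instead of counted by hand. The sketch below is a simplified illustration: the function name, record IDs, counts, and exclusion reasons are all hypothetical, and it leaves out the "reports not retrieved" box that the PRISMA 2020 diagram also includes.

```python
from collections import Counter


def prisma_counts(identified, duplicates_removed, excluded_at_screening, full_text_decisions):
    """Derive flow-diagram counts from raw screening numbers.

    full_text_decisions maps each record ID to an exclusion reason,
    or to None when the study was included.
    """
    screened = identified - duplicates_removed
    assessed = screened - excluded_at_screening
    # Guard against undocumented decisions: every full text needs a logged outcome.
    if assessed != len(full_text_decisions):
        raise ValueError(
            f"{assessed} records reached full text but "
            f"{len(full_text_decisions)} decisions were logged"
        )
    reasons = Counter(r for r in full_text_decisions.values() if r is not None)
    included = sum(1 for r in full_text_decisions.values() if r is None)
    return {
        "identified": identified,
        "duplicates_removed": duplicates_removed,
        "screened": screened,
        "excluded_at_screening": excluded_at_screening,
        "assessed_full_text": assessed,
        "excluded_full_text": dict(reasons),
        "included": included,
    }


# Hypothetical numbers and reasons, for illustration only.
decisions = {
    "S01": None, "S02": "wrong population", "S03": None, "S04": "wrong outcome",
    "S05": "wrong population", "S06": None, "S07": "not primary research", "S08": None,
}
flow = prisma_counts(identified=412, duplicates_removed=96,
                     excluded_at_screening=308, full_text_decisions=decisions)
for box, value in flow.items():
    print(f"{box}: {value}")
```

The consistency check is the useful part: if the numbers do not reconcile, the function fails loudly instead of letting a gap reach the published diagram.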

Week 7: Data Extraction and Quality Assessment

Use a standardised data extraction form, piloted on 5–10% of included studies before full extraction. Both reviewers should extract independently for a random sample (minimum 20%) to check extraction reliability.
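Choosing the dual-extraction sample by hand invites the very selection effects the check is meant to catch. One option is to draw it with a seeded random number generator so the selection is reproducible and can be reported. The sketch below assumes hypothetical study IDs and an arbitrary seed; both the function name and the defaults are illustrative.

```python
import math
import random


def dual_extraction_sample(study_ids, fraction=0.20, seed=2024):
    """Draw a reproducible random sample of studies for independent dual extraction."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in the interval (0, 1]")
    # Round up so the sample never falls below the stated minimum share.
    sample_size = math.ceil(len(study_ids) * fraction)
    rng = random.Random(seed)  # a fixed seed makes the draw repeatable
    # Sort first so the result does not depend on the input order.
    return sorted(rng.sample(sorted(study_ids), sample_size))


# Hypothetical IDs for 32 included studies.
study_ids = [f"S{i:02d}" for i in range(1, 33)]
sample = dual_extraction_sample(study_ids)
print(f"{len(sample)} of {len(study_ids)} studies selected:", sample)
```

Recording the seed in the protocol or methods section lets anyone regenerate the same sample.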

Risk of bias assessment uses domain-specific tools. For randomised controlled trials: Cochrane RoB 2.0. For observational studies: ROBINS-I or Newcastle-Ottawa Scale. For qualitative studies: CASP (Critical Appraisal Skills Programme) tools.

This is also where you consult the Cochrane Handbook for Systematic Reviews of Interventions — specifically Chapters 7 and 8 on data collection and bias assessment. It’s the definitive reference, not just a recommended read.

Week 8: Synthesis, Reporting, and Bias-of-Bias Assessment

Narrative synthesis or meta-analysis — the choice depends on study heterogeneity. For quantitative pooling, report I² statistics: values above 75% suggest substantial heterogeneity that calls for subgroup analysis, not simple pooling.
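For reference, I² is derived from Cochran's Q as I² = max(0, (Q − df) / Q) × 100, where df is the number of studies minus one. Meta-analysis software reports it automatically; the sketch below only makes the arithmetic visible, using inverse-variance weights and four made-up effect sizes.

```python
def heterogeneity(effects, std_errors):
    """Return the fixed-effect pooled estimate, Cochran's Q, and I² (as a percentage)."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("Need matching effect sizes and standard errors for at least two studies.")
    weights = [1 / se**2 for se in std_errors]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted sum of squared deviations from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, q, i_squared


# Hypothetical standardised mean differences, each with standard error 0.1.
effects = [0.1, 0.3, 0.5, 0.7]
std_errors = [0.1, 0.1, 0.1, 0.1]

pooled, q, i_squared = heterogeneity(effects, std_errors)
print(f"pooled = {pooled:.2f}, Q = {q:.1f}, I² = {i_squared:.0f}%")
# pooled = 0.40, Q = 20.0, I² = 85%
```

Here Q is 20 on 3 degrees of freedom, so I² is 85%: well above the 75% mark, and a pooled estimate of 0.40 would hide the fact that the individual studies range from 0.1 to 0.7.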

Run a funnel plot analysis if you have ≥10 studies for a given outcome — asymmetry suggests publication bias. Egger’s test provides a formal statistical check.
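Egger's test is a linear regression of each study's standardised effect (effect divided by its standard error) on its precision (one divided by the standard error). An intercept far from zero indicates funnel plot asymmetry. The sketch below computes the intercept and its t-statistic with the standard library only; the ten effect sizes and standard errors are invented for illustration. Turning the t-statistic into a p-value needs a t-distribution with n − 2 degrees of freedom, which is left to your statistics package.

```python
import math


def egger_regression(effects, std_errors):
    """Return (intercept, intercept standard error, t-statistic, degrees of freedom)."""
    n = len(effects)
    if n != len(std_errors) or n < 3:
        raise ValueError("Need matching inputs for at least three studies.")
    precision = [1 / se for se in std_errors]
    standardised = [y / se for y, se in zip(effects, std_errors)]
    x_mean = sum(precision) / n
    z_mean = sum(standardised) / n
    sxx = sum((x - x_mean) ** 2 for x in precision)
    if sxx == 0:
        raise ValueError("Standard errors must differ between studies.")
    sxz = sum((x - x_mean) * (z - z_mean) for x, z in zip(precision, standardised))
    slope = sxz / sxx
    intercept = z_mean - slope * x_mean
    # Residual variance and the usual OLS standard error of the intercept.
    rss = sum((z - intercept - slope * x) ** 2 for x, z in zip(precision, standardised))
    residual_variance = rss / (n - 2)
    se_intercept = math.sqrt(residual_variance * (1 / n + x_mean**2 / sxx))
    t_stat = intercept / se_intercept if se_intercept > 0 else float("nan")
    return intercept, se_intercept, t_stat, n - 2


# Hypothetical data: smaller studies (larger SE) report larger effects.
effects = [0.20, 0.25, 0.22, 0.35, 0.30, 0.45, 0.50, 0.62, 0.70, 0.85]
std_errors = [0.05, 0.06, 0.08, 0.10, 0.12, 0.15, 0.18, 0.22, 0.25, 0.30]

intercept, se_intercept, t_stat, df = egger_regression(effects, std_errors)
print(f"intercept = {intercept:.2f}, t = {t_stat:.2f}, df = {df}")
```

The test has low power with few studies, which is one reason for the ten-study threshold above. Treat a non-significant result as absence of evidence for asymmetry, not as evidence that publication bias is absent.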

Complete your PRISMA 2020 flow diagram and checklist. This is non-negotiable for submission to any peer-reviewed journal and increasingly required by postgraduate examiners.

PRISMA 2020 and Transparent Reporting Standards

PRISMA 2020 — Preferred Reporting Items for Systematic Reviews and Meta-Analyses — was updated from the 2009 version to address exactly the bias types we’ve discussed. The revision added 12 new items, including requirements for registering your protocol, searching grey literature, and assessing certainty of evidence using GRADE.

The PRISMA 2020 checklist contains 27 items across seven sections: title, abstract, introduction, methods, results, discussion, and other information. Every item maps to a specific bias risk. Working through it methodically isn’t box-ticking — it’s a final bias audit.

Here’s where it gets interesting: the PRISMA 2020 update explicitly addresses automation and machine-learning tools in screening. If you’re using AI-assisted title/abstract screening (an increasingly common practice), PRISMA now requires you to report this and validate its performance against human screening on a subset of records.

For reproducibility in your reporting and across the entire research cycle, the principles outlined in Research Methodology Tips for Reproducibility align directly with PRISMA’s transparency requirements — particularly around data management and pre-registration.

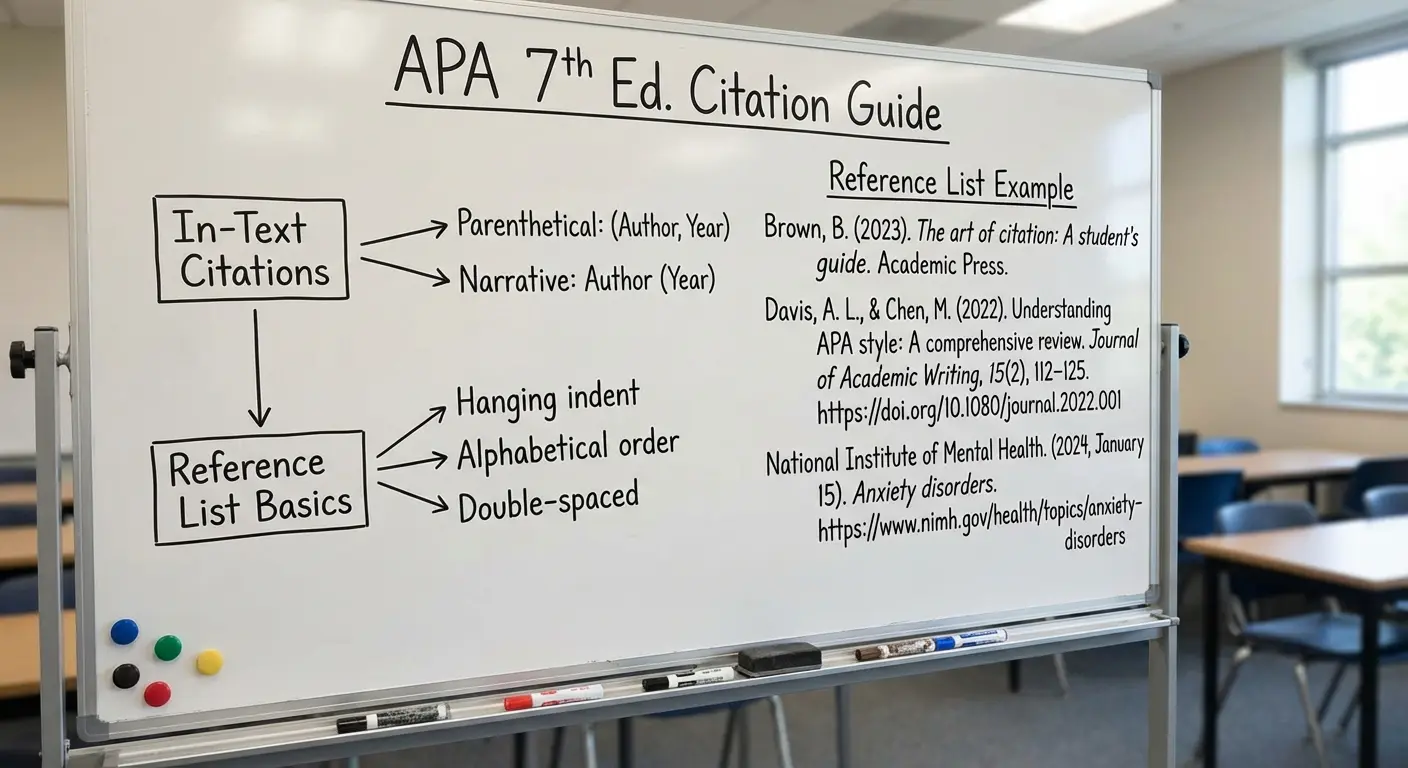

How Citation Standards Reduce Reporting Bias

Citation management isn’t a clerical task. It’s a methodological one — and conflating the two is a mistake that introduces subtle reporting bias into otherwise rigorous SLRs.

Consider this: when you cite a study, you’re making an epistemological claim about what counts as evidence in your field. Inconsistent citation practices — pulling quotes out of context, citing abstracts instead of full texts, failing to distinguish between primary and secondary sources — create a distorted picture of the evidence base.

APA 7th edition, the dominant standard in psychology, education, and health sciences, introduced specific guidance on citing systematic reviews, grey literature, and data sets. MLA 9th edition reformed its container-based citation model to accommodate the same. For those working in history or the humanities, Chicago 17th edition’s footnote system remains the standard — but it equally requires source transparency.

The practical bias-reduction rule: always cite the primary source. Secondary citation (citing a study via another review that cited it) is a direct path to citation bias — you’re trusting someone else’s reading of a study you haven’t read yourself.

The Research Methodology: Standardize Citations 2025 guide covers APA, MLA, Chicago, and Harvard standardisation in detail — including the most common pitfalls that introduce ambiguity into reference lists and erode the transparency that peer reviewers expect.

The Purdue OWL APA Style Guide remains one of the most widely consulted free references for citation formatting in English-speaking academic institutions.

Research Databases and Tools for a Bias-Aware SLR

Your tool choices shape your bias exposure. Here’s an honest assessment of the main options.

| Tool/Database | Best For | Bias Risk if Excluded | Free Access? |
|---|---|---|---|
| PubMed/MEDLINE | Health sciences, biomedical | High (largest biomedical index) | Yes |
| Web of Science | Cross-disciplinary, citation analysis | Moderate-High | Institutional only |
| Scopus | STEM and social sciences | Moderate-High | Institutional only |
| JSTOR | Humanities, social sciences, archives | Moderate (historical literature) | Partial free access |
| Google Scholar | Grey literature, preprints, broad coverage | High for grey literature gaps | Yes |
| Covidence | Dual screening management | N/A (process tool) | Free trial; paid |
| Rayyan | Title/abstract screening collaboration | N/A (process tool) | Free |

A note on Google Scholar: it’s not a substitute for structured database searching. It offers no formal search-history export, and its results are ranked by an opaque algorithm rather than drawn from a structured index, so it can’t replace PubMed or Web of Science. As a supplementary source for grey literature and preprints, which have grown in importance since 2020, it remains irreplaceable.

The Wellcome Trust’s report on research culture found that 83% of researchers felt pressure to publish in prestigious journals — which directly feeds publication bias. Understanding the systemic pressures that create bias helps you account for them structurally, rather than assuming the literature is neutral.

Bias-Reduction Checklist for Your 8-Week SLR

Fair warning: this takes genuine effort. But working through each item once protects you from reviewer comments that could delay publication for months.

Protocol Phase (Weeks 1–2)

- ☐ PICO/SPIDER question documented in writing

- ☐ Eligibility criteria defined before any searching

- ☐ Protocol registered on PROSPERO or equivalent

- ☐ Minimum three databases pre-specified

- ☐ Grey literature sources identified and listed

- ☐ Citation manager configured; deduplication workflow established

Search Phase (Weeks 3–4)

- ☐ Search strings peer-reviewed (ideally by information specialist)

- ☐ Searches run on same date across all databases

- ☐ Search history exported and saved (with date stamps)

- ☐ Grey literature searched: ProQuest, OpenGrey, Google Scholar, institutional repositories

- ☐ Reference lists of included studies hand-searched

Screening Phase (Weeks 5–6)

- ☐ Pilot screening completed (50+ records, both reviewers)

- ☐ Cohen’s kappa calculated (target ≥ 0.60)

- ☐ All exclusions at full-text stage documented with reasons

- ☐ PRISMA flow diagram drafted from screening numbers

Extraction and Synthesis Phase (Weeks 7–8)

- ☐ Standardised extraction form piloted on 5–10% of studies

- ☐ Risk of bias assessed using appropriate tool (RoB 2.0, ROBINS-I, CASP)

- ☐ Funnel plot analysis run (if ≥10 studies per outcome)

- ☐ GRADE certainty of evidence assessed

- ☐ PRISMA 2020 checklist completed (all 27 items)

- ☐ All citations verified against primary sources — no secondary citation

Frequently Asked Questions

What is the most common source of bias in systematic literature reviews?

Publication bias is consistently cited as the most pervasive source — journals historically favour positive results, which means unpublished null or negative findings create a systematically skewed evidence base. Selection bias in the screening phase is the second most common, arising when eligibility criteria are applied inconsistently or retrospectively revised after searching has begun.

Do I need two reviewers for every stage of a systematic literature review?

Dual independent review is the expected standard at the title/abstract and full-text screening stages under Cochrane guidance, and PRISMA 2020 asks you to report how many reviewers screened each record and whether they worked independently. For data extraction, dual extraction is the ideal but is sometimes pragmatically replaced by single extraction with verification, provided this is transparently reported as a limitation. Solo reviews should always justify the decision and document additional bias safeguards.

Is PRISMA 2020 required for all systematic reviews?

PRISMA 2020 is not legally required, but over 1,000 journals now mandate it as a condition of submission. Failure to follow PRISMA standards is the leading reason peer reviewers request major revisions or reject SLRs outright. Treating PRISMA as optional is a high-risk methodological strategy.

How does citation bias differ from publication bias in a systematic review?

Publication bias refers to the selective publication of studies based on their results — a problem at the primary research level. Citation bias occurs during the review process itself, when reviewers disproportionately cite studies that confirm their hypotheses while overlooking contradictory evidence. Both skew conclusions, but citation bias is under the reviewer’s direct control and is addressed through systematic search strategies and transparent reference management.

Can AI tools introduce new forms of bias into systematic reviews?

Yes — AI-assisted screening tools can introduce algorithmic bias if training data doesn’t represent the full disciplinary literature, or if the tool’s performance isn’t validated against human screening. PRISMA 2020 now requires researchers to report any use of automation in the search or screening phases, including validation methods. AI can reduce human fatigue-related bias while potentially introducing new systematic errors — transparency is the key safeguard.

What is PROSPERO and why should I register my review protocol there?

PROSPERO is the International Prospective Register of Systematic Reviews, hosted by the University of York’s Centre for Reviews and Dissemination. Registering your protocol before searching creates a public, timestamped record of your original research question and methods — preventing post-hoc changes that would constitute reporting bias. Registration also demonstrates methodological rigour to peer reviewers and thesis examiners.

Build Your Research Methodology Foundation

Reducing bias in a systematic literature review is inseparable from the broader discipline of rigorous research methodology and citation standards. These resources extend what you’ve read here into a full framework:

- Research Methodology Guide 2026 — Covers research paradigms, design, sampling, ethics, and reproducibility: the foundational layer beneath every well-designed SLR.

- Standardise Your Citations in 2025 — APA 7th, MLA 9th, Chicago, and Harvard citation standards explained with practical guidance on avoiding reference bias and ensuring source transparency.

- Reproducibility Tips for Researchers — Data management, transparent reporting practices, and study design principles that align with PRISMA’s reproducibility requirements.

If this guide was useful, share it with a colleague running their first SLR — or bookmark it for your research toolkit. The methodology community grows stronger when rigorous, open resources circulate freely.

References

- Dwan, K., Gamble, C., Williamson, P. R., & Kirkham, J. J. (2013). Systematic review of the empirical evidence of study publication bias and outcome reporting bias — an updated review. PLOS ONE, 8(7), e66844. https://doi.org/10.1371/journal.pone.0066844

- Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.). (2022). Cochrane Handbook for Systematic Reviews of Interventions (Version 6.3). Cochrane. https://training.cochrane.org/handbook/current

- Ioannidis, J. P. A., Fanelli, D., Dunne, D. D., & Goodman, S. N. (2021). Meta-research: Why research on research matters. PLOS Biology, 19(3), e3001227.

- Page, M. J., McKenzie, J. E., Bossuyt, P. M., Boutron, I., Hoffmann, T. C., Mulrow, C. D., … Moher, D. (2021). The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. BMJ, 372, n71. https://doi.org/10.1136/bmj.n71

- Sampson, M., McGowan, J., Cogo, E., Grimshaw, J., Moher, D., & Lefebvre, C. (2009). An evidence-based practice guideline for the peer review of electronic search strategies. Journal of Clinical Epidemiology, 62(9), 944–952.

- Wellcome Trust. (2020). What researchers think about the culture they work in. https://wellcome.org/reports/what-researchers-think-about-research-culture
