Quantitative Research Methods: A Step-by-Step Guide for 2026

When your research question demands numerical evidence — effect sizes, frequencies, relationships, or predictions — quantitative research methods provide the systematic framework for collecting, measuring, and analysing data with the rigour that peer review demands. From psychology and economics to public health and educational research, quantitative methods generate findings that can be statistically tested and, under the right conditions, generalised to broader populations. This 2026 guide takes you through every stage of a quantitative study, from framing a hypothesis to interpreting inferential statistics.

Understanding quantitative research methods is not simply about knowing which statistical test to run. It requires a coherent chain of reasoning from your research question and theoretical framework, through your design decisions and measurement instruments, to your analysis and interpretation. A statistically significant result in a poorly designed study is worth very little. This guide teaches the whole chain.

Foundations of Quantitative Research

Quantitative research operates from a positivist or post-positivist philosophical foundation: it assumes that reality exists independently of the observer, that it can be measured, and that objective knowledge can be generated through rigorous methodology. Its goals are to describe, explain, predict, and in some designs, control phenomena.

The logical structure of most quantitative studies is hypothetico-deductive: you begin with an established theory, derive a specific testable hypothesis, collect data to test it, and draw conclusions. This deductive process contrasts with the inductive, theory-building logic of qualitative approaches. When you need to combine both, see our mixed methods research guide.

Quantitative research is appropriate when:

- Your research question asks “how much,” “how many,” “how often,” or “to what extent.”

- You need to test a causal or correlational hypothesis.

- You want to generalise findings to a defined population.

- Your constructs can be operationalised — translated into measurable variables.

- Replicability is essential (clinical trials, policy evaluation, experimental psychology).

The Four Main Quantitative Research Designs

1. Descriptive Research

Descriptive designs answer “what” questions without manipulating variables. They describe the characteristics of a population or phenomenon at a single point in time (cross-sectional) or over time (longitudinal). Surveys and structured observation are the primary data collection tools. Descriptive research cannot establish causality — it identifies patterns and relationships that might be tested causally in experimental designs.

2. Correlational Research

Correlational designs measure the relationship between two or more variables without manipulating any of them. The researcher measures variables as they naturally occur and calculates a correlation coefficient (ranging from -1 to +1) to express the strength and direction of the association. Key limitation: correlation does not imply causation. A third variable (a confound) may drive both measures, or the causal direction may run opposite to the one assumed.
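To show what the coefficient actually computes, here is a minimal Python sketch of Pearson's r. The function name `pearson_r` and the study-hours data are hypothetical, chosen only for illustration. r is the sum of cross-products of deviations from the means, divided by the square root of the product of the two sums of squared deviations.

```python
import math


def pearson_r(x, y):
    """Pearson's r: how x and y vary together, scaled by how much each varies alone."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be equal-length sequences with at least 2 values")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sum of cross-products of deviations from the means
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    # Sums of squared deviations (the n - 1 divisors cancel in the ratio, so they are omitted)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data: weekly study hours and exam scores for six students
hours = [2, 4, 5, 7, 8, 10]
scores = [55, 60, 62, 70, 74, 80]
print(f"r = {pearson_r(hours, scores):.3f}")  # close to +1: a strong positive association
```

On Python 3.10 or later, `statistics.correlation(hours, scores)` returns the same value. A high r here still says nothing about whether studying causes higher scores.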

3. Experimental Research

Experimental designs manipulate at least one independent variable (IV) and measure the effect on a dependent variable (DV), with random assignment of participants to conditions. Random assignment is the feature that allows causal inference: it balances groups, in expectation, on all variables (known and unknown) before the manipulation, so pre-existing differences cannot systematically explain the result. True experiments represent the gold standard for establishing causality in most social and medical sciences.

4. Quasi-Experimental Research

Quasi-experiments have an intervention or comparison condition but lack random assignment. They are common in educational and public policy research, where randomisation is impractical or unethical. Designs include pre-test/post-test with a comparison group, regression discontinuity, and interrupted time series. Quasi-experimental findings are more vulnerable to confounding than true experiments but generally offer stronger internal validity than purely correlational designs.

Sampling Methods and Sample Size

Quantitative research aims to generalise from a sample to a population. The quality of this generalisation depends on how the sample was drawn.

Probability Sampling

Every member of the population has a known, non-zero probability of being selected. This supports statistical generalisation. Types:

- Simple random sampling: Each unit randomly selected from the full population list. Most straightforward; requires a complete sampling frame.

- Stratified random sampling: Population divided into subgroups (strata) by a relevant variable (age, gender); random sample drawn from each stratum. Improves representation of minority subgroups.

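- Cluster sampling: Population divided into naturally occurring clusters (schools, hospitals); whole clusters randomly selected. Reduces cost but usually increases sampling error compared with a simple random sample of the same size, because units within a cluster tend to resemble one another.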

- Systematic sampling: Every nth member of the list selected after a random starting point.
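The four probability designs can be sketched in a few lines of Python. This is an illustrative sketch, not a survey-sampling library: the helper names, the 1,000-student sampling frame, and the choice of proportionate allocation for the stratified design are all assumptions. The systematic design uses a sampling interval k = N // n with a random start inside the first interval.

```python
import random
from collections import defaultdict


def simple_random_sample(frame, n, rng):
    """Every unit has the same chance of selection (drawn without replacement)."""
    return rng.sample(frame, n)


def stratified_sample(frame, stratum_of, fraction, rng):
    """Proportionate stratified sampling: the same fraction drawn at random within each stratum."""
    strata = defaultdict(list)
    for unit in frame:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for units in strata.values():
        k = max(1, round(len(units) * fraction))  # at least one unit per stratum
        sample.extend(rng.sample(units, k))
    return sample


def cluster_sample(frame, cluster_of, n_clusters, rng):
    """Randomly select whole clusters, then keep every unit inside them."""
    clusters = defaultdict(list)
    for unit in frame:
        clusters[cluster_of(unit)].append(unit)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [unit for c in chosen for unit in clusters[c]]


def systematic_sample(frame, n, rng):
    """Random start within the first interval k = N // n, then every k-th unit."""
    k = len(frame) // n
    start = rng.randrange(k)
    return [frame[start + i * k] for i in range(n)]


# Hypothetical sampling frame: 1,000 students across 20 schools and 4 year groups
frame = [{"id": i, "school": f"S{i % 20:02d}", "year": 1 + i % 4} for i in range(1000)]
rng = random.Random(2026)  # fixed seed so the draw can be reproduced

srs = simple_random_sample(frame, 50, rng)
strat = stratified_sample(frame, lambda u: u["year"], 0.10, rng)
clus = cluster_sample(frame, lambda u: u["school"], 3, rng)
syst = systematic_sample(frame, 50, rng)
print(len(srs), len(strat), len(clus), len(syst))  # 50 100 150 50
```

Note how the cluster sample is the largest yet covers only three schools, which is why it tends to carry more sampling error than a simple random sample.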

Non-Probability Sampling

Used when a complete sampling frame is unavailable or when convenience necessitates it. Types include convenience sampling, purposive sampling, and snowball sampling. Findings cannot be statistically generalised, but non-probability samples are acceptable for pilot studies, student dissertations, and exploratory research.

Determining Sample Size

Sample size should be calculated using a power analysis before data collection begins. Key inputs: the expected effect size (often drawn from prior literature), the desired statistical power (conventionally 0.80), and the significance level (typically α = 0.05). Free tools like G*Power make power analysis accessible to students. An underpowered study risks missing a real effect (Type II error); an overpowered study wastes resources and can detect trivially small effects as “significant.”
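As a rough cross-check on what a power tool reports, the per-group sample size for comparing two independent means can be approximated with the normal-distribution formula n = 2(z₁₋α/₂ + z_power)² / d². The sketch below uses only the Python standard library; the function name `n_per_group` is hypothetical. Because it uses the normal rather than the noncentral t distribution, it comes out slightly below G*Power's exact answer (G*Power gives 64 per group for d = 0.5, α = .05, power = .80), so treat it as a sanity check, not a replacement.

```python
import math
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided comparison of two independent means.

    Normal approximation: n = 2 * (z_{1 - alpha/2} + z_{power})**2 / d**2,
    rounded up to the next whole participant.
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = .05
    z_power = z.inv_cdf(power)          # about 0.84 for power = .80
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


# Cohen's conventional small, medium, and large effects
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} per group")
```

Halving the expected effect size roughly quadruples the required sample, which is why a realistic effect-size estimate from prior literature matters so much.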

Measurement: Variables, Scales, and Instruments

Types of Variables

- Independent variable (IV): The variable the researcher manipulates or uses to predict/explain.

- Dependent variable (DV): The variable measured as the outcome or effect.

- Control variable: A variable held constant or statistically controlled to reduce confounding.

- Moderator: A variable that changes the strength or direction of the IV–DV relationship.

- Mediator: A variable through which the IV affects the DV — the mechanism of effect.

Levels of Measurement

| Level | Properties | Example | Appropriate Statistics |
|---|---|---|---|
| Nominal | Categories only | Gender, nationality | Mode, chi-square |
| Ordinal | Ordered categories | Likert item (Strongly Disagree–Strongly Agree) | Median, Mann-Whitney U |
| Interval | Equal intervals, no true zero | Temperature (°C), IQ score | Mean, t-test, ANOVA |
| Ratio | Equal intervals, true zero | Height, income, reaction time | All parametric tests |

The level of measurement determines which statistical tests are appropriate. Running parametric tests (which assume interval/ratio data) on ordinal data, such as a single Likert item, without justifying that choice is one of the most common methodological errors in student research.

Survey Design and Construction

Surveys are the most widely used quantitative data collection instrument. Well-designed surveys produce clean, analysable data; poorly designed surveys produce noise.

Key principles of good survey design:

- One idea per question. Double-barrelled questions (“Do you find the course useful and well-organised?”) make responses uninterpretable.

- Avoid leading questions. “Don’t you think the policy is harmful?” is not neutral.

- Likert scales should be symmetric. Five or seven response options, with a neutral midpoint, are standard. Use the same scale orientation consistently.

- Validated instruments are preferable. Where established, psychometrically validated questionnaires exist (e.g., PHQ-9 for depression, Maslach Burnout Inventory), use them rather than creating your own.

- Pilot test before full deployment. A small pilot (10–20 participants) identifies ambiguous questions and technical issues and gives an estimate of completion time.

- Minimise social desirability bias. For sensitive topics, use anonymous surveys and validated indirect question formats.

Experimental Design

A well-designed experiment controls sources of bias at every stage. Key design features:

- Randomisation: Participants randomly assigned to conditions. This is the cornerstone of causal inference.

- Control condition: A comparison group receiving no treatment, a placebo, or standard care.

- Blinding: Single-blind (participants unaware of condition) or double-blind (both participants and assessors unaware) designs reduce expectancy effects.

- Manipulation check: Verify that the IV actually produced the intended difference between conditions (e.g., a stress induction procedure should produce higher cortisol in the experimental group).

- Pre-registration: Register your hypothesis, design, and analysis plan in a public repository such as the Open Science Framework (OSF.io) before data collection. A growing number of journals encourage or require this, and it reduces the risk of p-hacking (trying analyses until one reaches significance).
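Random assignment itself is simple to implement. Below is a minimal Python sketch of simple random assignment with near-equal group sizes. The function name `randomly_assign` and the participant IDs are hypothetical. Clinical trials typically go further (blocked or stratified randomisation, concealed allocation), so treat this as an illustration of the principle rather than a trial-grade procedure.

```python
import random


def randomly_assign(participant_ids, conditions, seed=None):
    """Simple random assignment with group sizes differing by at most one.

    Participants are shuffled, then dealt into the conditions in turn, so the
    condition a participant lands in depends only on the random shuffle.
    """
    rng = random.Random(seed)  # a recorded seed lets the allocation be audited later
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    groups = {condition: [] for condition in conditions}
    for i, pid in enumerate(shuffled):
        groups[conditions[i % len(conditions)]].append(pid)
    return groups


# Hypothetical example: 20 participants, a control and a treatment condition
ids = [f"P{n:02d}" for n in range(1, 21)]
allocation = randomly_assign(ids, ["control", "treatment"], seed=42)
for condition, members in allocation.items():
    print(condition, len(members), members)
```

Recording the seed alongside a pre-registration makes the allocation reproducible by reviewers.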

Statistical Analysis: From Descriptive to Inferential

Descriptive Statistics

Always report descriptive statistics before inferential analysis: means (or medians for non-normal data), standard deviations, ranges, and frequency distributions. These give a clear picture of what the data look like and help identify outliers, data entry errors, and violations of statistical assumptions.
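A minimal sketch of a descriptive summary using Python's standard `statistics` module. The `describe` helper and the reaction-time data are hypothetical, and the outlier rule (Tukey's 1.5 × IQR fences) is one common convention swapped in for illustration, not the only option.

```python
from statistics import mean, median, quantiles, stdev


def describe(data):
    """Basic descriptive statistics plus Tukey's 1.5 x IQR outlier fences."""
    q1, _, q3 = quantiles(data, n=4)  # quartiles (default 'exclusive' method)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {
        "n": len(data),
        "mean": mean(data),
        "median": median(data),
        "sd": stdev(data),  # sample SD (n - 1 denominator)
        "range": (min(data), max(data)),
        "outliers": [x for x in data if x < low or x > high],
    }


# Hypothetical reaction times (ms); 2750 may be a data-entry error
rts = [412, 398, 455, 430, 401, 389, 2750, 420]
summary = describe(rts)
for key, value in summary.items():
    print(key, value)
```

The single extreme value drags the mean far above the median, which is exactly the kind of problem a descriptive pass should catch before any inferential test is run.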

Inferential Statistics: Choosing the Right Test

| Scenario | Appropriate Test |
|---|---|
| Compare two independent groups on a continuous DV | Independent-samples t-test |
| Compare one group before and after an intervention | Paired-samples t-test |
| Compare three or more independent groups on a continuous DV | One-way ANOVA |
| Examine the relationship between two continuous variables | Pearson’s correlation |
| Predict a continuous DV from one or more predictors | Linear regression (simple or multiple) |
| Association between two categorical variables | Chi-square test of independence |
| Binary outcome variable (yes/no) | Binary logistic regression |
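To show what one of these tests does under the hood, here is a minimal Python sketch of the chi-square test of independence. The function name and the 2 × 2 counts are hypothetical. The exact p-value is computed only for 1 degree of freedom, where the statistic is a squared standard normal; for larger tables a library such as SciPy (`scipy.stats.chi2_contingency`) is the practical route. Note that SciPy applies Yates' continuity correction to 2 × 2 tables by default, so its statistic will be smaller than this uncorrected one unless `correction=False` is passed.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square test of independence for an r x c table of observed counts.

    Returns (chi2, df, p); p is computed here only for df = 1, otherwise None.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n  # count expected under independence
            chi2 += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    # With 1 df, chi2 is a squared standard normal, so P(X > chi2) = erfc(sqrt(chi2 / 2))
    p = math.erfc(math.sqrt(chi2 / 2)) if df == 1 else None
    return chi2, df, p


# Hypothetical 2 x 2 table: rows = condition (treatment, control), columns = (passed, failed)
observed = [[30, 10],
            [20, 20]]
chi2, df, p = chi_square_independence(observed)
print(f"chi2({df}) = {chi2:.2f}, p = {p:.3f}")
```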

Effect Sizes and Confidence Intervals

A p-value tells you whether a result is unlikely under the null hypothesis; it does not tell you how large or practically important the effect is. Always report effect sizes (Cohen’s d for t-tests, η² for ANOVA, r for correlations) alongside p-values. Report 95% confidence intervals wherever possible. This practice aligns with the APA’s (and most major journals’) reporting standards.
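The relationship between a t statistic and Cohen's d is easiest to see side by side. This is a minimal standard-library sketch for two independent groups, assuming equal variances (Student's t) and using the pooled SD for both quantities; the function name and scores are hypothetical. A p-value or a 95% confidence interval also needs the t distribution, which the standard library lacks; if SciPy is available, `scipy.stats.ttest_ind(a, b)` returns the same t (its default is `equal_var=True`) together with p.

```python
import math
from statistics import mean, variance


def independent_t_and_d(a, b):
    """Student's independent-samples t (equal variances assumed) and Cohen's d.

    Both use the pooled standard deviation; returns (t, df, d).
    """
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    # Pooled SD: df-weighted average of the two sample variances, then square-rooted
    sp = math.sqrt(((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / df)
    diff = mean(a) - mean(b)
    t = diff / (sp * math.sqrt(1 / n1 + 1 / n2))  # mean difference / its standard error
    d = diff / sp                                  # mean difference in pooled-SD units
    return t, df, d


# Hypothetical scores for two small groups
treatment = [5, 6, 7, 8, 9]
control = [3, 4, 5, 6, 7]
t, df, d = independent_t_and_d(treatment, control)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")
```

Because t grows with sample size while d does not, a trivially small d can still produce a "significant" t in a large study; reporting both guards against that misreading.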

Validity and Reliability

- Internal validity: Can the observed effect be attributed to the independent variable rather than to something else? Threatened by confounds, demand characteristics, and attrition.

- External validity: Do the findings generalise beyond the study sample and setting? Threatened by non-representative samples and artificial lab conditions.

- Construct validity: Does your measurement instrument accurately capture the theoretical construct? Assessed through convergent and discriminant validity evidence.

- Reliability: Does the instrument produce consistent results across time (test-retest), across raters (inter-rater), and across items measuring the same construct (internal consistency — Cronbach’s α ≥ .70 is the conventional threshold)?
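Internal consistency can be computed directly from item responses with the formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). A minimal standard-library sketch follows; the function name and the Likert responses are hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))                   # one tuple of scores per item
    totals = [sum(person) for person in responses]  # each respondent's scale total
    item_var_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


# Hypothetical 5-point Likert responses: 6 respondents x 4 items
responses = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 3, 4],
    [1, 2, 1, 2],
    [4, 4, 5, 4],
]
print(f"alpha = {cronbach_alpha(responses):.2f}")
```

When items move together, the variance of the totals is large relative to the summed item variances and α approaches 1; unrelated items push it toward 0.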

Reporting Quantitative Findings

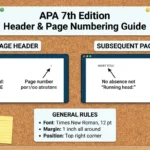

Quantitative results sections should be structured, precise, and complete. The APA Publication Manual provides the reporting standards that most journals follow. Key conventions:

- Report each test statistic with its degrees of freedom, exact p-value, and effect size; for a t-test the format is t(df) = value, p = value, d = value (e.g., t(58) = 3.24, p = .002, d = 0.82).

- Spell out statistical terms in running text (“the mean”) but use their symbols inside parentheses (“M”).

- Use tables for complex results involving multiple variables.

- Use figures (bar charts, scatter plots) to display distributions and relationships.

- Always report exact p-values (p = .034) rather than inequalities (p < .05), except when p < .001.
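If results are generated by a script, the string formatting can be automated so every test is reported the same way. The helper names below are hypothetical; the sketch follows the conventions listed above (three-decimal exact p, "< .001" for very small values, no leading zero for p because it cannot exceed 1, a leading zero kept for d because it can). APA also italicises the statistical symbols, which plain text cannot show.

```python
def format_p(p):
    """APA-style p: exact to three decimals, no leading zero, '< .001' for tiny values."""
    if p < 0.001:
        return "p < .001"
    text = f"{p:.3f}"
    return "p = " + (text[1:] if text.startswith("0") else text)


def format_t_test(t, df, p, d):
    """t(df) = value, p = value, d = value (d keeps its leading zero because it can exceed 1)."""
    return f"t({df}) = {t:.2f}, {format_p(p)}, d = {d:.2f}"


print(format_t_test(3.24, 58, 0.002, 0.82))
print(format_p(0.0004))
```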

For help structuring your methodology chapter, Tesify Write provides templates aligned with APA and other reporting standards. Before submission, run your dissertation through the Tesify Plagiarism Checker to ensure your methods descriptions are properly paraphrased.

Frequently Asked Questions

What is the difference between quantitative and qualitative research?

Quantitative research collects numerical data, tests hypotheses, and uses statistical analysis to seek generalisable results. Qualitative research collects non-numerical data (words, observations) and explores meaning, process, and context in depth. The choice depends on the research question: “how many/much/often” calls for quantitative methods; “how/why/what does it mean” calls for qualitative methods.

How do I know if my sample size is large enough?

Run a power analysis before data collection using a tool like G*Power (free). You need three inputs: an expected effect size (from the literature), a significance level (conventionally α = 0.05), and a desired power level (conventionally 0.80). The tool calculates the minimum sample size required. Fix the sample size in advance: repeatedly testing the data and adding participants until p drops below .05 (optional stopping) inflates the Type I error rate.

What is the difference between a t-test and ANOVA?

A t-test compares the means of two groups. ANOVA (Analysis of Variance) compares the means of three or more groups. Running multiple t-tests on more than two groups inflates the Type I error rate (familywise error). ANOVA controls this by testing all groups simultaneously. When ANOVA is significant, post-hoc tests (Tukey’s HSD, Bonferroni) identify which specific pairs of groups differ.
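The inflation is easy to quantify. With m tests each run at level α, the chance of at least one false positive is 1 − (1 − α)^m, and k groups require m = k(k − 1)/2 pairwise t-tests. The sketch below assumes the tests are independent, which pairwise tests sharing the same groups are not, so treat the numbers as an approximation; the Bonferroni correction shown simply divides α by m.

```python
def familywise_error(alpha, n_tests):
    """Chance of at least one false positive across n independent tests at level alpha."""
    return 1 - (1 - alpha) ** n_tests


for k_groups in (3, 4, 5):
    m = k_groups * (k_groups - 1) // 2  # number of pairwise t-tests
    print(f"{k_groups} groups: {m} t-tests, familywise error = "
          f"{familywise_error(0.05, m):.3f}, Bonferroni alpha = {0.05 / m:.4f}")
```

With only three groups, the chance of at least one spurious "significant" difference already rises from 5% to roughly 14%.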

What is a p-value and what does p < 0.05 mean?

A p-value is the probability of obtaining results at least as extreme as those observed, assuming the null hypothesis is true. A p-value below the significance threshold (typically 0.05) means data this extreme would be unlikely if the null hypothesis were true, which leads to rejection of the null hypothesis. Crucially, p < 0.05 does not prove the null hypothesis false, does not give the probability that it is true, and does not measure the size or importance of the effect. Always pair p-values with effect sizes.
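A quick simulation shows what the 0.05 threshold does and does not promise. In this standard-library sketch, every simulated study is drawn from a population where the null hypothesis is true (mean 0, known SD 1), and each runs a two-sided one-sample z-test. The design, sample size, and function name are illustrative assumptions. Roughly 5% of these studies still reach p < .05: the threshold controls the long-run false-positive rate, not the probability that any single null hypothesis is true.

```python
import random
from statistics import NormalDist


def null_false_positive_rate(n_studies=10_000, n=30, alpha=0.05, seed=1):
    """Simulate studies in which H0 is true and return the share with p < alpha.

    Each study draws n scores from a normal population with mean 0 and SD 1 (known)
    and runs a two-sided one-sample z-test of H0: mean = 0.
    """
    rng = random.Random(seed)
    z_dist = NormalDist()
    false_positives = 0
    for _ in range(n_studies):
        sample = [rng.gauss(0, 1) for _ in range(n)]
        z = (sum(sample) / n) / (1 / n ** 0.5)  # sample mean / standard error (sigma / sqrt(n))
        p = 2 * (1 - z_dist.cdf(abs(z)))       # two-sided p-value
        if p < alpha:
            false_positives += 1
    return false_positives / n_studies


print(f"Share of null studies with p < .05: {null_false_positive_rate():.3f}")
```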

What is Cronbach’s alpha and why does it matter?

Cronbach’s alpha (α) measures the internal consistency reliability of a multi-item scale: how well the items in a questionnaire collectively measure the same construct. Values normally fall between 0 and 1 (a negative α often signals items that were not reverse-scored); α ≥ .70 is the conventional minimum for social science research, and α ≥ .80 is preferred. If α is low, some items may be measuring a different construct than intended, or the scale may need more items.

Write Your Methods Chapter with Confidence

A well-executed quantitative methodology is the backbone of a credible dissertation. For complementary methodology guides, see our qualitative research methods guide and our mixed methods research overview. Use Tesify Write to draft and refine your methodology chapter, and explore our research proposal template for guidance on presenting your design before data collection begins. For Spanish-speaking students, tesify.es offers the same academic writing support in Spanish.
