Research Methodology: 5 Proven Tips for Reproducible Results

Research methodology determines whether your findings stand up to scrutiny — or quietly collapse the moment someone tries to replicate them. The reproducibility crisis has shaken fields from psychology to biomedicine: a landmark 2015 replication effort found that only 39% of psychological studies reproduced their original results (Open Science Collaboration, 2015). That’s not a marginal problem. That’s a structural one, and it almost always traces back to methodological decisions made early in a study’s design.

What separates a publishable, credible study from one that stalls in peer review? Rigorous, transparent research methodology — the kind that lets a stranger in another country reconstruct your process and arrive at the same conclusion. This article breaks down five evidence-based strategies that researchers, PhD candidates, and faculty can apply immediately to produce reproducible, high-integrity findings.

What Is Research Methodology? A Working Definition

Research methodology is the systematic framework governing study design, data collection, analysis, and reporting, documented in sufficient detail to permit independent replication. In practical terms, it is your study’s operating manual: it tells you, and everyone else, exactly what you did, why you did it that way, and how confident you can be in the results.

The distinction between methodology and methods trips up even experienced researchers. Methods are the specific tools you use (a survey, an fMRI scanner, a semi-structured interview guide). Methodology is the intellectual architecture that justifies why those tools are appropriate for your research question. Gary King’s Harvard course Gov 2001 articulates this distinction particularly well, and his framework for quantitative social science methods has shaped how a generation of researchers think about inference and causal identification.

Here’s where it gets interesting: most reproducibility failures aren’t caused by fraud. They’re caused by methodological decisions that seemed reasonable at the time — flexible stopping rules, underpowered samples, ambiguous operationalisations of constructs — that quietly compound into findings that don’t hold up. The five tips below target precisely those decision points.

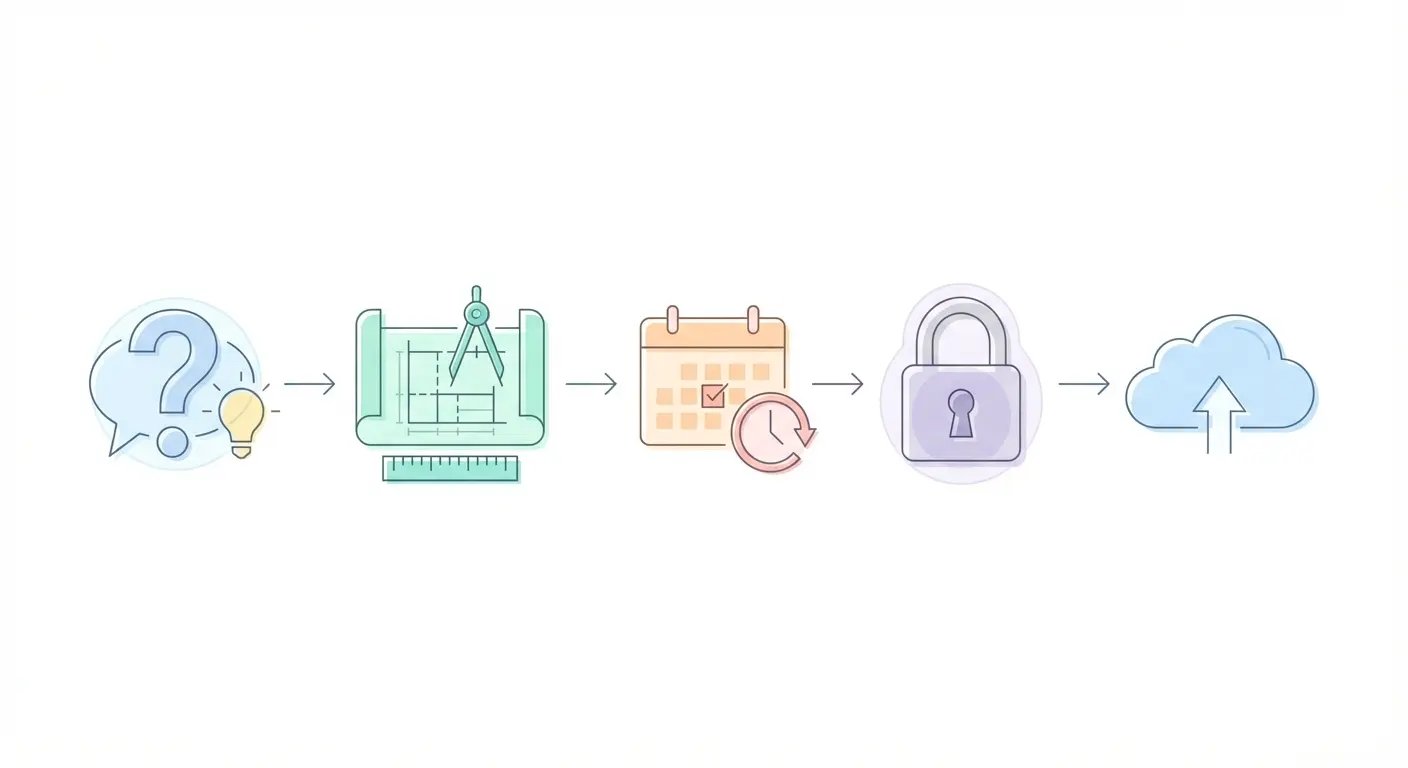

Tip 1 — Pre-Register Your Study Design Before Data Collection

Pre-registration is the single highest-leverage change you can make to your research methodology, and it costs nothing but planning time.

The logic is straightforward. When you commit your hypotheses, sample size, primary outcome measures, and analytic plan to a time-stamped public record before collecting any data, you rule out undetected hypothesising after results are known (HARKing) and reduce the incentive for selective outcome reporting. Both practices inflate false-positive rates substantially: Simmons et al. (2011) demonstrated that combining a few flexible analytic choices can push false-positive rates from a nominal 5% to over 60%.
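
To see how flexible outcome choice inflates error rates, here is a minimal Python simulation. It is a simplified illustration, not a reproduction of Simmons et al.’s procedure. It assumes there is no true effect, that the data are normally distributed with a known standard deviation of 1 (so a z-test is exact), and that the analyst measures several independent outcomes and reports whichever one crosses p < .05. The function names and default parameters are hypothetical.

```python
import random
from statistics import NormalDist, fmean

STANDARD_NORMAL = NormalDist()  # mean 0, sd 1


def two_sided_p(group_a, group_b, sigma=1.0):
    """Two-sided z-test p-value for a difference in means.

    Assumes the population standard deviation (sigma) is known, which holds
    here because the simulation generates the data itself.
    """
    se = sigma * (1 / len(group_a) + 1 / len(group_b)) ** 0.5
    z = (fmean(group_a) - fmean(group_b)) / se
    return 2 * (1 - STANDARD_NORMAL.cdf(abs(z)))


def false_positive_rate(n_outcomes, n_per_group=20, n_sims=4000, alpha=0.05, seed=2011):
    """Share of simulated null studies reported as 'significant' when the analyst
    may report whichever of n_outcomes independent outcomes has the smallest p."""
    rng = random.Random(seed)
    significant = 0
    for _ in range(n_sims):
        p_values = []
        for _ in range(n_outcomes):
            # Both groups come from the same N(0, 1) distribution: no true effect.
            group_a = [rng.gauss(0, 1) for _ in range(n_per_group)]
            group_b = [rng.gauss(0, 1) for _ in range(n_per_group)]
            p_values.append(two_sided_p(group_a, group_b))
        if min(p_values) < alpha:
            significant += 1
    return significant / n_sims


if __name__ == "__main__":
    print(f"One pre-registered outcome: {false_positive_rate(1):.3f}")
    print(f"Best of five outcomes:      {false_positive_rate(5):.3f}")
```

With one pre-specified outcome, the simulated rate should sit near the nominal 5%. Letting the analyst pick the best of five independent outcomes pushes it towards 1 − 0.95⁵ ≈ 23%. Simmons et al. reached higher rates by combining several such degrees of freedom at once.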

The Open Science Framework (OSF), maintained by the Center for Open Science, provides free pre-registration infrastructure used by researchers across disciplines. You can learn the basics through their OSF 101 orientation materials — the setup process takes under an hour for a straightforward study.

What to Include in a Pre-Registration

- Research question and hypotheses — stated in falsifiable, directional terms where possible

- Study design — experimental, quasi-experimental, observational, longitudinal; randomisation procedure if applicable

- Participants or data sources — inclusion/exclusion criteria, recruitment strategy, target sample size with power justification

- Measures and instruments — exact versions, scales, coding schemes, with citations

- Primary and secondary outcomes — specify which outcomes are confirmatory versus exploratory

- Analytic strategy — statistical tests, software, handling of missing data, multiple comparison corrections

- Deviations policy — how you’ll report any departures from the pre-registered plan

One counterintuitive point worth flagging: pre-registration doesn’t constrain exploratory analysis. It simply requires you to label it honestly. Post-hoc exploratory findings are scientifically valuable — they just need to be presented as hypothesis-generating rather than hypothesis-confirming. That transparency is what makes your research methodology credible.

The NIH’s Rigor and Reproducibility guidance applies pre-registration thinking to funded biomedical research. It requires grant applicants to address rigorous experimental design, relevant biological variables such as sex, and authentication of key resources.

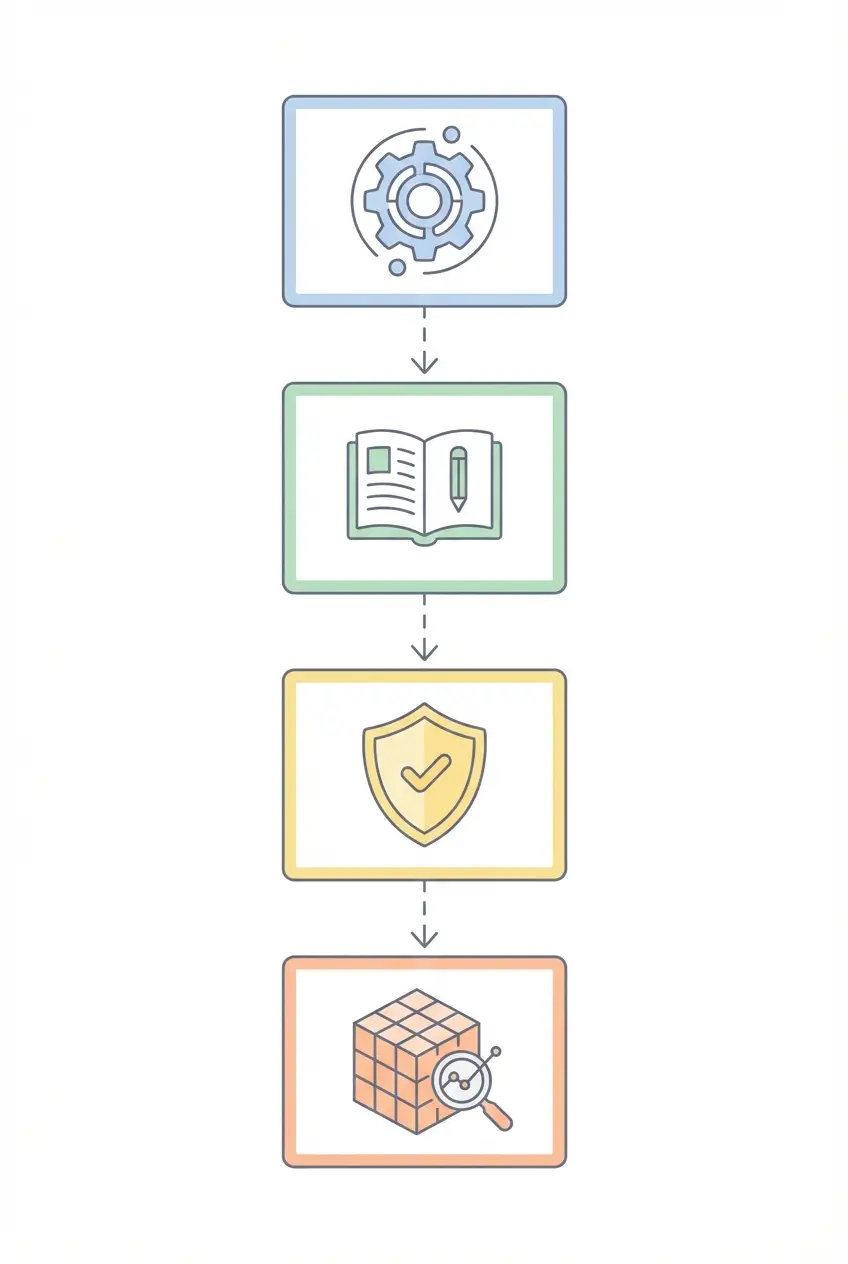

Tip 2 — Write a Protocol So Detailed a Stranger Could Replicate It

Ask yourself honestly: if a competent researcher in a different institution read your methods section, could they reproduce your study without emailing you a single question? For most published studies, the answer is no — and that’s a methodological problem, not a writing problem.

Protocol completeness is the backbone of reproducible research methodology. In a Nature survey of more than 1,500 researchers, over 70% reported having tried and failed to reproduce another scientist’s experiments, and unavailable methods and code were among the contributing factors respondents cited (Baker, 2016). The solution isn’t longer methods sections; it’s more precise ones.

Elements of a Complete Research Protocol

Procedural specificity: Don’t write “participants completed a questionnaire.” Write “participants completed the 21-item Beck Depression Inventory-II (BDI-II; Beck et al., 1996) on paper, in a quiet room, without time constraints, after a 10-minute acclimatisation period.” Every detail that could plausibly affect results deserves a sentence.

Instrument versions: Software updates, scale revisions, and changes to apparatus can all change results. Record the exact version of every instrument, including the statistical software package and its version number. “SPSS” is not sufficient. “IBM SPSS Statistics Version 29.0” is.
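
Recording versions by hand is error-prone. The minimal Python sketch below captures the interpreter version, the operating system, and the versions of named packages at analysis time, so they can be saved next to your results. The function name and the package list are hypothetical. For SPSS, Stata or R you would record the equivalent information from those tools instead; in R, `sessionInfo()` does this.

```python
import json
import platform
import sys
from importlib import metadata


def capture_environment(package_names):
    """Return interpreter, OS and package versions as a JSON-serialisable dict."""
    packages = {}
    for name in package_names:
        try:
            packages[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            packages[name] = None  # not installed: record that explicitly
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "os": platform.platform(),
        "executable": sys.executable,
        "packages": packages,
    }


if __name__ == "__main__":
    # Print the record; in a real pipeline, write it alongside each set of outputs.
    print(json.dumps(capture_environment(["numpy", "pandas", "scipy"]), indent=2))
```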

Decision rules: Document what happens when things go wrong. How will you handle a participant who withdraws mid-session? What’s your threshold for excluding an outlier? Making these decisions in advance, and documenting them in the protocol, is what distinguishes rigorous research methodology from post-hoc rationalisation.
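
Decision rules are easiest to follow, and to audit, when they are written as code before data collection. The Python sketch below is one example, and the rule itself is an assumption about what a protocol might specify. It applies a modified z-score rule based on the median and median absolute deviation (MAD), using the 3.5 cut-off suggested by Iglewicz and Hoaglin, and it returns the excluded values so they can be reported. The constant and function names are hypothetical, and your protocol may justify a different rule.

```python
from statistics import median

# Fixed in the protocol before any data are seen (hypothetical values).
MODIFIED_Z_CUTOFF = 3.5
MAD_SCALE = 0.6745  # makes the MAD comparable to a standard deviation for normal data


def apply_outlier_rule(values, cutoff=MODIFIED_Z_CUTOFF):
    """Split values into (kept, excluded) using the modified z-score rule."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # Rule cannot be applied (at least half the values equal the median):
        # keep everything and report this as a protocol-specified exception.
        return list(values), []
    kept, excluded = [], []
    for v in values:
        modified_z = MAD_SCALE * (v - med) / mad
        if abs(modified_z) > cutoff:
            excluded.append(v)
        else:
            kept.append(v)
    return kept, excluded


if __name__ == "__main__":
    reaction_times_ms = [512, 498, 530, 505, 521, 1890, 507, 515]
    kept, excluded = apply_outlier_rule(reaction_times_ms)
    print(f"excluded {len(excluded)} value(s): {excluded}")
```

Because the function returns the excluded values as well as the kept ones, the exclusions can be reported alongside the results rather than silently dropped.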

Data dictionary: Every variable in your dataset needs a name, definition, unit of measurement, possible range, and coding scheme. This is tedious. It’s also what makes your dataset reusable five years from now when someone wants to build on your work.
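
A data dictionary is most useful when it is machine-readable, because the same file can then check the data. The minimal Python sketch below assumes a dictionary with the fields listed above (name, definition, unit, range, coding scheme) and rows stored as plain dicts. The class name, the function name and the example variables are hypothetical. It treats a missing value as a problem; a fuller dictionary would also define missing-value codes.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Variable:
    """One data-dictionary entry."""
    name: str
    definition: str
    unit: str = ""
    min_value: Optional[float] = None
    max_value: Optional[float] = None
    codes: dict = field(default_factory=dict)  # allowed code -> label


def validate(rows, dictionary):
    """Return human-readable problems; an empty list means the rows conform."""
    problems = []
    known = {v.name for v in dictionary}
    for i, row in enumerate(rows):
        for col in row:
            if col not in known:
                problems.append(f"row {i}: '{col}' is not in the data dictionary")
        for var in dictionary:
            value = row.get(var.name)
            if value is None:
                problems.append(f"row {i}: missing value for '{var.name}'")
            elif var.codes and value not in var.codes:
                problems.append(f"row {i}: '{var.name}'={value!r} is not a defined code")
            elif var.min_value is not None and value < var.min_value:
                problems.append(f"row {i}: '{var.name}'={value} below {var.min_value}")
            elif var.max_value is not None and value > var.max_value:
                problems.append(f"row {i}: '{var.name}'={value} above {var.max_value}")
    return problems


DICTIONARY = [
    Variable("age", "Age at enrolment", unit="years", min_value=18, max_value=99),
    Variable("bdi_total", "BDI-II total score", unit="points", min_value=0, max_value=63),
    Variable("condition", "Randomised arm", codes={0: "control", 1: "intervention"}),
]

if __name__ == "__main__":
    rows = [
        {"age": 34, "bdi_total": 12, "condition": 1},
        {"age": 17, "bdi_total": 70, "condition": 2},
    ]
    for problem in validate(rows, DICTIONARY):
        print(problem)
```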

For PhD candidates especially, protocol documentation intersects directly with demonstrating the originality of your contribution. The strategies discussed in our guide on proving originality in doctoral dissertations show how transparent methodological documentation strengthens the case that your findings represent a genuine scholarly contribution — not just a repackaging of prior work.

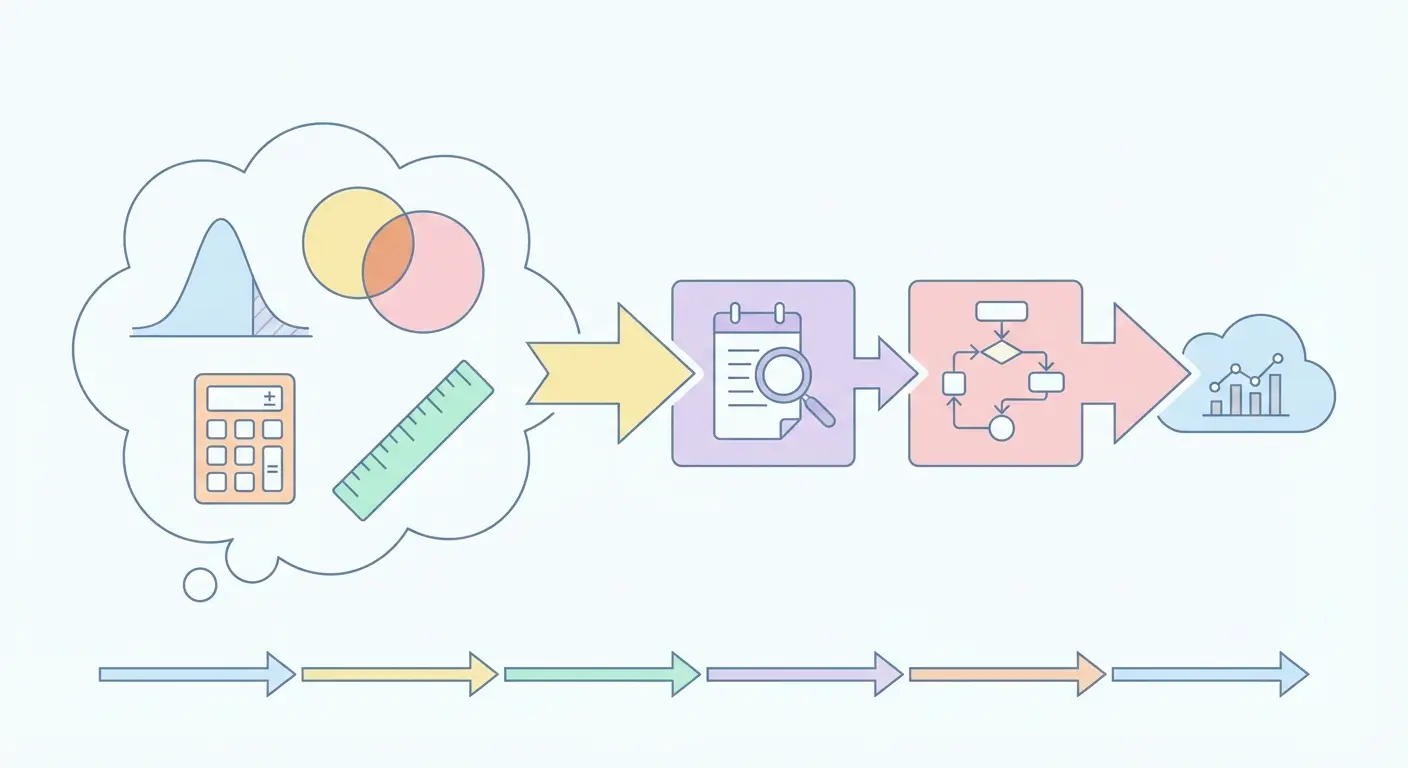

Tip 3 — Calculate Statistical Power and Sample Size Before You Begin

Underpowered studies are an under-appreciated driver of the reproducibility crisis, and one of the most fixable. A study with an insufficient sample size has a high probability of missing a true effect (low power). Paradoxically, it also has a lower probability that any statistically significant result it does find reflects a true effect rather than noise, and the effects it does detect tend to be exaggerated (Button et al., 2013).
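
The logic behind that paradox can be made concrete with the positive predictive value (PPV) formula used by Button et al. (2013). PPV is the probability that a significant finding reflects a true effect. It depends on power (1 − β), the significance level α, and the pre-study odds R that a tested effect is real. The Python sketch below transcribes that formula directly. The value of R in the example is an assumption chosen for illustration, and the function name is hypothetical.

```python
def positive_predictive_value(power, alpha, prior_odds):
    """PPV = (power * R) / (power * R + alpha), where R is the pre-study odds
    that a tested effect is real (formula as given by Button et al., 2013)."""
    true_positives = power * prior_odds
    return true_positives / (true_positives + alpha)


if __name__ == "__main__":
    # Assumed scenario: one real effect for every four null effects tested (R = 0.25).
    for power in (0.8, 0.5, 0.2):
        ppv = positive_predictive_value(power, alpha=0.05, prior_odds=0.25)
        print(f"power {power:.0%}: PPV = {ppv:.0%}")
```

Under these assumptions, dropping power from 80% to 20% lowers the PPV from 80% to 50%. In other words, half of the "significant" findings would be false positives.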

The standard convention, following Cohen (1988), is to design for 80% power: an 80% probability of detecting a true effect of the specified size at a given significance threshold. In practice, many published studies fall well below this, particularly in psychology and biomedicine. Button et al. (2013), analysing meta-analyses in neuroscience, estimated median statistical power in that field at roughly 8–31%. Szucs and Ioannidis (2017) likewise reported low power across the recent cognitive neuroscience and psychology literature.

How to Conduct an A Priori Power Analysis

- Specify your effect size — use prior literature, meta-analyses, or pilot data. Cohen’s d for mean differences, r for correlations, f² for regression. Resist the temptation to assume large effects; the literature consistently overestimates them.

- Set your alpha level — conventionally 0.05, though fields vary. Pre-register this decision.

- Choose your target power — 0.80 is conventional; 0.90 or 0.95 is defensible for high-stakes research.

- Run the calculation — G*Power, developed at Heinrich-Heine University Düsseldorf, is free, peer-reviewed, and handles over 50 statistical tests. It’s the standard tool in psychology and increasingly in other fields.

- Build in attrition — for longitudinal studies or clinical trials, inflate your target N by an estimated dropout rate. Reaching 80% power with your planned sample, then losing 30% of participants to attrition, leaves you substantially underpowered.

- Report the calculation fully — include effect size, alpha, power, and software version in your methods section. Reviewers and replicators need this information.
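
For a two-group comparison of means, the steps above can be sketched in Python using the standard normal approximation: n per group ≈ 2 × ((z(1 − α/2) + z(1 − β)) / d)². This is an approximation, not a replacement for G*Power. For d = 0.50, α = .05 and 80% power it gives 63 per group, whereas the exact t-test calculation gives 64. The attrition step divides by the expected retention rate (1 − dropout) rather than adding the dropout percentage, because adding it would under-recruit. The function names are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Uses the normal approximation; exact t-test tools such as G*Power give
    slightly larger numbers.
    """
    z = NormalDist()  # standard normal
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


def inflate_for_attrition(n_analysed, dropout_rate):
    """Number to recruit so that about n_analysed remain after the expected dropout."""
    if not 0 <= dropout_rate < 1:
        raise ValueError("dropout_rate must be in [0, 1)")
    # round() guards against floating-point noise such as 90.00000000000001.
    return math.ceil(round(n_analysed / (1 - dropout_rate), 9))


if __name__ == "__main__":
    for d in (0.2, 0.5, 0.8):
        n = n_per_group(d)
        print(f"d = {d}: analyse {n} per group, recruit {inflate_for_attrition(n, 0.30)} per group")
```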

What most researchers miss is that effect size specification is itself a methodological decision with major downstream consequences. Using a “medium” effect size (d = 0.50) as a default — common in psychology — produces dramatically different sample requirements than using an effect size derived from a rigorous meta-analysis of closely related studies. The effort invested in a proper literature review to anchor your power analysis pays dividends in credibility.

Planning and structuring your research workflow — including power analysis timelines — is considerably more tractable with systematic tools. Our resource on planning and structuring dissertations with intelligent tools covers how to organise the protocol, version control, and timeline elements that make a study reproducible from the outset.

Tip 4 — Manage Data Provenance With Rigorous Version Control

Data provenance — the documented history of where your data came from, how it was processed, and what transformations were applied — is the connective tissue of reproducible research. Without it, even a well-designed study becomes irreproducible the moment the lead researcher leaves the institution.

The problem is more common than it sounds. Studies of data availability, including Tedersoo et al. (2021) in Scientific Data, have found that the data behind published papers frequently cannot be obtained. Links may have rotted, file formats may be obsolete, or the data may never have been shared. The FAIR data principles (Findable, Accessible, Interoperable, Reusable) were developed specifically to address this (Wilkinson et al., 2016, Scientific Data).

Practical Data Provenance Standards

Raw data preservation: Never overwrite raw data files. Store them in a read-only format and directory from day one. All transformations happen on copies, with transformation scripts retained and dated.
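
The minimal Python sketch below assumes raw files live in a single directory. It records a SHA-256 checksum for each file in a manifest and then removes write permission, so that any later change to the raw data can be detected by re-hashing. The directory layout, the manifest name and the function names are hypothetical. File permissions are only a local safeguard; a repository deposit is what makes the archive durable.

```python
import hashlib
import json
import os
import stat
import tempfile
from pathlib import Path

MANIFEST = "MANIFEST.sha256.json"  # hypothetical manifest file name


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large raw files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def freeze_raw_data(raw_dir, manifest_name=MANIFEST):
    """Write a checksum manifest for every file in raw_dir, then make the files read-only."""
    raw_dir = Path(raw_dir)
    files = sorted(p for p in raw_dir.rglob("*") if p.is_file() and p.name != manifest_name)
    manifest = {str(p.relative_to(raw_dir)): sha256_of(p) for p in files}
    (raw_dir / manifest_name).write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    for p in files:
        # Read-only for owner, group and others.
        os.chmod(p, stat.S_IRUSR | stat.S_IRGRP | stat.S_IROTH)
    return manifest


def changed_files(raw_dir, manifest_name=MANIFEST):
    """Return files whose current checksum differs from the manifest, or that are missing."""
    raw_dir = Path(raw_dir)
    manifest = json.loads((raw_dir / manifest_name).read_text(encoding="utf-8"))
    return [
        rel_path
        for rel_path, expected in manifest.items()
        if not (raw_dir / rel_path).is_file() or sha256_of(raw_dir / rel_path) != expected
    ]


if __name__ == "__main__":
    demo_dir = Path(tempfile.mkdtemp())
    (demo_dir / "session_01.csv").write_text("id,score\n1,12\n", encoding="utf-8")
    print(freeze_raw_data(demo_dir))
    print("changed:", changed_files(demo_dir))
```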

Version control for analysis scripts: Git-based version control (via GitHub or GitLab) is standard in computational research. Each commit message should describe what changed and why. This creates an auditable record that goes far beyond what any methods section can convey.
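
Commit history is most useful when every output can be traced back to the commit that produced it. The minimal Python sketch below assumes the analysis runs inside a Git working copy with the `git` command on the PATH. It asks Git for the current commit hash (`git rev-parse HEAD`) and checks for uncommitted changes (`git status --porcelain`), so the result can be stored next to each output. The function name is hypothetical. It returns None when Git or a repository is unavailable.

```python
import subprocess


def git_provenance(repo_dir="."):
    """Return {'commit': <hash>, 'dirty': <bool>} for repo_dir, or None if unavailable."""
    try:
        commit = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            cwd=repo_dir, capture_output=True, text=True, check=True,
        ).stdout.strip()
        status = subprocess.run(
            ["git", "status", "--porcelain"],
            cwd=repo_dir, capture_output=True, text=True, check=True,
        ).stdout
    except (OSError, subprocess.CalledProcessError):
        # git not installed, directory missing, or not inside a repository with commits
        return None
    # Uncommitted changes mean the outputs may not match any recorded commit.
    return {"commit": commit, "dirty": bool(status.strip())}


if __name__ == "__main__":
    info = git_provenance()
    if info is None:
        print("Not a Git repository: results cannot be tied to a commit.")
    elif info["dirty"]:
        print(f"Warning: uncommitted changes on top of {info['commit'][:12]}")
    else:
        print(f"Results produced by commit {info['commit']}")
```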

Repository deposit: Deposit data and analysis code in a domain-appropriate repository — OSF for social and behavioural sciences, Zenodo or Figshare for cross-disciplinary work, UKDA for UK social science data, ICPSR for political and social research. Include a README that explains the directory structure, variable dictionary, and software requirements.

Citation of data sources: Every secondary dataset used in your research needs a formal citation with version number, access date, and persistent identifier (DOI or handle). This is increasingly enforced by journals and mirrors the citation standards applied to literature sources. For researchers managing complex citation workflows, the discussion of automated citation tools for academic work covers reference management practices that support traceable, reproducible citation records.

Codebook and metadata: Every dataset should be accompanied by a codebook specifying variable names, labels, value codes, units, and any data collection notes. Think of it as the data dictionary made public and permanent.

Tip 5 — Report Against Established Methodological Reporting Standards

The final barrier to reproducibility is reporting — specifically, the chronic underreporting of methodological detail in published articles. Researchers know what they did; they often assume readers can infer what wasn’t explicitly stated. That assumption is wrong, and reporting guidelines exist precisely to correct it.

The EQUATOR Network (Enhancing the Quality and Transparency of Health Research) maintains the largest repository of evidence-based reporting guidelines — over 500 at last count. The most widely used include:

Core Reporting Guidelines by Study Design

| Guideline | Study Design | Key Focus Areas | Example Endorsing Journals |
|---|---|---|---|
| CONSORT | Randomised controlled trials | Randomisation, blinding, allocation concealment, CONSORT flow diagram | JAMA, Lancet, BMJ, NEJM |
| STROBE | Observational studies (cohort, case-control, cross-sectional) | Selection bias, confounding, measurement, statistical methods | Epidemiology, PLOS Medicine |
| PRISMA | Systematic reviews and meta-analyses | Search strategy, inclusion criteria, PRISMA flow diagram, risk of bias | Cochrane, BMJ, Systematic Reviews |
| COREQ | Qualitative research (interviews and focus groups) | Researcher characteristics and reflexivity, study design, data analysis | Qualitative Health Research, Social Science & Medicine |
| ARRIVE | Animal research | Housing, husbandry, experimental design, statistical methods | PLOS Biology, Nature Methods |

Using a reporting guideline doesn’t mean mechanically ticking boxes. It means systematically working through every item and either reporting it or explicitly stating that it doesn’t apply and why. That deliberate engagement with a structured reporting framework is itself a signal of methodological maturity that peer reviewers — and journal editors — notice.
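
That "report it or explain why not" rule is simple enough to check mechanically before submission. The minimal Python sketch below assumes you keep your completed checklist as a list of items. Each item records either the manuscript location where it is reported or an explicit not-applicable reason. The class name, the function name and the item labels are illustrative placeholders, not the official wording or numbering of any guideline.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistItem:
    item: str                                     # guideline item, in your own shorthand
    reported_at: Optional[str] = None             # e.g. "Methods, para 3"
    not_applicable_because: Optional[str] = None  # explicit reason it does not apply


def _filled(text):
    """True if text is a non-empty, non-whitespace string."""
    return bool(text and text.strip())


def unresolved_items(checklist):
    """Items that are neither reported nor marked not applicable with a reason."""
    problems = []
    for entry in checklist:
        reported = _filled(entry.reported_at)
        excused = _filled(entry.not_applicable_because)
        if reported and excused:
            problems.append(f"{entry.item}: marked both reported and not applicable")
        elif not reported and not excused:
            problems.append(f"{entry.item}: not reported and no reason given")
    return problems


if __name__ == "__main__":
    checklist = [
        ChecklistItem("Randomisation method", reported_at="Methods, Randomisation"),
        ChecklistItem("Interim analyses", not_applicable_because="No interim analyses planned or run"),
        ChecklistItem("Sample size calculation"),
    ]
    for problem in unresolved_items(checklist):
        print(problem)
```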

The Crash Course Sociology series offers an accessible introduction to social science research methods. Its Research Methods episode (Crash Course Sociology #4) is a useful primer for researchers new to social science methodology.

Research Methodology Approaches: A Comparative Overview

Choosing between quantitative, qualitative, and mixed methods isn’t a philosophical preference — it’s a research design decision driven by your question, your epistemological position, and what kind of claims you need to make. Here’s how the primary approaches compare across the dimensions that matter most for reproducibility.

| Dimension | Quantitative | Qualitative | Mixed Methods |
|---|---|---|---|
| Epistemological stance | Post-positivist; objective reality measurable with error | Constructivist/interpretivist; reality socially constructed | Pragmatist; best method for the question |
| Reproducibility standard | Exact replication with same results (within sampling error) | Transferability; similar contexts yield comparable themes | Component-specific; quantitative arm replicable, qualitative arm transferable |
| Pre-registration suitability | High: hypotheses and analytic plan fully specifiable | Moderate: design and analysis approach pre-specifiable, hypotheses usually not | High for quantitative strand; moderate for qualitative strand |
| Key reporting guideline | CONSORT, STROBE, PRISMA | COREQ, SRQR, ENTREQ | GRAMMS (Good Reporting of A Mixed Methods Study) |
| Primary validity threat | Statistical conclusion validity; measurement error | Researcher bias; insufficient reflexivity | Integration failure; component mismatch |
| Data sharing norm | High: anonymised datasets typically shareable | Low to moderate: confidentiality constraints common | Mixed: quantitative data shareable; qualitative often restricted |

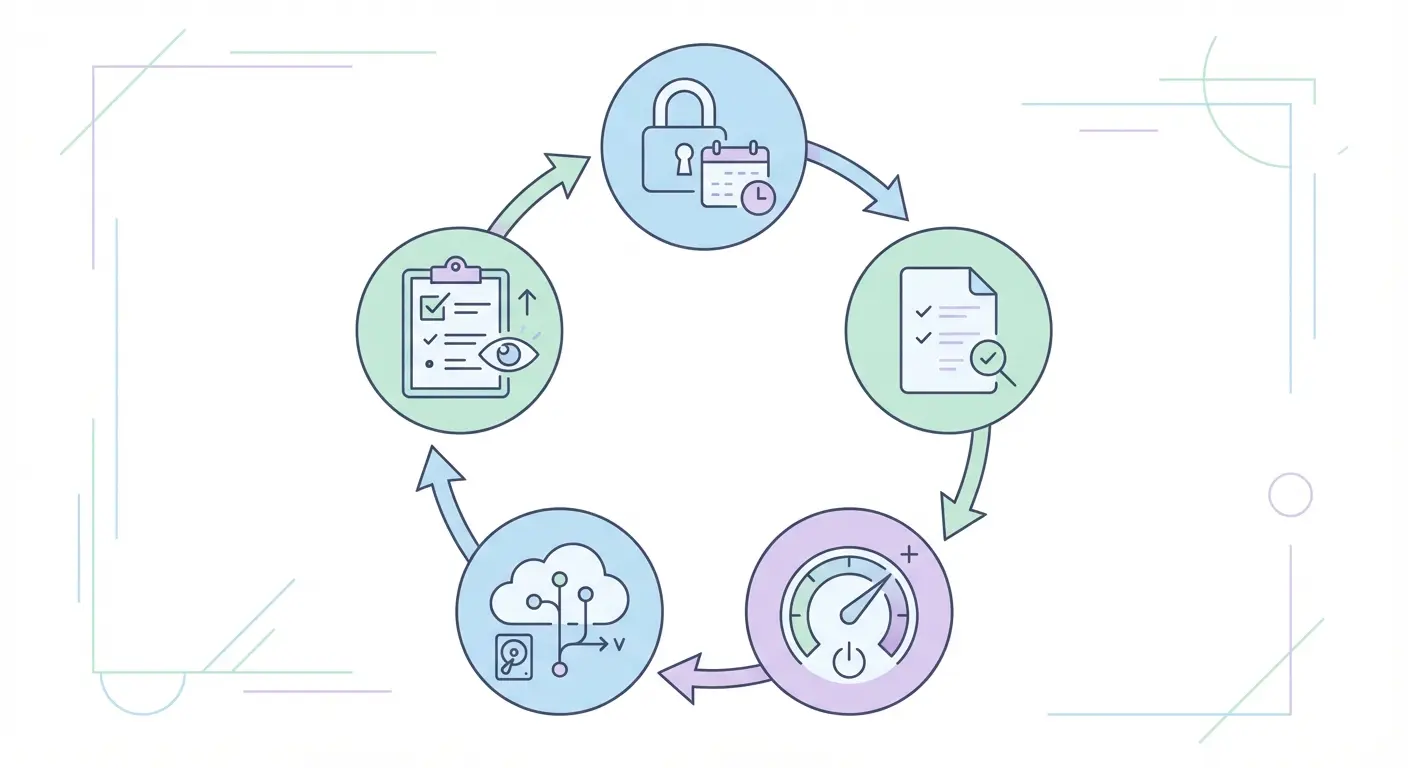

Practical Checklist for Reproducible Research Methodology

A checklist won’t replace methodological judgement — but it will stop you forgetting things at 11pm the night before submission. Fair warning: this takes genuine effort to complete properly. The items that feel tedious are usually the ones that matter most for replication.

Pre-Study Phase

- ☐ Research question specified in precise, answerable terms

- ☐ Hypotheses stated in directional, falsifiable form (quantitative) or analytic approach documented (qualitative)

- ☐ Study pre-registered on OSF, AsPredicted, or discipline-specific registry

- ☐ A priori power analysis conducted with effect size justification; target N determined

- ☐ Attrition estimated and sample size inflated accordingly

- ☐ All instruments identified with exact version numbers and citations

- ☐ Data dictionary drafted before data collection begins

- ☐ Data storage and sharing plan confirmed (FAIR principles)

- ☐ Ethics approval obtained and reference number documented

- ☐ Informed consent procedure documented in protocol

Data Collection Phase

- ☐ Raw data stored in read-only format immediately upon collection

- ☐ Any deviations from protocol documented in real time with date and rationale

- ☐ Inter-rater reliability assessed and documented if coding is involved

- ☐ Data entry or cleaning scripts saved with version numbers

- ☐ Blinding maintained where planned
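
For the inter-rater reliability item, a common statistic for two coders assigning categorical codes is Cohen's kappa. It corrects raw agreement for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e). The minimal Python sketch below assumes exactly two raters and nominal categories; other designs call for other coefficients, such as Krippendorff's alpha. The function name and example codes are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters coding the same items into nominal categories."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same, non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions, summed over categories.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if expected == 1:
        return float("nan")  # undefined (0/0): both raters used one identical category
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # 50 items coded yes/no: the raters agree on 20 "yes" and 15 "no".
    a = ["yes"] * 20 + ["yes"] * 5 + ["no"] * 10 + ["no"] * 15
    b = ["yes"] * 20 + ["no"] * 5 + ["yes"] * 10 + ["no"] * 15
    print(f"kappa = {cohens_kappa(a, b):.2f}")
```

In this example, observed agreement is 35/50 = 0.70. Rater A says "yes" 25 times and rater B 30 times, which gives a chance agreement of 0.50 and κ = 0.40.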

Analysis Phase

- ☐ Analysis conducted according to pre-registered analytic plan

- ☐ Any deviations from pre-registered plan documented and labelled as exploratory

- ☐ Software version and package versions recorded

- ☐ Analysis scripts commented and saved in version-controlled repository

- ☐ Effect sizes and confidence intervals reported for all primary outcomes

- ☐ Multiple comparison corrections applied where appropriate
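
For the multiple-comparison item, one widely used option is the Holm–Bonferroni step-down procedure. It controls the familywise error rate and is never less powerful than the plain Bonferroni correction. The minimal Python sketch below returns Holm-adjusted p-values. The choice of Holm is illustrative: false discovery rate methods such as Benjamini–Hochberg may suit exploratory analyses better. The function name is hypothetical.

```python
def holm_adjust(p_values):
    """Holm–Bonferroni adjusted p-values, returned in the original order.

    Reject hypothesis i at familywise level alpha if adjusted[i] <= alpha.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # Step-down multiplier (m - rank), capped at 1 and kept non-decreasing.
        candidate = min(1.0, (m - rank) * p_values[i])
        running_max = max(running_max, candidate)
        adjusted[i] = running_max
    return adjusted


if __name__ == "__main__":
    raw = [0.01, 0.04, 0.03, 0.005]
    for p, adj in zip(raw, holm_adjust(raw)):
        print(f"p = {p:.3f} -> Holm-adjusted {adj:.3f} -> {'reject' if adj <= 0.05 else 'retain'}")
```

For p-values of 0.01, 0.04, 0.03 and 0.005, the Holm-adjusted values are 0.03, 0.06, 0.06 and 0.02. At α = .05, only the first and fourth hypotheses are rejected. The 0.04 result no longer counts as significant, even though it would be unadjusted.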

Reporting Phase

- ☐ Appropriate reporting guideline selected and checklist completed

- ☐ Pre-registration link included in manuscript

- ☐ Data and code availability statement drafted

- ☐ All datasets deposited in appropriate repository with persistent identifier

- ☐ Full citation details for all instruments, datasets, and software provided

- ☐ Limitations section addresses threats to internal and external validity honestly

A PMC review of reproducibility progress (Schooler, 2014, PMCID: PMC5461896) identifies documentation completeness and open data sharing as the two interventions with the strongest evidence base for improving reproducibility rates across disciplines. This checklist operationalises both.

Frequently Asked Questions

What is research methodology and why does it matter for reproducibility?

Research methodology is the systematic framework governing study design, data collection, analysis, and reporting — documented in sufficient detail to permit independent replication. It matters for reproducibility because methodological decisions (sampling, measurement, analytic choices) are the primary source of variation between studies attempting to replicate the same finding. Transparent, pre-specified methodology dramatically reduces that variation and strengthens confidence in results.

What is the difference between quantitative and qualitative research methodology?

Quantitative research methodology uses numerical data and statistical analysis to test hypotheses and generalise findings, operating within a post-positivist epistemological framework. Qualitative methodology examines meaning, experience, and social context through non-numerical data (interviews, documents, observation), typically within constructivist or interpretivist frameworks. The choice between them depends on the research question: “how many” and “does X cause Y” questions call for quantitative approaches; “why” and “how do people experience” questions call for qualitative ones.

How do I choose the right research methodology for my study?

Start with your research question — the question itself usually implies a methodology. If you need to measure, test, or compare, quantitative methods apply. If you need to understand, interpret, or explore, qualitative methods apply. If the question demands both, mixed methods may be appropriate. Your epistemological assumptions, available resources, access to participants, and the existing evidence base all shape the final decision. Creswell and Creswell’s Research Design (5th ed., 2018) remains the standard reference for this decision process.

What is pre-registration and does it apply to qualitative research?

Pre-registration is the practice of documenting and publicly time-stamping your study’s hypotheses, design, and analytic plan before data collection begins — typically through platforms like the Open Science Framework (OSF) or AsPredicted. It’s most naturally applied to quantitative research, where hypotheses and analyses are fully specifiable in advance. Qualitative pre-registration is possible and increasingly encouraged: researchers document their analytic approach (e.g., thematic analysis, grounded theory), sampling strategy, and reflexive position without specifying hypotheses, since qualitative inquiry is exploratory by nature.

What are the main threats to internal validity in research methodology?

The classic threats to internal validity, identified by Campbell and Stanley (1963) and extended by Cook and Campbell (1979), include: history (external events during the study), maturation (participant changes over time), testing effects (repeated measurement effects), instrumentation changes, statistical regression to the mean, selection bias, and attrition. Experimental designs with randomisation, blinding, and control groups address most of these. Quasi-experimental and observational designs require careful analytic adjustments — matching, regression discontinuity, difference-in-differences — to manage the same threats.

How does sample size affect the reproducibility of research findings?

Sample size directly determines statistical power — the probability of detecting a true effect when it exists. Underpowered studies (those with insufficient sample sizes) are more likely to miss genuine effects and, counterintuitively, more likely to produce statistically significant results that don’t replicate, because any significant finding in a low-power study is more likely to be a false positive (Button et al., 2013, Nature Reviews Neuroscience). Conducting and reporting an a priori power analysis is the standard solution, using tools such as G*Power to determine the required sample size before data collection begins.

Build a Research Profile That Commands Credibility

Reproducible research methodology isn’t just about methodological hygiene — it’s about building a scholarly reputation that withstands scrutiny. Every pre-registration, every complete protocol, every shared dataset is a deposit in the credibility account that defines a research career.

If you found this resource useful, consider sharing it with your research group, department, or supervisor. University library guides, PhD cohort coordinators, and postgraduate research offices regularly curate methodological resources — this article is designed to be that kind of reference.

For PhD candidates working through the specific challenge of demonstrating methodological originality in a dissertation, the strategies in our guide on proving originality in doctoral dissertations extend what we’ve covered here into the specific demands of doctoral-level research. For those managing the citation infrastructure that makes research traceable and reproducible, the tools discussed in our resource on automated citation tools for academic work offer practical workflow solutions.

The Tesify platform supports researchers building rigorous, high-integrity academic work from the ground up. Explore how it can support your research methodology from protocol to publication.

References

Baker, M. (2016). 1,500 scientists lift the lid on reproducibility. Nature, 533(7604), 452–454. https://doi.org/10.1038/533452a

Button, K. S., Ioannidis, J. P. A., Mokrysz, C., Nosek, B. A., Flint, J., Robinson, E. S. J., & Munafò, M. R. (2013). Power failure: Why small sample size undermines the reliability of neuroscience. Nature Reviews Neuroscience, 14(5), 365–376. https://doi.
