Research Methodology: The Complete 2026 Guide

Most researchers make the same costly mistake: they choose a methodology after they’ve already started collecting data. It’s the academic equivalent of building a house and deciding on the foundation later. The result? Rejected proposals, failed viva examinations, and papers that reviewers tear apart in under two minutes.

Research methodology is the structural backbone of every credible academic study. Get it right, and your work stands up to peer scrutiny. Get it wrong, and no amount of elegant prose will save you. This guide walks you through every major design decision — systematically, with the kind of precision your university expects.

What Is Research Methodology?

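Here’s where most textbooks lose readers immediately: they conflate “research methodology” with “research methods,” treating them as interchangeable. They’re not. Research methods are the practical tools and procedures used to collect and analyse data, such as surveys, interviews, or statistical tests. Research methodology is the philosophical and theoretical framework that justifies why those methods were chosen.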

The distinction matters enormously at the doctoral level. A PhD examiner isn’t simply asking “what did you do?” — they’re asking “why was this the most appropriate way to investigate your question, given your epistemological position?” That’s a methodology question.

According to Creswell and Creswell (2023) in Research Design: Qualitative, Quantitative, and Mixed Methods Approaches, a well-constructed methodology section should demonstrate alignment between five elements: the worldview or paradigm, the research design, the methods, the theoretical lens, and the research problem itself. When these five elements are coherent, reviewers rarely challenge your methodological choices.

The USC Libraries Research Writing Guide describes methodology as requiring explicit justification of why the chosen approach is “the most appropriate and rigorous way to address the research problem” — a standard adopted by most Anglo-American research institutions.

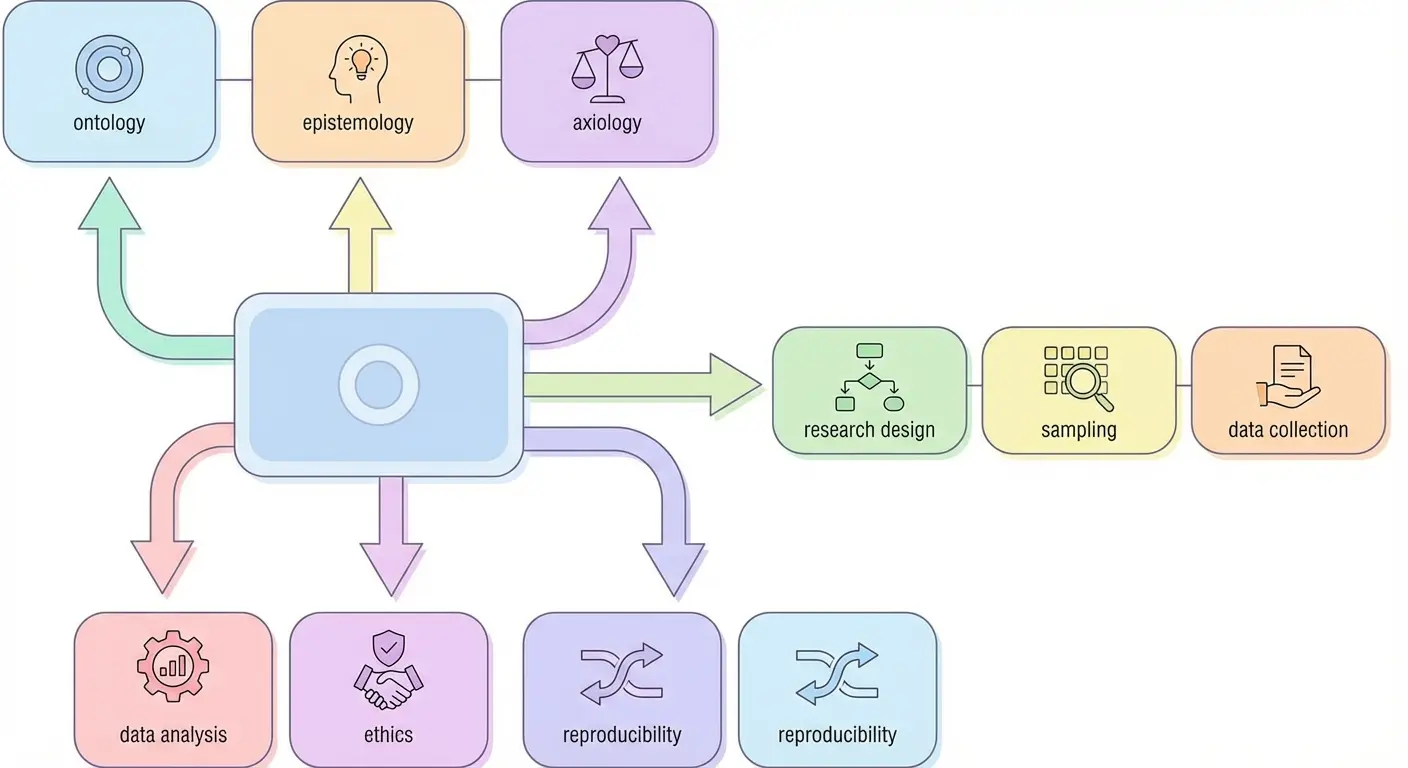

Research Paradigms: Ontology, Epistemology, and Axiology

Most PhD candidates who struggle with methodology chapters share one characteristic: they skipped the paradigm section because it felt abstract. That’s a mistake that surfaces later — usually at viva.

A research paradigm is the philosophical framework within which your entire study operates. It answers three foundational questions:

- Ontology: What is the nature of reality? Is there one objective truth, or multiple socially constructed realities?

- Epistemology: What counts as valid knowledge? Can a researcher stand outside the subject of study, or are they inevitably part of it?

- Axiology: What role do values play in research? Are they to be controlled and eliminated, or acknowledged and explored?

The four dominant paradigms in contemporary research are:

| Paradigm | Ontology | Epistemology | Typical Methods |
|---|---|---|---|
| Positivism | Single, objective reality | Observer independent | Surveys, experiments, RCTs |
| Interpretivism | Multiple, constructed realities | Researcher co-constructs meaning | Interviews, ethnography, grounded theory |
| Critical Realism | Real but theory-laden structures | Retroductive reasoning | Case studies, document analysis |
| Pragmatism | Contextually situated | What works best for the question | Mixed methods, action research |

One point most methodology guides don’t make clearly enough: your paradigm choice isn’t just philosophical window dressing. It constrains your entire analytical logic. A positivist study cannot validly draw on thematic analysis from interview data as its primary evidence base without a clear epistemological justification for that move.

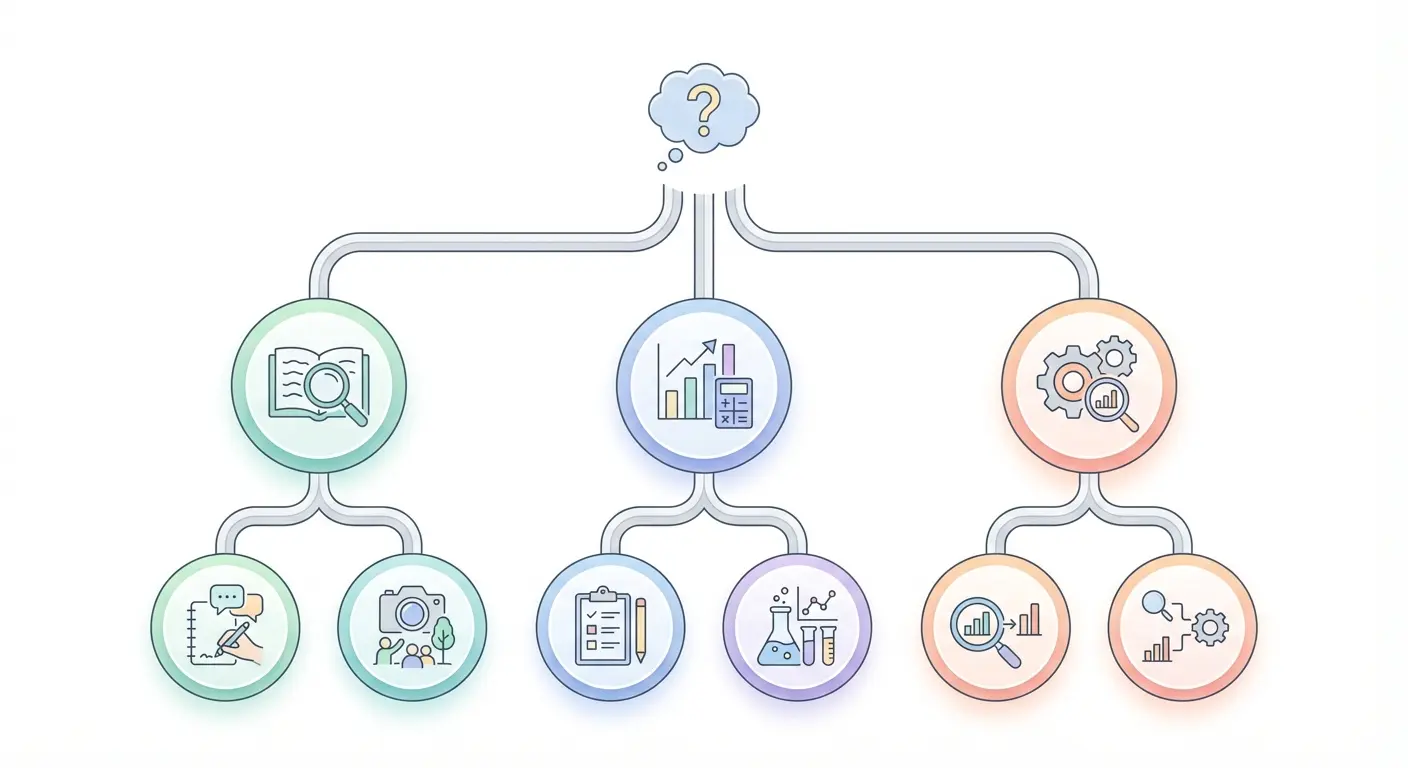

Qualitative, Quantitative, and Mixed Methods Designs

The classic “qual vs. quant” debate has largely been resolved in academic practice — the more important question is which approach best fits your research question. Still, the distinctions carry real methodological weight.

The UC Berkeley Library Research Methods guide offers a useful framing: quantitative research seeks to measure, count, and test hypotheses; qualitative research seeks to interpret, understand, and explore meaning; mixed methods combines both to address questions that neither approach alone can fully answer.

Quantitative Research Methodology

Quantitative designs operationalise variables, test hypotheses using inferential statistics, and aim for findings that generalise across populations. Key design types include experimental, quasi-experimental, cross-sectional, and longitudinal survey designs.

Sample size is one of the most mishandled elements in quantitative work. A statistically underpowered study isn’t just a minor flaw: it leaves non-significant results uninterpretable and makes any significant effect it does find more likely to be overestimated. The conventional minimum in most health and social science fields is 80% statistical power at α = .05, calculated before data collection begins (Cohen, 1992). Tools like G*Power (Heinrich Heine University Düsseldorf) and OpenEpi make this calculation accessible, so there’s no excuse for skipping it.

Qualitative Research Methodology

Qualitative designs prioritise depth over breadth. Here, rigour isn’t measured by sample size but by the quality of data saturation, reflexivity, and transferability of findings. Lincoln and Guba’s (1985) criteria — credibility, transferability, dependability, and confirmability — remain the gold standard for evaluating qualitative trustworthiness.

Mixed Methods Research Methodology

Mixed methods designs gained significant institutional recognition after Creswell and Plano Clark’s foundational 2011 text and have been formalised through the Journal of Mixed Methods Research. The three dominant designs are explanatory sequential, exploratory sequential, and convergent parallel — and each carries specific implications for how you sequence data collection and analysis phases.

Core Research Design Types Explained

Choosing between a case study and a grounded theory isn’t arbitrary — each design carries embedded assumptions about what kind of knowledge is being produced and how it should be validated.

Here are the designs most frequently examined in doctoral methodology chapters:

| Design | Paradigm Fit | Primary Purpose | Key Theorists |
|---|---|---|---|
| Grounded Theory | Interpretivist | Theory generation from data | Glaser & Strauss, Charmaz |
| Phenomenology | Interpretivist | Lived experience of a phenomenon | Husserl, van Manen, Moustakas |
| Case Study | Pragmatist / Critical Realist | In-depth contextual understanding | Yin, Stake, Merriam |
| Ethnography | Interpretivist | Cultural and social practice | Geertz, Hammersley, Atkinson |
| Experimental | Positivist | Causal inference, hypothesis testing | Campbell & Stanley, Shadish |
| Action Research | Pragmatist | Practical improvement through cycles | Lewin, Kemmis, McNiff |

What most methodology chapters miss is an explicit rationale for rejection — not just arguing why you chose your design, but briefly explaining why the obvious alternatives were less appropriate. A PhD examiner marking your chapter as “not fully justified” is almost always signalling this gap.

Sampling Strategies and Sample Size Determination

Sampling is one of those methodology decisions where the gap between what’s taught in undergraduate methods courses and what’s actually acceptable in doctoral research is surprisingly wide.

The fundamental principle: your sampling strategy must match your research design’s underlying logic. Probability sampling (random, stratified, cluster) suits quantitative designs where statistical generalisation is the goal. Non-probability sampling (purposive, snowball, theoretical) suits qualitative designs where analytical generalisation is the aim.
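
To make the probability-sampling side of this concrete, here is a minimal Python sketch of a proportionally allocated stratified random sample. The function name `stratified_sample`, the made-up population of 100 students, and the fixed seed are all illustrative assumptions, not part of any cited guidance; real studies would draw from an actual sampling frame.

```python
import random
from collections import Counter, defaultdict


def stratified_sample(units, stratum_of, total_n, seed=0):
    """Draw a stratified random sample with proportional allocation.

    units      : list of sampling units (the sampling frame)
    stratum_of : function mapping a unit to its stratum label
    total_n    : target overall sample size (rounding may shift it slightly)
    seed       : fixed seed so the draw can be reproduced and reported
    """
    rng = random.Random(seed)

    # Group the frame by stratum.
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)

    sample = []
    for label in sorted(strata):
        members = strata[label]
        # Proportional allocation: each stratum's share of the sample
        # mirrors its share of the frame (at least one unit per stratum).
        k = max(1, round(total_n * len(members) / len(units)))
        k = min(k, len(members))
        sample.extend(rng.sample(members, k))  # sampling without replacement
    return sample


# Hypothetical frame: 60 undergraduates, 30 master's, 10 doctoral students.
population = (
    [(i, "undergraduate") for i in range(60)]
    + [(i, "masters") for i in range(60, 90)]
    + [(i, "doctoral") for i in range(90, 100)]
)
sample = stratified_sample(population, stratum_of=lambda u: u[1], total_n=20, seed=42)
counts = Counter(level for _, level in sample)
print(dict(counts))
```

With this frame the allocation works out to 12, 6, and 2 units per stratum. Recording the seed alongside the sampling frame is one simple way to make the draw auditable later.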

Calculating Sample Size for Quantitative Studies

For quantitative work, the sequence is non-negotiable:

- Specify the effect size: What magnitude of difference or relationship are you trying to detect? Use prior literature or a conservative estimate (Cohen’s d = 0.5 is “medium” in most social science contexts).

- Set significance level (α): Conventional threshold is α = .05, though some fields require .01.

- Set desired power (1 − β): 0.80 is the standard minimum; 0.90 is preferred for confirmatory research.

- Run the power analysis: Use G*Power or OpenEpi before data collection begins, not after.

- Account for attrition: Add 10–20% to the calculated N to compensate for dropout, incomplete responses, or data exclusions.
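
The steps above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a replacement for G*Power or OpenEpi: it assumes a two-sided comparison of two independent group means and uses the common normal approximation, n per group ≈ 2 × ((z for 1 − α/2 + z for power) / d)². The function names `n_per_group` and `inflate_for_attrition` are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Uses the normal approximation, so exact t-based tools can return
    a slightly larger number.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_power = NormalDist().inv_cdf(power)          # quantile for 1 - beta
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


def inflate_for_attrition(n, attrition_rate):
    """Add a percentage to n to cover expected dropout (e.g. 0.15 for 15%)."""
    return math.ceil(n * (1 + attrition_rate))


n = n_per_group(0.5)                       # medium effect, alpha = .05, power = .80
n_recruit = inflate_for_attrition(n, 0.15)
print(n, n_recruit)                        # prints: 63 73
```

Because the approximation ignores the t distribution's heavier tails, treat the result as a sanity check on the figure your power-analysis software reports rather than as the number to put in a proposal.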

If power analysis is new to you, the StatQuest YouTube explanation — Power Analysis, Clearly Explained — is genuinely one of the clearest resources available, without the jargon overload of most textbook treatments.

Determining Sample Size for Qualitative Studies

Qualitative sample size is guided by theoretical saturation: the point at which new data no longer generates new categories or insights. Guest, Bunce, and Johnson’s (2006) empirical study found that saturation occurred within the first 12 interviews in a relatively homogeneous sample, with the main overarching themes already present after about six. More heterogeneous populations generally need larger samples, and figures of 20–30 interviews are often suggested. These numbers are widely cited, though actual saturation varies significantly by topic complexity and data richness.
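
One way to document saturation rather than simply assert it is to log which codes each interview contributes. The sketch below is a hypothetical illustration: the function `saturation_point`, the stopping rule of three consecutive interviews with no new codes, and the toy code labels are all assumptions, not a procedure taken from Guest et al.

```python
def saturation_point(codes_per_interview, run_length=3):
    """Return the 1-based index of the last interview that added a new code,
    once `run_length` consecutive later interviews have added nothing new.

    Returns None if that stopping rule has not yet been met.
    """
    seen = set()
    last_new = 0
    for i, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        if new_codes:
            seen |= new_codes
            last_new = i
        elif i - last_new >= run_length:
            return last_new
    return None


# Hypothetical coding log: one set of code labels per interview, in order.
interviews = [
    {"workload", "autonomy"},
    {"autonomy", "supervision"},
    {"supervision"},
    {"workload", "funding"},
    {"funding"},
    {"workload"},
    {"autonomy"},
]
print(saturation_point(interviews))  # prints: 4
```

A log like this does not prove saturation, since it depends on how codes are defined, but it gives examiners a transparent basis for the claim.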

Data Collection Instruments and Validity

An instrument that hasn’t been validated is a liability, not an asset. This is true regardless of whether you’re designing a Likert-scale survey, a semi-structured interview guide, or an observational coding scheme.

Validity in research methodology has four dimensions that examiners and reviewers expect you to address:

- Content validity: Does the instrument adequately cover the theoretical construct? Established through expert review panels and systematic content validity ratios (CVR).

- Construct validity: Does it actually measure what it claims to measure? Assessed through confirmatory factor analysis (CFA) or convergent/discriminant validity testing.

- Criterion validity: Does performance on the instrument correlate with an established external criterion? Includes concurrent and predictive validity.

- Face validity: Does the instrument appear to measure what it intends to, from the respondent’s perspective? Weakest form of validity, but still reported.
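
For the content validity ratio mentioned above, the usual formulation is Lawshe's: CVR = (n_e − N/2) / (N/2), where n_e is the number of panel experts rating an item “essential” and N is the panel size. A minimal Python sketch follows; the function name and the example panel of ten experts are illustrative assumptions.

```python
def content_validity_ratio(n_essential, n_experts):
    """Lawshe's CVR for one item: ranges from -1 (no expert rates it
    essential) through 0 (exactly half do) to +1 (all do)."""
    if n_experts <= 0 or not 0 <= n_essential <= n_experts:
        raise ValueError("need 0 <= n_essential <= n_experts and n_experts > 0")
    half = n_experts / 2
    return (n_essential - half) / half


# Hypothetical panel: 8 of 10 experts rate the item "essential".
print(content_validity_ratio(8, 10))  # prints: 0.6
```

Whether a given CVR is high enough to retain an item depends on panel size, so check the value against a published critical-value table for your N rather than a fixed cut-off.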

For qualitative work, the parallel framework uses Lincoln and Guba’s (1985) trustworthiness criteria: member checking for credibility, thick description for transferability, audit trails for dependability, and reflexivity statements for confirmability.

One area frequently underreported: pilot testing. Running your instrument with a small sample (n = 5–10 for qualitative; n = 20–30 for quantitative) before main data collection isn’t optional — it’s a quality assurance standard that reviewers now routinely check for. When planning your dissertation’s data instruments and timeline, tools discussed in our guide on planning and structuring a dissertation with intelligent tools can help you build instrument development milestones into your research protocol systematically.

Research Ethics, Integrity, and IRB Standards

Research ethics isn’t a box-ticking exercise — it’s a substantive component of methodological rigour that shapes every stage of your study design.

The three foundational principles from the Belmont Report (1979) are respect for persons (autonomy and informed consent), beneficence (maximising benefit, minimising harm), and justice (fair distribution of research burdens and benefits). They remain the cornerstone of IRB review in the US, and closely related principles underpin ethics committee review in the UK, Australia, Canada, and Ireland, each of which works from its own national framework.

What’s changed in 2025–2026 is the scope of ethics review. Three areas have received significant institutional attention:

- AI-assisted data collection and analysis: Many IRBs now require explicit disclosure if AI tools were used in coding qualitative data, screening participants, or generating synthetic datasets.

- Secondary data use: Using datasets from platforms like Twitter/X, Reddit, or commercial data brokers now routinely triggers ethics review, even when data is technically “public.”

- Participant anonymisation in qualitative research: With small purposive samples, full anonymisation is sometimes impossible — and ethics frameworks now require active discussion of this limitation rather than a blanket claim of anonymity.

Academic integrity extends beyond participant protection to research reporting. Researchers working on originality claims and contribution framing will find the evidence-based strategies in our piece on proving originality in doctoral dissertations directly applicable to the ethics of intellectual contribution — specifically around how you situate your work relative to existing scholarship without misrepresentation.

Reproducibility and Open Science in 2026

The replication crisis of the 2010s permanently changed how journals and institutions evaluate methodological quality. What was once considered good practice — pre-registration, data sharing, transparent reporting — is increasingly a publication requirement.

A 2024 survey published in Frontiers in Computer Science found that reproducible research policies vary widely across scientific computing journals, but the trend toward mandatory data and code sharing is accelerating, with 64% of surveyed journals having adopted some form of reproducibility policy by 2024, up from under 30% in 2018.

Practical reproducibility standards now expected in many disciplines include:

- Pre-registration of hypotheses and analysis plans on OSF (Open Science Framework) or AsPredicted before data collection

- FAIR data principles (Findable, Accessible, Interoperable, Reusable) for dataset deposition

- Registered Reports format, where peer review occurs before data collection, reducing publication bias

- Full reporting of all statistical tests run, including non-significant results, to prevent selective reporting

Nature Methods published updated guidance in 2025 on reporting methods for reusability, emphasising that method sections must provide sufficient detail for independent replication — a standard that now applies not just to laboratory science but to computational social science and qualitative research protocols alike.

Proper citation management is a direct component of reproducibility. When your references are formatted incorrectly or sources are incorrectly attributed, it undermines confidence in your entire study. For researchers working within German and European academic systems, our resource on automatic citation tools for academic work covers how to maintain citation accuracy across large reference lists — a practical necessity for any researcher managing 200+ sources.

How to Write the Methodology Chapter

The methodology chapter is the section examiners use to decide how much weight your findings can bear. It’s the lens through which all subsequent chapters are judged.

Here’s a standard architecture of the kind PhD supervisors at Russell Group, Ivy League, Group of Eight, and U15 universities commonly recommend:

- Chapter introduction (1 paragraph): Briefly orient the reader to what this chapter covers and why — don’t just say “this chapter describes the methodology.” State what methodological approach you took and why it was appropriate.

- Research philosophy: State your paradigmatic position (positivism, interpretivism, etc.) and justify it in relation to your research questions. Two to three paragraphs, cited to foundational methodology texts.

- Research approach: Inductive, deductive, or abductive reasoning? Each has a different logic of inference that connects to your design choices.

- Research design: Experimental, case study, survey, ethnography, etc. — with full justification.

- Research strategy: Specific method of inquiry within your design.

- Time horizon: Cross-sectional vs. longitudinal — and why this fits your research question.

- Sampling strategy: Who, how many, and how selected — with power analysis or saturation criteria.

- Data collection instruments: How developed, validated, and piloted.

- Data analysis procedures: Precisely how you will analyse the data — software, statistical tests, coding frameworks, analytical frameworks.

- Validity and reliability / trustworthiness: How rigour was assured throughout.

- Ethical considerations: IRB/ethics committee approval, consent, anonymisation, data storage.

- Limitations: Honest acknowledgment of design limitations and their implications.

If you’re at the proposal stage, the Grad Coach’s video guide on how to write a research proposal for a dissertation or thesis offers a clear, worked example of how methodology reasoning is framed at proposal stage versus the full dissertation chapter.

Practical Research Methodology Checklist

Use this checklist before submitting your methodology chapter or research proposal. Each item corresponds to a criterion that examiners and reviewers commonly cite in revision requests.

Research Methodology Pre-Submission Checklist

Philosophical Foundation

- ☐ Research paradigm explicitly named and defined (positivism / interpretivism / critical realism / pragmatism)

- ☐ Ontological and epistemological positions stated with citations

- ☐ Axiology addressed (value-laden or value-free stance)

Research Design

- ☐ Design type identified and justified in relation to research questions

- ☐ Alternative designs considered and reasons for rejection provided

- ☐ Approach (inductive / deductive / abductive) stated and justified

- ☐ Time horizon (cross-sectional / longitudinal) justified

Sampling

- ☐ Sampling strategy named and justified (probability vs. non-probability)

- ☐ Sample size calculation documented (power analysis or saturation criteria)

- ☐ Inclusion and exclusion criteria clearly defined

- ☐ Attrition rate accounted for in quantitative studies

Instruments and Data Collection

- ☐ Instruments described in full detail (construction, validation, sources)

- ☐ Pilot testing documented

- ☐ Data collection procedures replicable as described

Analysis

- ☐ Analysis procedures specified (software, statistical tests, coding approach)

- ☐ Inter-rater reliability addressed for coded data

- ☐ All planned analyses pre-registered or fully described

Ethics and Integrity

- ☐ Ethics approval obtained and referenced (IRB / ethics committee reference number)

- ☐ Informed consent procedures described

- ☐ Data storage and retention protocols documented

- ☐ AI tool use disclosed if applicable

Rigour and Reproducibility

- ☐ Validity / trustworthiness criteria addressed

- ☐ Limitations acknowledged with implications discussed

- ☐ Pre-registration link provided if applicable

- ☐ Data and materials available for sharing (FAIR principles)

Frequently Asked Questions

What is the difference between research methodology and research methods?

Research methodology is the philosophical and theoretical framework that justifies why specific research methods were chosen — it encompasses paradigm, design logic, and epistemological assumptions. Research methods are the practical tools and procedures used to collect and analyse data, such as surveys, interviews, or statistical tests. Think of methodology as the strategy and methods as the tactics.

What are the main types of research methodology?

The main types are quantitative (hypothesis testing, numerical data, statistical analysis), qualitative (exploratory, interpretive, non-numerical data), and mixed methods (combining both). Within these broad categories, specific designs include experimental, case study, grounded theory, phenomenology, ethnography, survey, and action research — each suited to different research questions and epistemological positions.

How do I choose the right research methodology for my study?

Start with your research question — it drives every methodology decision. If you’re testing a hypothesis or measuring relationships between variables, a quantitative approach is likely appropriate. If you’re exploring meaning, experience, or social processes, qualitative designs fit better. If your question has both dimensions, mixed methods may be warranted. Then align your philosophical position (paradigm) with your design choice to ensure internal coherence across the methodology chapter.

How long should a dissertation methodology chapter be?

For a doctoral dissertation, the methodology chapter typically runs 8,000–12,000 words, representing roughly 15–20% of the total word count. Master’s dissertations usually require 3,000–5,000 words for methodology. The length should be determined by the complexity of the design and the level of justification required — not by arbitrary targets. A quantitative RCT or a multi-phase mixed methods study will require more space than a straightforward cross-sectional survey.

What is research validity and how is it assessed in methodology?

Research validity refers to the accuracy and credibility of your study’s findings and instruments. In quantitative research, the key dimensions are internal validity (whether observed effects are actually caused by your independent variable), external validity (generalisability), construct validity (whether instruments measure the intended constructs), and statistical conclusion validity. In qualitative research, the parallel concept is trustworthiness, assessed through Lincoln and Guba’s criteria: credibility, transferability, dependability, and confirmability.

Is pre-registration required for research methodology in 2026?

Pre-registration is not universally required, but it is increasingly expected in psychology, medicine, education, and social sciences — particularly for confirmatory quantitative studies. Many journals now offer a Registered Reports format where pre-registration is mandatory. Even where not required, pre-registration via OSF or AsPredicted strengthens your methodology’s credibility by demonstrating that hypotheses and analysis plans were specified in advance of data collection.

Build a Methodology That Holds Up Under Scrutiny

Methodological weakness is among the most common reasons for doctoral revisions, paper rejections, and grant proposal failures. The frameworks and checklists in this guide reflect widely accepted practice across Anglo-American research institutions, but applying them to your specific study requires careful, systematic work.

If you’re working on a dissertation or research proposal, explore how intelligent planning tools can help you structure your research design, track your literature review, and maintain methodological consistency across chapters:

- Planning and structuring a dissertation with intelligent tools — systematic project management for your research design workflow

- Proving originality in doctoral dissertations — how to frame your theoretical contribution and research gap

- Automatic citation tools for academic work — maintaining referencing accuracy throughout your study

Share this guide with fellow researchers and PhD candidates — and if you work in a university library or research centre, we welcome you to link to it as a reference resource.

Key References

- Belmont Report. (1979). Ethical principles and guidelines for the protection of human subjects of research. National Commission for the Protection of Human Subjects of Biomedical and Behavioral Research.

- Charmaz, K. (2014). Constructing grounded theory (2nd ed.). Sage.

- Cohen, J. (1992). A power primer. Psychological Bulletin, 112(1), 155–159. https://doi.org/10.1037/0033-2909.112.1.155

- Creswell, J. W., & Creswell, J. D. (2023). Research design: Qualitative, quantitative, and mixed methods approaches (6th ed.). Sage.

- Creswell, J. W., & Plano Clark, V. L. (2011). Designing and conducting mixed methods research (2nd ed.). Sage.

- Frontiers in Computer Science. (2024). Reproducible research policies and software/data management in scientific computing journals. Frontiers in Computer Science. https://www.frontiersin.org/articles/10.3389/fcomp.2024.1491823/full

- Guest, G., Bunce, A., & Johnson, L. (2006). How many interviews are enough? An experiment with data saturation and variability. Field Methods, 18(1), 59–82.

- Lincoln, Y. S., & Guba, E. G. (1985). Naturalistic inquiry. Sage.

- Nature Methods. (2025). Reporting methods for reusability. Nature Methods. https://www.nature.com/articles/s41592-025-02615-4
