Systematic Literature Review: The Complete 2026 Guide

Most researchers treat a systematic literature review as a box-ticking exercise — a hurdle before the “real” research begins. That’s a costly misunderstanding. A well-executed systematic literature review isn’t just a summary of what exists; it’s a methodologically rigorous research output in its own right, one that can define entire fields, redirect funding priorities, and earn thousands of citations on its own merits. Research methodology and citation standards for higher education are not peripheral concerns here — they are the backbone of every credible review.

The problem is that most guides stop at “define your question and search some databases.” The gap between that advice and actually producing a review that meets PRISMA 2020 standards, survives peer review, and holds up under scrutiny from a doctoral committee is enormous. This guide closes that gap.

What Is a Systematic Literature Review?

The definition matters because it sets systematic reviews apart from almost every other academic document a researcher produces. Your undergraduate dissertation literature review was almost certainly a narrative review — you read what you found, cited what supported your argument, and moved on. Nothing wrong with that for its purpose. But a systematic review holds itself to a different standard entirely.

The Cochrane Collaboration, which has arguably done more to formalise systematic review methodology than any other institution, defines the process as one that “uses explicit, systematic methods that are selected with a view to minimising bias, thus providing more reliable findings from which conclusions can be drawn and decisions made” (Cochrane Handbook for Systematic Reviews of Interventions). That phrase — minimising bias — is the entire philosophical engine of the method.

Here’s what most people miss about systematic reviews: the process of not finding evidence is itself a finding. A well-conducted SLR that concludes “there is insufficient evidence to support X” contributes enormously to a field. It tells funders where to invest, tells clinicians where to proceed with caution, and tells future researchers exactly which gap to fill.

Systematic reviews are used across disciplines — from clinical medicine and public health (where Cochrane and Campbell Collaboration reviews are regarded as the gold standard) to education research, social policy, environmental science, and increasingly, business and management studies. The method is domain-agnostic; only the specific tools and quality appraisal instruments shift between fields.

SLR vs. Narrative, Scoping, and Meta-Analysis

Choosing the wrong review type is one of the most common — and most damaging — errors a researcher makes early in a project. Doctoral supervisors regularly see students propose a “systematic review” when what they actually need is a scoping review, or vice versa. The distinction has real methodological consequences.

| Review Type | Purpose | Exhaustive Search? | Quality Appraisal? | Typical Output |
|---|---|---|---|---|
| Systematic Literature Review | Answer a specific research question with synthesis | Yes | Yes (critical) | Evidence synthesis, recommendations |
| Narrative Review | Summarise and discuss a broad topic | No | Rarely | Overview, commentary |
| Scoping Review | Map extent, range, and nature of evidence | Yes | Optional | Evidence map, knowledge gaps |
| Meta-Analysis | Statistically pool results from multiple studies | Yes | Yes (mandatory) | Pooled effect size, forest plots |
| Rapid Review | Time-limited synthesis for policy/practice | Partial | Abbreviated | Expedited evidence brief |

The scoping review deserves special attention because its profile has risen sharply since the JBI (Joanna Briggs Institute) updated its methodological guidance. For an excellent overview of how scoping and systematic reviews differ in practice, the JBI guidance on conducting and reporting scoping reviews is the most current authoritative resource available.

A meta-analysis, meanwhile, is technically a type of systematic review — it layers a statistical synthesis on top of the standard systematic review process. You can’t run a meta-analysis without first completing a rigorous systematic search and quality appraisal. What you can do is complete a systematic review without running a meta-analysis, particularly when studies are too heterogeneous to pool statistically.
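To make "pooling" and "too heterogeneous" concrete, here is a minimal sketch of inverse-variance (fixed-effect) pooling with Cochran's Q and the I² statistic. The effect sizes and standard errors are invented for illustration, and the function name is hypothetical; a real analysis would normally use a dedicated meta-analysis package and would often prefer a random-effects model.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance pooled estimate plus Cochran's Q and I-squared (in percent)."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need at least two studies with matching effects and standard errors")
    weights = [1 / se ** 2 for se in std_errors]      # more precise studies weigh more
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1 / total_weight)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, pooled_se, q, i_squared


# Three made-up studies with identical precision but very different effects.
pooled, pooled_se, q, i_squared = pool_fixed_effect([0.2, 0.5, 0.8], [0.1, 0.1, 0.1])
print(f"pooled={pooled:.2f}, SE={pooled_se:.3f}, Q={q:.1f}, I2={i_squared:.1f}%")
```

In this toy example I² comes out near 89%, the kind of value that would usually prompt a team to question whether a single pooled estimate is meaningful. Where exactly to draw that line is a judgement call that belongs in the protocol.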

Research Methodology and Citation Standards in SLRs

Research methodology and citation standards for higher education aren’t just background knowledge for a systematic review; they’re operational requirements. Every included study in your SLR must be cited accurately and consistently, and the frameworks you rely on, whether for reporting (PRISMA), appraising reviews (AMSTAR), or rating evidence certainty (GRADE), are themselves methodological documents that require precise citation.

The four dominant citation systems — APA 7th, MLA 9th, Chicago 17th, and Harvard — each handle systematic review citations slightly differently, particularly for database searches, grey literature, and preregistered protocols. Our dedicated guide on research methodology and citation standardisation covers the specific formatting requirements for each system in detail, including how to cite PROSPERO registrations and unpublished datasets.

For the systematic review specifically, citation accuracy takes on extra weight. Citation manipulation — the practice of inappropriately inflating citation counts — is a documented problem in academic publishing. The COPE Discussion Document on Citation Manipulation (2019) identifies patterns like coercive citation, self-citation rings, and citation stacking that distort the literature your SLR is trying to faithfully represent.

What this means practically: when you’re extracting data from included studies, document exactly which citation system each source uses, and standardise everything to your own protocol before synthesis. Reference managers like Zotero (discussed below) make this tractable at scale. Without one, managing 200+ references consistently is genuinely difficult.

The broader research methodology context matters too. Your SLR sits within an epistemological framework — whether positivist, interpretivist, or pragmatic — that shapes which quality appraisal tools are appropriate. For a fuller treatment of how paradigm choice interacts with study design decisions, the 2026 Research Methodology Guide provides the foundational grounding that SLR authors often need before they can make defensible protocol decisions.

PRISMA 2020: The Reporting Standard You Cannot Ignore

PRISMA — Preferred Reporting Items for Systematic Reviews and Meta-Analyses — is the reporting standard that most journals now require for systematic review submissions. The 2020 update was substantial, adding items related to study registration, searching methods, and evidence certainty that weren’t in the original 2009 statement.

The updated statement, published in The BMJ in 2021, introduced a revised 27-item checklist and a new flow diagram that accounts for studies identified through database searching separately from those found through other methods (Page et al., 2021). The full paper is available at The BMJ: PRISMA 2020 statement and is essential reading before you submit to any indexed journal.

Here’s where it gets interesting: PRISMA compliance isn’t just about satisfying reviewers. The checklist functions as a quality control framework during the review process itself. Teams that complete the PRISMA checklist prospectively — at the protocol stage rather than retrospectively before submission — consistently produce better-structured reviews with fewer methodological gaps.

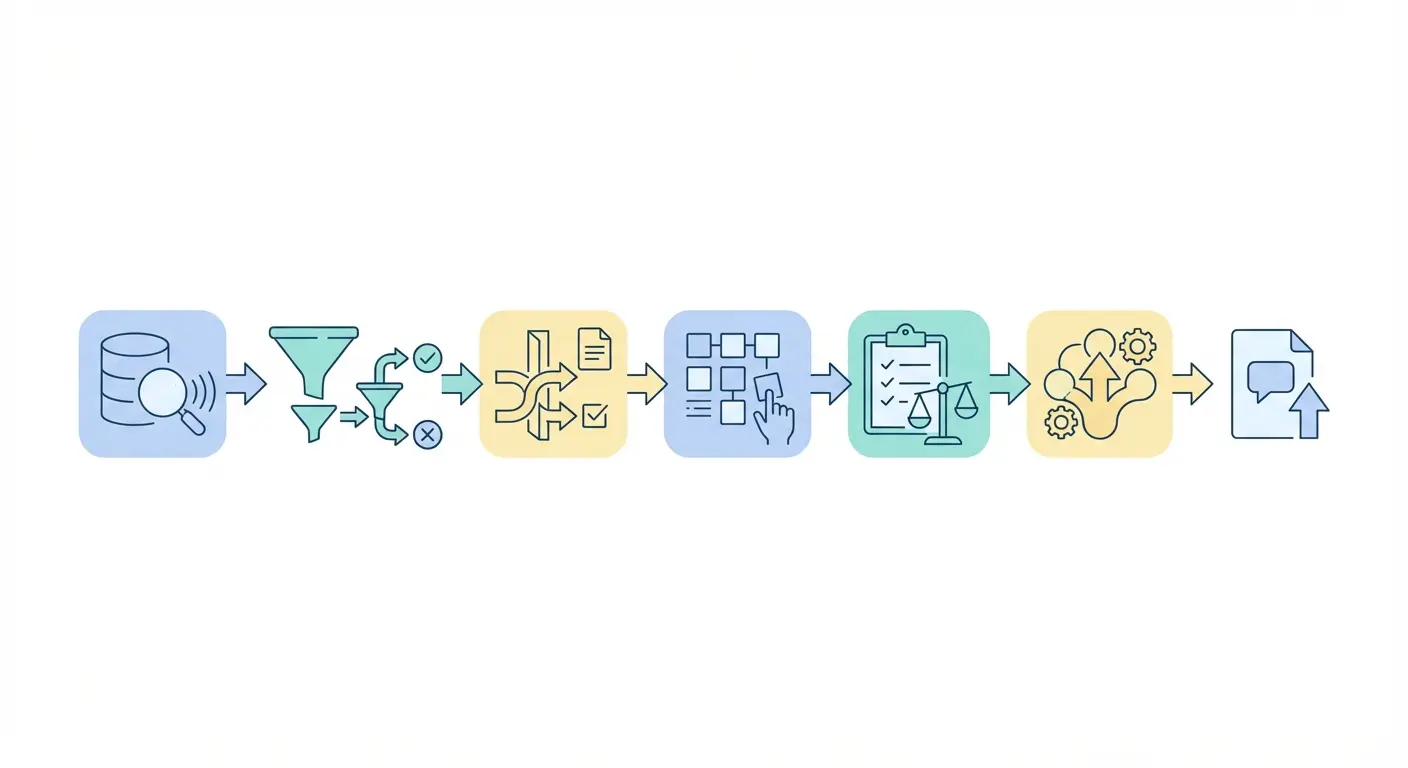

The PRISMA flow diagram — the funnel graphic showing records identified, screened, assessed, and included — is one of the most recognised figures in academic publishing. Getting it right means tracking every number at every stage, which is why you need to set up your screening tool before you run your first search, not after.
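One way to keep the flow diagram numbers honest is to record only the raw counts and derive every downstream box from them. The sketch below does that; the class name, field names, and figures are illustrative assumptions, not part of PRISMA itself.

```python
from dataclasses import dataclass, field


@dataclass
class FlowCounts:
    """Raw tallies for a PRISMA-style flow diagram (field names are illustrative)."""
    identified: int
    duplicates_removed: int
    excluded_title_abstract: int
    full_text_not_retrieved: int
    excluded_full_text: dict = field(default_factory=dict)  # exclusion reason -> count

    @property
    def screened(self) -> int:
        return self.identified - self.duplicates_removed

    @property
    def sought_for_retrieval(self) -> int:
        return self.screened - self.excluded_title_abstract

    @property
    def assessed_full_text(self) -> int:
        return self.sought_for_retrieval - self.full_text_not_retrieved

    @property
    def included(self) -> int:
        return self.assessed_full_text - sum(self.excluded_full_text.values())

    def validate(self) -> None:
        """Raise if any derived stage is negative, which signals a mis-recorded count."""
        for stage in ("screened", "sought_for_retrieval", "assessed_full_text", "included"):
            if getattr(self, stage) < 0:
                raise ValueError(f"{stage} is negative; recheck the counts feeding it")


# Made-up numbers purely for illustration.
flow = FlowCounts(
    identified=1500,
    duplicates_removed=320,
    excluded_title_abstract=1050,
    full_text_not_retrieved=5,
    excluded_full_text={"wrong population": 60, "wrong outcome": 40},
)
flow.validate()
print(flow.screened, flow.sought_for_retrieval, flow.assessed_full_text, flow.included)
```

Because each box is computed rather than typed in, a late correction to one count propagates to every later stage automatically.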

Step-by-Step SLR Process: From Protocol to Publication

A systematic literature review follows a sequence that cannot be reversed. Unlike qualitative research, where some iteration between data collection and analysis is expected, the SLR process is deliberately linear — any deviation from the pre-registered protocol must be declared and justified. Here’s the sequence that holds up to scrutiny.

- Formulate your research question. Use the PICO (Population, Intervention, Comparator, Outcome) framework for clinical questions, or SPIDER (Sample, Phenomenon of Interest, Design, Evaluation, Research type) for qualitative reviews. A poorly defined question is the most common reason SLRs fail at peer review.

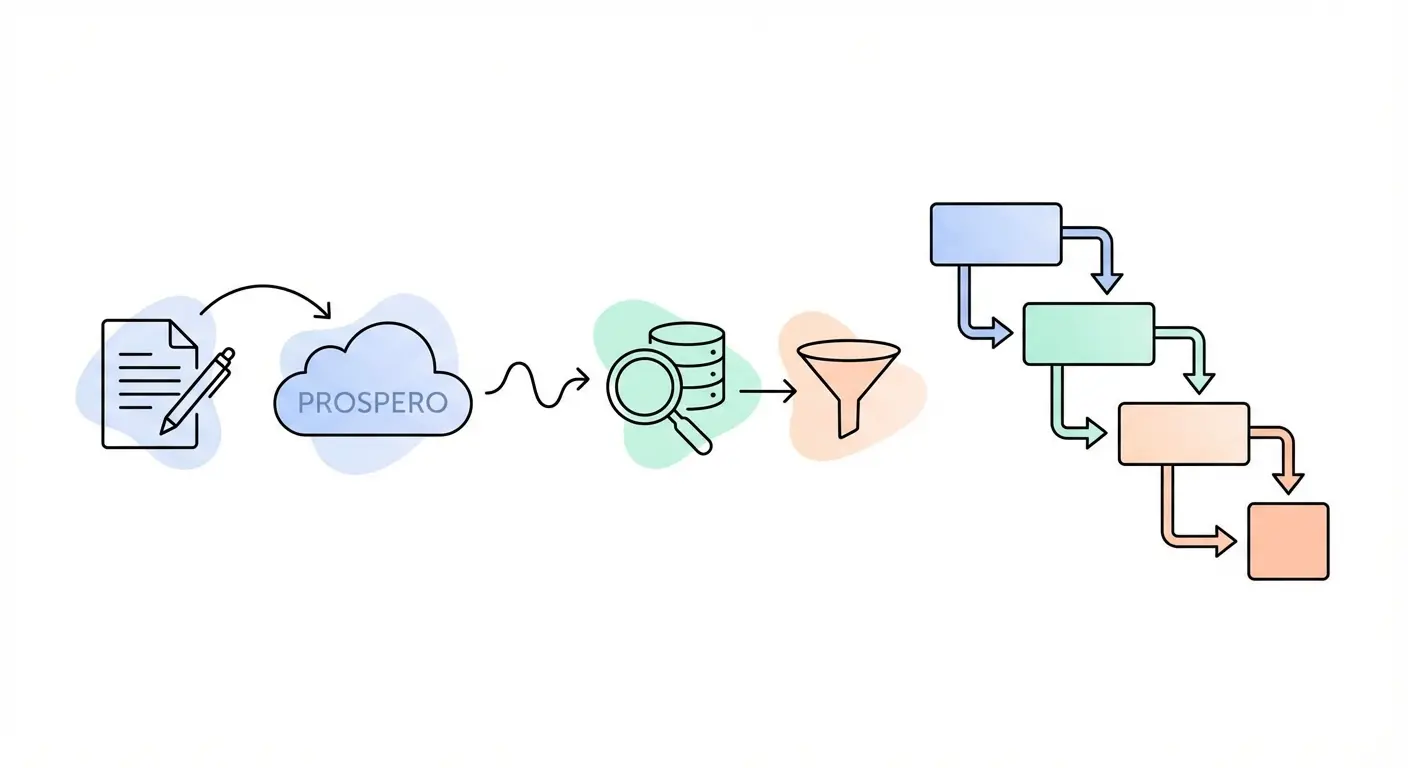

- Draft and register your protocol. Submit your protocol to PROSPERO (International Prospective Register of Systematic Reviews) before running your searches. Registration creates a public, timestamped record of your methods and prevents outcome-switching accusations. PROSPERO is free, but it only accepts reviews with a health-related outcome; reviews outside that scope need another public registry, such as OSF Registries.

- Define eligibility criteria. Specify inclusion and exclusion criteria using the same framework as your question (PICO/SPIDER). Criteria must be objective — avoid vague terms like “high-quality studies” without defining what that means operationally.

- Develop and run your search strategy. Search at minimum 3–5 relevant databases, plus grey literature sources. Document every search string, database, date, and filter applied. Consult a subject librarian — this is one of those steps that pays back tenfold.

- Screen titles and abstracts (Stage 1). Two independent reviewers screen all retrieved records. Disagreements are resolved by consensus or a third reviewer. Use a dedicated screening tool to manage this at scale.

- Screen full texts (Stage 2). Retrieve and assess full texts of all records that passed Stage 1. Record reasons for exclusion at this stage — PRISMA 2020 requires this.

- Extract data from included studies. Use a pre-piloted data extraction form. Extract study characteristics, methods, results, and quality indicators. Double-extraction reduces error.

- Appraise study quality. Apply a validated appraisal tool appropriate to your study designs: CASP for qualitative studies, Cochrane RoB 2 for randomised trials, ROBINS-I for non-randomised studies, or AXIS for cross-sectional studies.

- Synthesise findings. Depending on data type and heterogeneity: narrative synthesis, thematic synthesis, meta-analysis, or framework synthesis. Choose before you see the data — post-hoc synthesis decisions are a bias risk.

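- Write up and report. Complete the PRISMA 2020 checklist. Report the full search strategy for every database, register, and website searched (PRISMA 2020 asks for all of them, where the 2009 statement asked for at least one), your PROSPERO registration number, and all deviations from your registered protocol with reasons.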

Database Search Strategy and Boolean Logic

Your search strategy is arguably the most technically demanding component of a systematic review — and the one most often done badly. A poorly constructed search that misses 30% of the relevant literature doesn’t just weaken your review; it invalidates it.

The foundation is Boolean logic: combining search terms using AND, OR, and NOT operators. OR expands your search (synonyms, alternate spellings), AND narrows it (combining concepts), and NOT excludes irrelevant material (used sparingly — it’s easy to accidentally exclude relevant records). Truncation (asterisk: educat* retrieves educate, education, educational) and phrase searching (inverted commas) refine results further.
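The OR-within-concepts, AND-between-concepts pattern can be sketched in a few lines. The helper names and the concept blocks below are hypothetical, and the output is generic Boolean syntax: each database has its own field tags, truncation rules, and phrase handling, so treat the result as a draft to adapt rather than a string to paste in unchanged.

```python
def quote_term(term: str) -> str:
    """Wrap multi-word phrases in double quotes; leave single words and truncations bare."""
    return f'"{term}"' if " " in term else term


def build_query(concepts: list[list[str]]) -> str:
    """OR the synonyms inside each concept block, then AND the blocks together."""
    blocks = []
    for synonyms in concepts:
        joined = " OR ".join(quote_term(term) for term in synonyms)
        blocks.append(f"({joined})")
    return " AND ".join(blocks)


# Hypothetical concept blocks for an illustrative education question.
concepts = [
    ["educat*", "teaching", "higher education"],
    ["feedback", "formative assessment"],
]
query = build_query(concepts)
print(query)
# (educat* OR teaching OR "higher education") AND (feedback OR "formative assessment")
```

Keeping the concept blocks as data also gives you a record of exactly which synonyms went into each search, which helps when documenting the strategy.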

Which databases you search depends on your discipline, but a defensible minimum for most fields includes:

- MEDLINE via PubMed — biomedical and health sciences

- Embase — pharmacological and clinical research

- CINAHL — nursing and allied health

- PsycINFO — psychology and behavioural sciences

- Web of Science — multidisciplinary, strong citation data

- Scopus — broad coverage, strong in STEM

- Google Scholar — grey literature and preprints (not a primary database, but useful supplementary source)

- JSTOR — humanities and social sciences

Grey literature — unpublished studies, conference proceedings, government reports, theses, and preprints — is essential for reducing publication bias. Studies with null or negative findings are less likely to be published in peer-reviewed journals, so excluding grey literature systematically skews your synthesis toward positive results. Search ProQuest Dissertations, OpenGrey, and relevant institutional repositories.

Peer-review your search strategy before running it. The PRESS checklist (Peer Review of Electronic Search Strategies) provides a structured framework for a second librarian or colleague to assess your strategy. The PRISMA-S extension (Rethlefsen et al., 2021) asks authors to report any peer review of the search, and several high-impact journals require this step.

Screening, Data Extraction, and Quality Appraisal

Screening hundreds or thousands of records is where systematic reviews most often collapse under their own weight. The intellectual challenge is real — maintaining consistent application of eligibility criteria across 1,500 titles and abstracts, often weeks apart and with a second reviewer who may interpret borderline cases differently, demands a level of process discipline that most researchers haven’t needed before.

The answer is structure — specifically, using a validated screening tool rather than a spreadsheet. The most widely used free tool is Rayyan, a purpose-built systematic review screening application that supports blinded dual screening, conflict identification, and PRISMA flow diagram generation. It integrates with most reference managers and exports data in formats compatible with statistical software.

Inter-rater reliability — the degree of agreement between two independent screeners — should be calculated and reported. Cohen’s Kappa (κ) is the standard metric: κ above 0.6 indicates substantial agreement; κ below 0.4 suggests your eligibility criteria need sharpening. Low agreement at title/abstract screening isn’t a failure — it’s diagnostic information that tells you to clarify criteria before the full-text stage.
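For readers who want to see what κ actually measures, here is a minimal sketch of Cohen's Kappa for two screeners: observed agreement corrected for the agreement expected by chance. The function name and the ten screening decisions are invented for illustration; statistical packages offer tested implementations for real use.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of records")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout; treated as full agreement
    return (observed - expected) / (1 - expected)


# Made-up title/abstract decisions for ten records.
reviewer_1 = ["include", "include", "exclude", "exclude", "exclude",
              "include", "exclude", "exclude", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude", "exclude",
              "include", "exclude", "include", "exclude", "exclude"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 2))
```

Here the reviewers agree on 8 of 10 records, yet κ is only about 0.52, because most of that agreement would be expected by chance when exclusions dominate. That gap is why raw percentage agreement tends to flatter screening reliability.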

Data extraction forms should be piloted on 5–10 included studies before full deployment. What looks obvious on paper (“extract the sample size”) becomes ambiguous fast — is that the randomised sample, the analysed sample, or the sample at follow-up? Pre-piloting surfaces these ambiguities before they corrupt your dataset.
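A typed extraction record is one way to force the sample-size question into the open, and it makes disagreements between two extractors easy to list. This is a sketch only: the class, its fields, and the example values are assumptions chosen to mirror the ambiguity described above, not a standard form.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ExtractionRecord:
    """One reviewer's extraction for one study (fields are illustrative)."""
    study_id: str
    design: str
    n_randomised: Optional[int]
    n_analysed: Optional[int]
    n_at_follow_up: Optional[int]
    extractor: str


def disagreements(first: ExtractionRecord, second: ExtractionRecord) -> list:
    """Return the names of fields where two extractors recorded different values."""
    return [
        f.name
        for f in fields(first)
        if f.name != "extractor" and getattr(first, f.name) != getattr(second, f.name)
    ]


# Invented example: the second reviewer confused the analysed and randomised samples.
record_a = ExtractionRecord("Smith2020", "RCT", 120, 112, 98, "reviewer_1")
record_b = ExtractionRecord("Smith2020", "RCT", 120, 120, 98, "reviewer_2")
conflicts = disagreements(record_a, record_b)
print(conflicts)  # ['n_analysed']
```

Splitting "sample size" into three named fields means the pilot surfaces the ambiguity as a concrete conflict rather than a silent inconsistency.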

Quality appraisal is separate from data extraction and serves a different function. You’re not excluding studies for being “low quality” (that’s a different decision, made at the eligibility stage). You’re assessing risk of bias and weighting your conclusions accordingly. A well-conducted SLR reports quality appraisal results transparently and discusses how methodological limitations of included studies affect confidence in the overall evidence.

Citation Management and Reference Tools for Systematic Reviews

Managing 2,000 retrieved records, deduplicating across databases, tracking screening decisions, and maintaining a final reference list that’s formatted consistently — none of this is feasible by hand. Reference management software isn’t optional for a systematic review; it’s load-bearing infrastructure.

Zotero is the most widely recommended free option for systematic reviewers, and for good reason. It handles deduplication well, integrates directly with web browsers for capturing records from Google Scholar, PubMed, and library databases, and exports to RIS and BibTeX formats compatible with Rayyan and statistical software. The group library feature supports collaborative reviews where multiple team members need shared access to the reference pool.

EndNote remains the institutional standard at many universities, particularly for health sciences research, and has more sophisticated deduplication algorithms. Mendeley’s social features are useful but less relevant for systematic review work. The choice matters less than the discipline of using one consistently from the moment you run your first search.

Deduplication deserves special attention. When you search six databases, the same paper often appears multiple times, identified in Web of Science, Scopus, PubMed, and Google Scholar as separate records. The PRISMA 2020 flow diagram reports the number of duplicate records removed before screening, and that number needs to be accurate. Automated deduplication tools (Zotero’s built-in function, or specialised tools like ASySD, the Automated Systematic Search Deduplicator) catch most but not all duplicates; manual checking of flagged near-duplicates is still necessary.
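To show why automated tools catch most but not all duplicates, here is a deliberately simple sketch that matches records on normalised DOI, then on normalised title plus year. It is not how Zotero or ASySD work internally; the function names, record fields, and sample records are all assumptions for illustration.

```python
import re
import unicodedata


def normalise_doi(doi: str) -> str:
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    return re.sub(r"^(https?://(dx\.)?doi\.org/|doi:\s*)", "", doi)


def normalise_title(title: str) -> str:
    """Reduce a title to lower-case ASCII words so punctuation and case do not matter."""
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    text = re.sub(r"[^a-z0-9 ]", " ", text.lower())
    return " ".join(text.split())


def deduplicate(records):
    """Split records into (unique, duplicates) using DOI first, then title and year."""
    seen_dois, seen_titles = set(), set()
    unique, duplicates = [], []
    for record in records:
        doi = normalise_doi(record.get("doi") or "")
        title_key = (normalise_title(record.get("title") or ""), record.get("year"))
        if (doi and doi in seen_dois) or (title_key[0] and title_key in seen_titles):
            duplicates.append(record)
            continue
        if doi:
            seen_dois.add(doi)
        if title_key[0]:
            seen_titles.add(title_key)
        unique.append(record)
    return unique, duplicates


# Invented records standing in for exports from four sources.
records = [
    {"source": "PubMed", "doi": "10.1000/xyz123", "title": "Feedback in Higher Education: A Trial", "year": 2020},
    {"source": "Scopus", "doi": "https://doi.org/10.1000/XYZ123", "title": "Feedback in higher education - a trial", "year": 2020},
    {"source": "Google Scholar", "doi": "", "title": "Feedback in higher education: a trial.", "year": 2020},
    {"source": "Web of Science", "doi": "10.1000/abc999", "title": "Peer assessment outcomes", "year": 2021},
]
unique, duplicates = deduplicate(records)
print(len(unique), len(duplicates))  # 2 2
```

Exact matching like this misses records with a typo in the title or a mismatched year, which is precisely the residue that still needs a manual pass.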

For the citation formatting requirements specific to each major style — whether APA 7th, MLA 9th, Chicago, or Harvard — and how they apply to systematic review reporting, the Purdue OWL Research and Citation Resources remains the most accessible and regularly updated free reference for English-language researchers.

Reproducibility, Preregistration, and Academic Integrity in SLRs

The reproducibility crisis has touched systematic reviews as much as primary research — perhaps more so, given that an irreproducible systematic review poisons downstream evidence. A 2019 study in Research Synthesis Methods found that fewer than 15% of systematic reviews provided sufficient methodological detail to allow full replication (Polanin et al., 2019). That figure is both alarming and fixable.

Preregistration is the most powerful single intervention. When you register your protocol with PROSPERO before searching, you create a public commitment to your methods that makes outcome-switching visible. If your registered protocol said you’d use random effects meta-analysis and you later switched to a narrative synthesis, you must declare and justify that change in your published paper. This transparency is uncomfortable for researchers who discover that the data “doesn’t work” the way they hoped — which is precisely the point.

Protocol deviations aren’t automatically disqualifying. Life is complicated: databases change their interfaces, included study designs turn out to be more heterogeneous than anticipated, a key outcome measure turns out not to be reported consistently across studies. What matters is that deviations are declared, dated, and explained. Unexplained deviations are a red flag for reviewers and a source of legitimate criticism.

Our resource on research methodology and reproducibility covers data management practices, transparent reporting principles, and version control approaches that apply directly to systematic review teams — particularly those working collaboratively across institutions.

Academic integrity in the SLR context also means vigilance about the studies you include. Retracted papers remain in databases, sometimes for years after retraction. Check every included study against the Retraction Watch database before finalising your synthesis. Including a retracted study in a systematic review — even unknowingly — is an integrity issue that has led to high-profile post-publication corrections.
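If you have a CSV export of retraction records, the cross-check itself is a set lookup on DOIs. The sketch below assumes such an export exists locally; the column name `OriginalPaperDOI`, the function names, and every DOI shown are placeholders, so adjust them to whatever your export actually contains.

```python
import csv
import io


def load_retracted_dois(csv_text: str, doi_column: str) -> set:
    """Collect lower-cased, non-empty DOIs from one column of a CSV export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {
        (row.get(doi_column) or "").strip().lower()
        for row in reader
        if (row.get(doi_column) or "").strip()
    }


def flag_retracted(included: list, retracted: set) -> list:
    """Return the included studies whose DOI appears in the retracted set."""
    return [
        study for study in included
        if (study.get("doi") or "").strip().lower() in retracted
    ]


# Placeholder export: the column name and DOIs are invented for illustration.
export = """Title,OriginalPaperDOI
Some retracted trial,10.1000/retracted.001
Another retracted paper,10.1000/RETRACTED.002
"""
included_studies = [
    {"study_id": "Smith2020", "doi": "10.1000/ok.123"},
    {"study_id": "Lee2019", "doi": "10.1000/retracted.002"},
]
retracted = load_retracted_dois(export, doi_column="OriginalPaperDOI")
flagged = flag_retracted(included_studies, retracted)
print([study["study_id"] for study in flagged])  # ['Lee2019']
```

Studies without a DOI pass through unflagged here, so they still need a manual title search.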

The SLR Author’s Checklist: 2026 Edition

This checklist is designed to function as a working document, not a retrospective exercise. Print it. Use it at each stage. Revisit it before submission.

Protocol Stage

- ☐ Research question formulated using PICO, SPIDER, or equivalent framework

- ☐ Protocol drafted including eligibility criteria, search strategy outline, data extraction plan, and synthesis method

- ☐ Protocol registered on PROSPERO (record the registration number)

- ☐ Ethical approval obtained if required by institution

- ☐ Team roles assigned: lead reviewer, second reviewer, third reviewer for conflicts

Search Stage

- ☐ Subject librarian consulted and search strategy peer-reviewed (PRESS checklist)

- ☐ Minimum 3–5 databases searched, plus grey literature sources

- ☐ All search strings, filters, and dates documented in full

- ☐ Records imported to reference manager and deduplicated

- ☐ Total records before and after deduplication recorded for PRISMA flow diagram

Screening Stage

- ☐ Dual independent screening of titles/abstracts completed

- ☐ Inter-rater reliability calculated (Cohen’s Kappa or percentage agreement)

- ☐ Conflicts resolved by consensus or third reviewer

- ☐ Full texts retrieved for all Stage 1 inclusions

- ☐ Dual full-text screening completed; reasons for exclusion recorded

Data Extraction and Appraisal Stage

- ☐ Data extraction form piloted on 5–10 studies and refined

- ☐ Dual extraction completed for all included studies

- ☐ Validated quality appraisal tool selected and applied

- ☐ Risk of bias assessed and documented for each included study

- ☐ All included studies checked against Retraction Watch database

Synthesis and Reporting Stage

- ☐ Synthesis method matches pre-registered protocol (or deviations declared)

- ☐ PRISMA 2020 checklist completed item-by-item

- ☐ PRISMA flow diagram generated with accurate numbers at each stage

- ☐ Full search strategies for all databases, registers, and websites included in manuscript or supplement

- ☐ PROSPERO registration number reported in abstract or methods

- ☐ All citations formatted consistently using chosen style (APA 7th, Chicago, etc.)

- ☐ GRADE framework applied if making clinical or policy recommendations

Frequently Asked Questions

How long does a systematic literature review take to complete?

A rigorous systematic literature review typically takes 6–18 months from protocol registration to manuscript submission, depending on the breadth of the research question, volume of retrieved records, and team size. Smaller scoped reviews with experienced teams can be completed in 6 months; broad reviews covering multiple databases and thousands of records regularly take 12–18 months. Budget time honestly — underestimating is the most common planning error.

What is the difference between a systematic review and a meta-analysis?

A systematic review is a structured synthesis of evidence using transparent, reproducible methods. A meta-analysis is a statistical technique applied within a systematic review to pool quantitative results across studies into a single effect estimate. All meta-analyses are built on systematic reviews, but not all systematic reviews include a meta-analysis — statistical pooling is only appropriate when included studies are sufficiently similar in population, intervention, and outcome.

Do I need to register my systematic review protocol with PROSPERO?

PROSPERO registration is strongly recommended and increasingly required by high-impact journals, though it remains formally optional. Registration provides a timestamped public record of your methods before data collection, protecting you against accusations of outcome-switching and reducing the risk of unintentional duplication of existing reviews. Register before running your searches — post-hoc registration provides significantly weaker methodological protection and is flagged by many journals.

How many databases should I search for a systematic review?

Conduct guidance such as the Cochrane Handbook recommends searching multiple databases to ensure comprehensive coverage, and PRISMA 2020 requires you to report every source you searched. A defensible minimum is 3–5 discipline-relevant databases plus at least one grey literature source. For health sciences, searching only MEDLINE misses approximately 30–50% of relevant evidence available in Embase or CINAHL. Consult a subject librarian to identify the most appropriate databases for your specific topic; the right combination depends heavily on your research question and discipline.

Can a single researcher conduct a systematic review alone?

PRISMA 2020 and Cochrane guidance both recommend dual independent screening and data extraction to reduce bias — this is difficult for a sole reviewer to achieve credibly. Solo systematic reviews are conducted in practice (particularly by doctoral candidates under resource constraints), but journals increasingly require disclosure of single-reviewer screening and treat it as a methodological limitation. If working alone, use rigorous piloting of eligibility criteria, document all decisions, and consider consulting a second reviewer for a random 10–20% sample to estimate reliability.
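Drawing that 10–20% sample reproducibly matters, because a hand-picked subset invites the very bias the check is meant to detect. A minimal sketch, assuming records carry integer IDs; the function name, default fraction, and seed are arbitrary illustrative choices:

```python
import random


def sample_for_second_reviewer(record_ids, fraction=0.1, seed=2026):
    """Draw a reproducible random subset of records for an independent second screen."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in the interval (0, 1]")
    ids = list(record_ids)
    if not ids:
        return []
    k = max(1, round(len(ids) * fraction))
    rng = random.Random(seed)  # a fixed seed lets others regenerate the same sample
    return sorted(rng.sample(ids, k))


# 500 hypothetical record IDs, 10% sample.
sample = sample_for_second_reviewer(range(1, 501), fraction=0.1)
print(len(sample))  # 50
```

Reporting the seed and fraction alongside the agreement statistic lets a reader regenerate exactly which records were double-screened.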

What citation style should I use for a systematic review?

The citation style for a systematic review is typically determined by your target journal or institution: APA 7th edition is standard in psychology, education, and social sciences; Vancouver (numbered) style dominates health sciences journals; Chicago is common in humanities. What matters most is absolute consistency across all references and correct citation of methodological tools like PRISMA, PROSPERO, and quality appraisal instruments. Use a reference manager (Zotero, EndNote) to enforce consistency automatically across 50–300+ references.

Build Your Research Methodology Authority

A systematic literature review is the most demanding — and most rewarding — form of secondary research in academia. The researchers who do it well aren’t necessarily the most brilliant; they’re the most methodologically disciplined. That discipline is learnable.

To deepen your methodological foundations beyond the SLR specifically, these resources form a natural progression:

- 📚 Research Methodology Guide 2026 — the paradigm, design, and sampling context that underpins your SLR protocol decisions

- 📋 Research Methodology and Citation Standardisation — APA 7th, MLA 9th, Chicago, and Harvard applied to academic research reporting

- 🔁 Research Methodology Reproducibility Tips — transparent reporting, data management, and version control for systematic review teams

Share this guide with your research group, department mailing list, or doctoral programme coordinator. The researchers who bookmark it tend to be the ones who cite it later.

For a video introduction to these concepts, What Are Systematic Reviews? provides an accessible orientation for those new to the method.

Key References and Further Reading

- Page, M. J., McKenzie, J. E., Bossuyt, P. M., Boutron, I., Hoffmann, T. C., Mulrow, C. D., … & Moher, D. (2021). The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ, 372, n71. https://www.bmj.com/content/372/bmj.n71

- Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.). (2023). Cochrane Handbook for Systematic Reviews of Interventions (Version 6.4). Cochrane. https://training.cochrane.org/handbook

- Rethlefsen, M. L., Kirtley, S., Waffenschmidt, S., Ayala, A. P., Moher, D., Page, M. J., & Koffel, J. (2021). PRISMA-S: an extension to the PRISMA statement for reporting literature searches in systematic reviews. Journal of the Medical Library Association, 109(2), 174–200.

- Polanin, J. R., Pigott, T. D., Espelage, D. L., & Grotpeter, J. K. (2019). Best practice guidelines for abstract screening large-evidence systematic reviews and meta-analyses. Research Synthesis Methods, 10(3), 330–342.

- Committee on Publication Ethics (COPE). (2019, July). Discussion document: Citation manipulation. COPE

A rigorous systematic literature review — one that honours research methodology and citation standards for higher education at every stage — is among the most enduring contributions a researcher can make. The checklist, the PRISMA framework, and the tools described here exist not to bureaucratise scholarship, but to protect it. Use them accordingly.

Last updated: 2026. This guide is reviewed annually to reflect updates to PRISMA reporting standards, database access, and citation style guides.
